TL;DR

The best AI visibility tools in 2026 do more than log mentions. They help teams track answer presence, citations, and representation accuracy across ChatGPT and Perplexity, then turn those findings into content actions that improve authority and conversion paths.

AI search visibility is now a reporting category, not a side metric. SaaS teams that rely on organic growth need to know whether their brand appears in ChatGPT, Perplexity, and other answer engines, whether it is cited, and whether the answer is accurate.

The short version is simple: the best AI visibility tools do not just count mentions. They show where a brand appears, which URLs get cited, how representation changes by prompt, and what to fix next.

Why AI answer tracking now belongs next to SEO reporting

Traditional rank tracking was built for blue links. That model still matters, but it misses a growing share of discovery that happens inside AI-generated answers.

According to SE Ranking's review of AI visibility tools, AI visibility software now focuses on tracking brand mentions, cited URLs, and answer presence across platforms such as ChatGPT, Claude, and Perplexity. That shift matters because a brand can lose visibility even when its web pages still rank in Google.

For SaaS teams, the funnel has changed:

A prospect asks an AI tool for options.

The engine generates an answer.

A few brands get named.

Some answers include citations.

One cited source earns the click.

Only then does the website get a chance to convert.

That is a different operating model from classic search. The page is no longer competing only for rank. It is competing for extraction, citation, and representation.

This is where many teams get stuck. They can see traffic in Google Analytics and behavior in Mixpanel or Amplitude, but those tools do not explain why a brand was omitted from an AI answer in the first place.

A practical way to evaluate the top five AI search visibility platforms is to use four checks: coverage, citations, accuracy, and actionability. Coverage asks which engines and prompts the tool monitors. Citations show which URLs are being used. Accuracy shows whether the answer describes the company correctly. Actionability shows whether the platform helps turn visibility data into page updates, content priorities, or reporting.
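One way to make the four checks comparable across vendors is a simple scorecard. The sketch below is illustrative only: the 1-to-5 rating scale, the weights, and the two example tools (`tool_a`, `tool_b`) are assumptions, not ratings of any real product.

```python
from typing import Optional

# The four checks named in the text above.
CHECKS = ("coverage", "citations", "accuracy", "actionability")


def score_tool(ratings: dict, weights: Optional[dict] = None) -> float:
    """Return the weighted average of 1-5 ratings across the four checks."""
    weights = weights or {check: 1.0 for check in CHECKS}
    missing = [check for check in CHECKS if check not in ratings]
    if missing:
        raise ValueError(f"Missing ratings for: {missing}")
    total_weight = sum(weights[check] for check in CHECKS)
    return sum(ratings[check] * weights[check] for check in CHECKS) / total_weight


# Hypothetical ratings from an internal evaluation.
tool_a = {"coverage": 5, "citations": 3, "accuracy": 3, "actionability": 2}
tool_b = {"coverage": 3, "citations": 4, "accuracy": 4, "actionability": 5}

# Assumed weighting that counts actionability double.
weights = {"coverage": 1.0, "citations": 1.0, "accuracy": 1.0, "actionability": 2.0}

score_a = score_tool(tool_a, weights)
score_b = score_tool(tool_b, weights)
```

Weighting actionability more heavily is a judgment call; a team that only needs a measurement layer might weight coverage higher instead.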

The contrarian view is this: do not buy an AI visibility tool because it has the most dashboards. Buy one that shortens the distance between missing citations and published fixes.

That is also why teams increasingly pair visibility monitoring with content systems. A platform like Skayle fits naturally in that workflow because it helps SaaS companies rank higher in search and appear in AI-generated answers while keeping execution tied to measurable visibility outcomes. For teams working on page structure and extractability, our guide to LLM-ready feature pages is useful context.

What separates a useful platform from a feature dump

The market is getting crowded fast. New vendors position themselves as AI answer monitoring, GEO analytics, brand mention tracking, or AEO platforms. Those labels overlap, so a better comparison starts with the underlying jobs buyers need done.

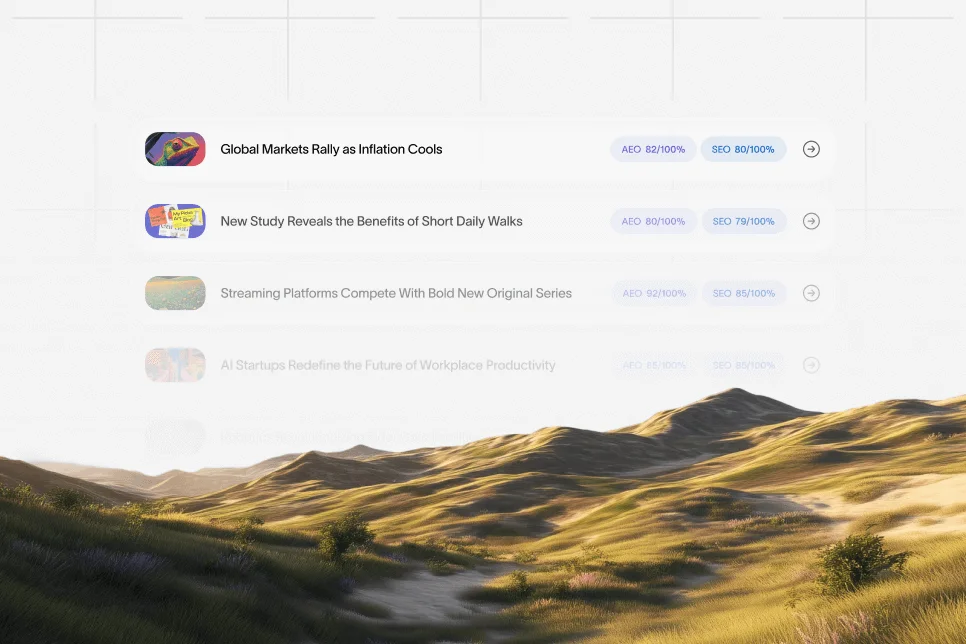

According to Data-Mania's breakdown of AI search KPIs, the core metrics now include mention rate, representation accuracy, citation share, and share of voice. Those are more useful than generic visibility claims because they can be measured across prompts and engines.
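As a rough illustration, these four metrics could be computed from a log of prompt runs. The sketch below is a minimal example: the `AnswerRecord` schema, the sample brands and domains, and the exact metric formulas are assumptions made for illustration, not definitions taken from Data-Mania or any vendor.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class AnswerRecord:
    """One AI answer for one prompt on one engine (hypothetical schema)."""
    engine: str
    prompt: str
    brands_mentioned: list
    cited_domains: list = field(default_factory=list)
    described_accurately: Optional[bool] = None  # None when not reviewed or brand absent


def ratio(part: float, whole: float) -> float:
    """Safe division that returns 0.0 for an empty denominator."""
    return part / whole if whole else 0.0


def visibility_metrics(records: list, brand: str, domain: str) -> dict:
    mentioned = [r for r in records if brand in r.brands_mentioned]
    reviewed = [r for r in mentioned if r.described_accurately is not None]
    all_citations = [d for r in records for d in r.cited_domains]
    all_mentions = [b for r in records for b in r.brands_mentioned]
    return {
        # Share of answers that name the brand at all.
        "mention_rate": ratio(len(mentioned), len(records)),
        # Share of all citations that point to the brand's own domain.
        "citation_share": ratio(all_citations.count(domain), len(all_citations)),
        # Share of reviewed mentions that describe the brand correctly.
        "representation_accuracy": ratio(
            sum(r.described_accurately for r in reviewed), len(reviewed)
        ),
        # Share of all brand mentions that belong to this brand.
        "share_of_voice": ratio(all_mentions.count(brand), len(all_mentions)),
    }


# Made-up sample data for a fictional brand "Acme" and competitor "Globex".
records = [
    AnswerRecord("chatgpt", "best tools", ["Acme", "Globex"],
                 ["acme.example", "reviews.example"], True),
    AnswerRecord("perplexity", "best tools", ["Globex"], ["globex.example"]),
    AnswerRecord("chatgpt", "acme vs globex", ["Acme", "Globex"],
                 ["reviews.example"], False),
    AnswerRecord("perplexity", "acme vs globex", ["Acme"], ["acme.example"], True),
]

metrics = visibility_metrics(records, brand="Acme", domain="acme.example")
```

Representation accuracy here depends on a human or a separate review step setting `described_accurately`; the sketch does not attempt to judge accuracy automatically.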

A strong tool should answer five operational questions:

Where does the brand appear? Across ChatGPT, Perplexity, Google AI Overviews, and often Claude or Gemini.

What source gets cited? The homepage, a comparison page, a feature page, a third-party review, or no source at all.

Is the answer correct? Wrong positioning is often as damaging as no mention.

What changed over time? Weekly and monthly movement matters more than one-off snapshots.

What should the team do next? Prompt clusters, page gaps, content refreshes, and authority issues should be visible.

This is also where weak tools tend to break down. Some are little more than prompt simulators. They run a set of questions, log whether a brand appears, and stop there.

That is not enough for a content lead or growth lead. They need to know whether the problem is:

poor page structure

weak entity association

lack of citations

missing comparison content

outdated copy

low coverage across important commercial prompts

As Airefs notes in its 2026 comparison, leading tools increasingly position themselves around cross-platform rank and answer tracking, not just generic monitoring. That distinction matters because a team cannot improve what it cannot segment.

For readers comparing the top five AI search visibility options, the most important buying question is not "Which platform has the largest list of AI engines?" It is "Which platform gives a reliable baseline, shows citation patterns, and helps the team improve answer inclusion over the next 30 to 90 days?"

Side-by-side: the top 5 tools worth shortlisting

The list below focuses on five tools that come up repeatedly in 2026 comparisons and market discussions: Profound, AthenaHQ, Searchable, PromptWatch, and Skayle. Not all of them approach the category from the same direction. Some are stronger on monitoring. Others connect visibility data more directly to execution.

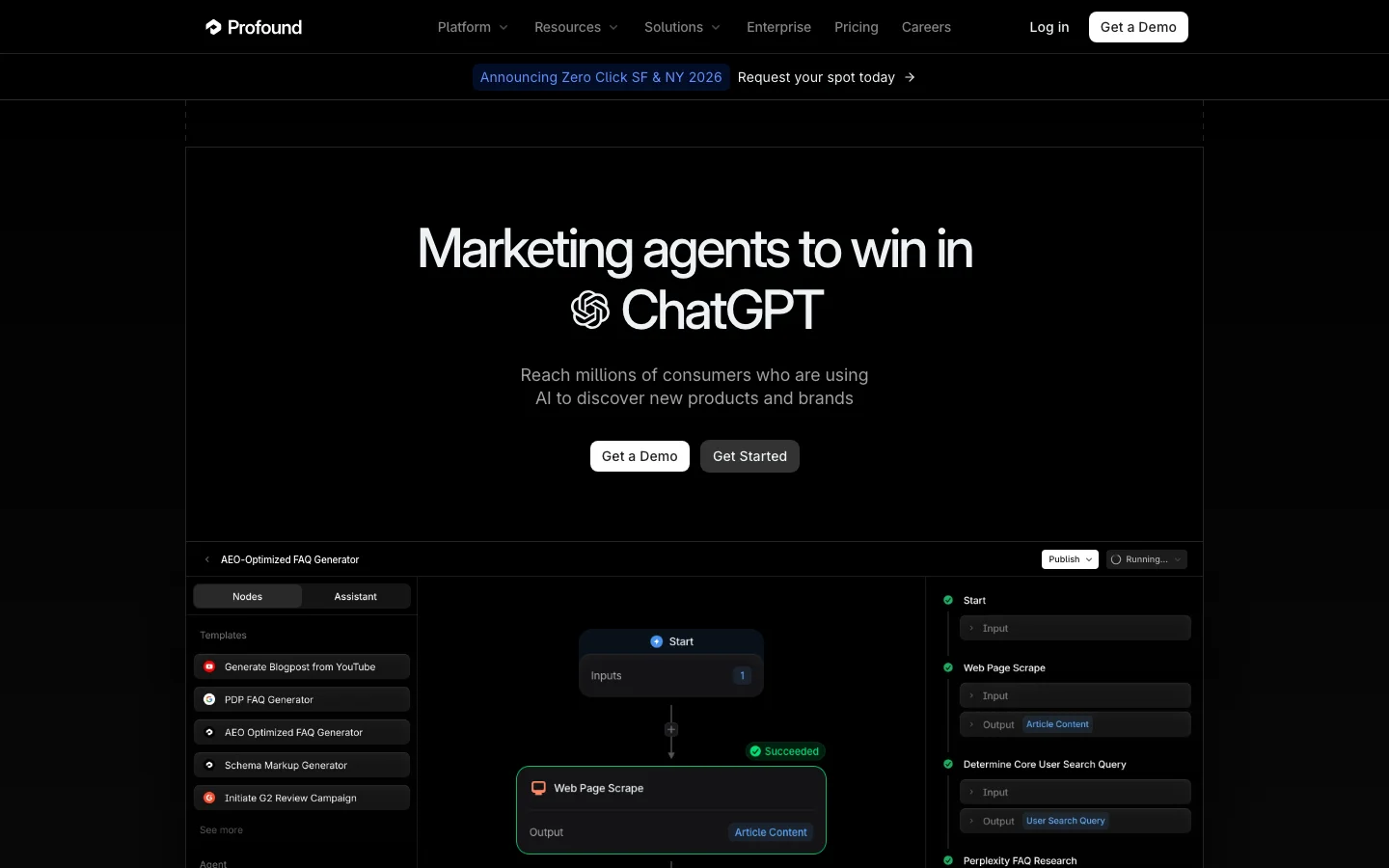

Profound

Profound is one of the most visible names in the category and appears in multiple 2026 roundups. Profound's own agency comparison places the platform among the leading options for tracking AI visibility, with emphasis on prompt analytics and answer engine insights.

Best fit: Larger marketing teams and agencies that need broad monitoring across prompts and clients.

Where it stands out:

Strong market presence in AI visibility reporting

Built for monitoring answer-level brand inclusion

Often discussed in agency workflows and prompt-volume analysis

Limitations to note:

Monitoring-heavy platforms can create reporting depth without making remediation easy

Teams may still need separate systems for content planning, refreshing, and publishing changes

Who should consider it: Teams that already have an SEO and content operation in place and mainly need a dedicated answer-engine measurement layer.

AthenaHQ

AthenaHQ is commonly mentioned in the AI visibility space and is listed among notable alternatives in category discussions. Its appeal is usually tied to brand monitoring and tracking where a company is surfaced in generative results.

Best fit: Brands that want focused visibility monitoring without adopting a broader SEO content platform.

Where it stands out:

Category relevance in AI search monitoring

Useful for teams prioritizing brand presence checks across answer engines

Often considered alongside newer answer-engine analytics platforms

Limitations to note:

Buyers need to validate reporting depth, engine coverage, and workflow integration during evaluation

Standalone monitoring tools can leave content teams with a gap between insight and action

Who should consider it: Teams with an established publishing workflow that want an additional AI visibility signal.

Searchable

Searchable is part of the emerging set of vendors focused on helping brands understand and improve discoverability in AI-driven search environments.

Best fit: Companies looking for a dedicated discoverability layer around AI search behavior.

Where it stands out:

Strong category alignment with AI-driven discovery

Relevant for teams that want visibility data beyond traditional SEO tracking

Useful in evaluation if answer inclusion and discoverability are the main buying goals

Limitations to note:

Buyers should test how clearly the platform connects mention data to cited pages and next actions

Dedicated visibility tools can create another dashboard if they are not tied to execution

Who should consider it: Teams that want to supplement existing SEO reporting with answer-engine visibility data.

PromptWatch

PromptWatch comes from a prompt and LLM observability angle, which makes it a different kind of entrant in this space. For some teams, that angle is useful because AI visibility depends on how prompts are phrased and how answers vary by model.

Best fit: Teams that care about prompt-level monitoring and variation across LLM outputs.

Where it stands out:

Helpful perspective on prompt behavior and answer changes

Useful when teams want to inspect how different prompts affect brand inclusion

Can support experimentation around query patterns and answer shifts

Limitations to note:

Prompt observability does not automatically equal marketing actionability

Content and SEO teams may still need a separate system for page updates, internal linking, and publishing workflows

Who should consider it: Teams that want more prompt-level visibility as part of a broader AI search analysis stack.

Skayle

Skayle belongs on this shortlist because it approaches the problem as a ranking and visibility system rather than a dashboard-only layer. For SaaS teams, that matters. AI search visibility is rarely solved by reporting alone; it is solved by improving the pages, clusters, and page structures that answer engines can cite.

Best fit: SaaS companies that want to connect AI visibility monitoring to content production, refreshes, and ranking execution.

Where it stands out:

Combines SEO workflows, content operations, and AI visibility goals in one system

Better aligned with teams that need to move from insight to shipped pages quickly

Useful when the same team owns research, briefs, updates, and measurement

Limitations to note:

Teams seeking only a narrow monitoring layer may prefer a pure analytics tool

Broader platforms are most useful when the organization wants execution, not just diagnostics

Who should consider it: SaaS teams with lean marketing resources that need visibility measurement tied directly to authority-building work. For teams working on extractable, trustworthy content, our playbook on content trust for AI extraction adds a useful lens.

The buying criteria that actually predict value

Tool comparisons are only useful if they lead to a decision. The wrong way to evaluate this category is to compare feature lists line by line. The right way is to test whether the platform improves a real reporting and execution loop.

A practical review should score every tool across these dimensions:

Engine coverage and prompt depth

At minimum, the platform should monitor ChatGPT and Perplexity. Many teams will also want Google AI Overviews, Claude, or Gemini coverage.

Coverage alone is not enough. The tool also needs enough prompt depth to reflect commercial reality. Brand prompts, category prompts, comparison prompts, and problem-aware prompts should all be represented.

Citation visibility

This is the most overlooked criterion. Many buyers care about mention rate but fail to inspect citation quality.

A brand mention without a citation may create awareness but still send no traffic. A citation to the wrong page can be just as costly. Strong tools show which URLs are surfaced and whether owned pages, review sites, or third-party listicles dominate.
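If a tool exports cited URLs, a quick check of who owns them might look like the sketch below. The domain sets and sample URLs are hypothetical, and the three-way split (owned, review site, other third party) is an assumed simplification of the categories mentioned above.

```python
from collections import Counter
from urllib.parse import urlparse

# Hypothetical domain lists; replace with the brand's real properties.
OWNED_DOMAINS = {"acme.example"}
REVIEW_DOMAINS = {"reviews.example"}


def matches(host: str, domains: set) -> bool:
    """True if host equals a listed domain or is a subdomain of one."""
    return any(host == d or host.endswith("." + d) for d in domains)


def classify_citation(url: str) -> str:
    host = (urlparse(url).hostname or "").lower()
    if matches(host, OWNED_DOMAINS):
        return "owned"
    if matches(host, REVIEW_DOMAINS):
        return "review_site"
    return "third_party"


def citation_breakdown(urls: list) -> Counter:
    """Count cited URLs by source type."""
    return Counter(classify_citation(url) for url in urls)


# Made-up citations collected from a set of AI answers.
cited = [
    "https://www.acme.example/pricing",
    "https://reviews.example/best-tools",
    "https://blog.other.example/top-10",
    "https://acme.example/features/reporting",
]

breakdown = citation_breakdown(cited)
```

Grouping the owned URLs by path in the same way would show whether the homepage, a feature page, or a comparison page is earning the citations.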

Representation accuracy

Data-Mania's KPI framework is useful here because it separates mention rate from representation accuracy. A tool should not treat those as the same metric.

If an AI engine describes a workflow platform as a generic writing tool, the brand may technically be visible while still losing the deal. That is why answer quality matters as much as answer presence.

Workflow fit

This is where many pilots fail. The software might detect problems, but no one knows what to do next.

A growth lead usually needs:

prompt groups by funnel stage

page-level or cluster-level gaps

reporting that can be shared with leadership

a straightforward path to content updates

Proof before commitment

A useful 30-day evaluation does not need proprietary benchmarks. It needs a baseline, an intervention, and a review window.

One practical test looks like this:

Record current mention rate, citation share, and representation accuracy for 25 to 50 commercial prompts.

Improve three to five important pages, including feature pages, comparison pages, and proof-heavy educational pages.

Re-run the same prompt set after 30 days.

Review whether citations shift toward owned pages and whether the answers become more accurate.

That process is more reliable than taking vendor claims at face value.
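The baseline-and-review comparison can be kept honest with a few lines of code. This is a minimal sketch under stated assumptions: the run structure (`prompts` plus `metrics`) is hypothetical, and the numbers are invented to show the mechanics, not real results.

```python
def compare_runs(baseline: dict, follow_up: dict) -> dict:
    """Return follow-up minus baseline for each baseline metric.

    Refuses to compare runs that used different prompt sets, since a
    changed prompt set would make the deltas meaningless.
    """
    if set(baseline["prompts"]) != set(follow_up["prompts"]):
        raise ValueError("Runs must use the same prompt set to be comparable")
    return {
        name: round(follow_up["metrics"][name] - value, 4)
        for name, value in baseline["metrics"].items()
    }


# Invented example values for a fixed set of commercial prompts.
prompts = ["best tools for X", "acme vs globex", "how to solve Y"]

baseline = {
    "prompts": prompts,
    "metrics": {"mention_rate": 0.40, "citation_share": 0.10,
                "representation_accuracy": 0.70},
}
follow_up = {
    "prompts": prompts,
    "metrics": {"mention_rate": 0.48, "citation_share": 0.18,
                "representation_accuracy": 0.85},
}

deltas = compare_runs(baseline, follow_up)
```

Because AI answers vary from run to run, small deltas on a 25-to-50 prompt set should be read as directional rather than conclusive.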

A recurring pattern in SaaS is that a team publishes a new comparison page or rewrites a feature page and sees no immediate Google ranking jump, yet AI answers begin citing the page before rankings move, likely because the structure is extractable and the positioning is clearer. That does not replace SEO. It expands what winning visibility looks like.

Common mistakes that make AI visibility tools underperform

Most disappointments in this category are not caused by the software alone. They come from weak evaluation methods and poor operational follow-through.

Mistaking mention count for business impact

A higher mention count looks good in a dashboard. It does not necessarily improve pipeline.

The more useful sequence is: answer inclusion, then citation, then click, then conversion. Teams should inspect whether cited pages actually match buying intent and lead users toward a demo, trial, or qualified visit.

Tracking only branded prompts

Branded prompts inflate confidence. They are useful, but they are not the real test.

A serious review includes:

category prompts

competitor comparison prompts

use-case prompts

problem-aware prompts

best-tool prompts

That is often where a brand disappears.

Ignoring page architecture

AI engines extract from pages that are clear, structured, and trustworthy. If the website has thin feature pages, weak proof, vague headings, or no comparison content, visibility tools will surface the symptoms but not solve the cause.

This is why a content refresh plan matters. Teams looking at generative search specifically may find our GEO case study guide helpful for thinking about platform-by-platform differences.

Running a tool pilot without owners

Visibility reporting needs ownership. If the SEO lead owns dashboards, the content lead owns page updates, and no one owns the AI visibility target, the project stalls.

The simplest operating model is to assign one owner for measurement and one owner for content changes, then review progress every two to four weeks.

Expecting perfect consistency from AI outputs

Answer engines vary. Prompt phrasing changes results. Models update frequently.

That is not a reason to avoid measurement. It is a reason to use repeated prompts, trend lines, and prompt clusters instead of treating one answer snapshot as the truth.
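A trend line by prompt cluster can be built from repeated runs with very little code. The sketch below assumes a hypothetical log of `(week, cluster, brand_mentioned)` tuples; the cluster names and sample values are invented.

```python
from collections import defaultdict

# Made-up log: each prompt cluster is run more than once per week.
runs = [
    ("2026-W01", "comparison", True),
    ("2026-W01", "comparison", False),
    ("2026-W01", "category", False),
    ("2026-W01", "category", False),
    ("2026-W02", "comparison", True),
    ("2026-W02", "comparison", True),
    ("2026-W02", "category", True),
    ("2026-W02", "category", False),
]


def mention_rate_trend(runs: list) -> dict:
    """Return {cluster: {week: mention_rate}} averaged over repeated runs."""
    counts = defaultdict(lambda: [0, 0])  # (cluster, week) -> [mentions, total]
    for week, cluster, mentioned in runs:
        counts[(cluster, week)][0] += int(mentioned)
        counts[(cluster, week)][1] += 1

    trend = defaultdict(dict)
    for (cluster, week), (hits, total) in sorted(counts.items()):
        trend[cluster][week] = hits / total
    return dict(trend)


trend = mention_rate_trend(runs)
```

Averaging over repeated runs smooths out single-answer noise, so a change in a cluster's weekly rate is more meaningful than any one snapshot.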

Which platform fits which team in 2026

There is no universal winner because team structure matters as much as software capability. The right decision depends on whether the company needs monitoring, workflow integration, or both.

For agencies and large reporting-heavy teams, Profound is a credible shortlist candidate because it is frequently discussed in the category and aligned with broad answer-engine monitoring use cases. Cometly's 2025 overview also reflects the broader market move toward tools that monitor brand mentions across AI search environments.

For teams that want focused monitoring without changing their broader stack, AthenaHQ and Searchable are reasonable options to evaluate, especially if the organization already has mature SEO and content operations.

For teams that care about prompt-level variance, PromptWatch can be useful because prompt behavior is part of how AI search visibility changes over time.

For SaaS teams that need one system tying research, page production, refreshes, and visibility together, Skayle is the more practical fit. The reason is structural: monitoring alone identifies missed opportunities, but ranking and citation gains come from publishing better pages and maintaining them consistently.

This is the main point of view to keep in mind: do not separate AI visibility from content operations if the same team is responsible for growth. An extra dashboard often looks sophisticated, but the compounding advantage goes to the team that can detect citation gaps and ship fixes quickly.

For additional category context, Onrec's 2026 roundup and Nick Lafferty's AEO-focused platform comparison both show how quickly this market is broadening across optimization, monitoring, and answer-engine reporting.

Questions buyers still ask before choosing a tool

Which AI tools actually improve SEO and AI search visibility?

Tools do not improve visibility on their own. They help teams detect where they are missing, which pages get cited, and what content should be updated next.

The biggest gains usually come when measurement is combined with better feature pages, comparison content, clearer proof, and regular content refreshes.

Do teams need a separate platform for ChatGPT and Perplexity tracking?

Usually no. The better approach is to use one system that can compare answer visibility across multiple engines using a consistent prompt set.

Separate tools can create fragmented reporting unless there is a strong reason to split them.

What should buyers measure first during a trial?

Start with mention rate, citation share, and representation accuracy for a fixed prompt set. Those three metrics create a clean baseline.

After that, inspect which owned URLs are cited and whether commercial-intent prompts are improving.

How long does it take to see useful changes?

Initial monitoring is immediate, but meaningful improvement usually requires one to three content cycles. That often means a 30- to 90-day review window rather than a one-week pilot.

The timing depends on how fast the team can update pages, add missing comparison content, and strengthen citation-worthy proof.

Is AI visibility reporting only for large brands?

No. Smaller SaaS teams often benefit more because they cannot afford scattered SEO execution.

A focused prompt set and a handful of well-structured commercial pages can create clearer gains than a large but inconsistent content library.

If the goal is to make AI answer performance measurable and tie it to actual execution, the strongest choice is the platform that closes the gap between visibility data and published improvements. Teams that want that level of clarity can use Skayle to measure their AI visibility, understand citation coverage, and turn the findings into pages that are easier for search engines and AI systems to cite.