Measure who AI engines actually trust.

Mentions are easy to misread. AI Citation Benchmark separates visibility from trust by showing when your brand is actually cited, how competitors compare, and which source types shape authority in your category.

Most AI visibility tools stop too early

They tell you whether your brand showed up in an answer. That is surface-level. A brand can be visible without being trusted. It can be mentioned without being selected. It can appear in the answer without being the source that shaped the answer.

That is exactly why AI Citation Benchmark exists.

Skayle does not just show whether you are present. It shows whether AI engines actually cite you, how often competitors win that trust instead, and whether your authority profile is improving or slipping over time.

What this page should show

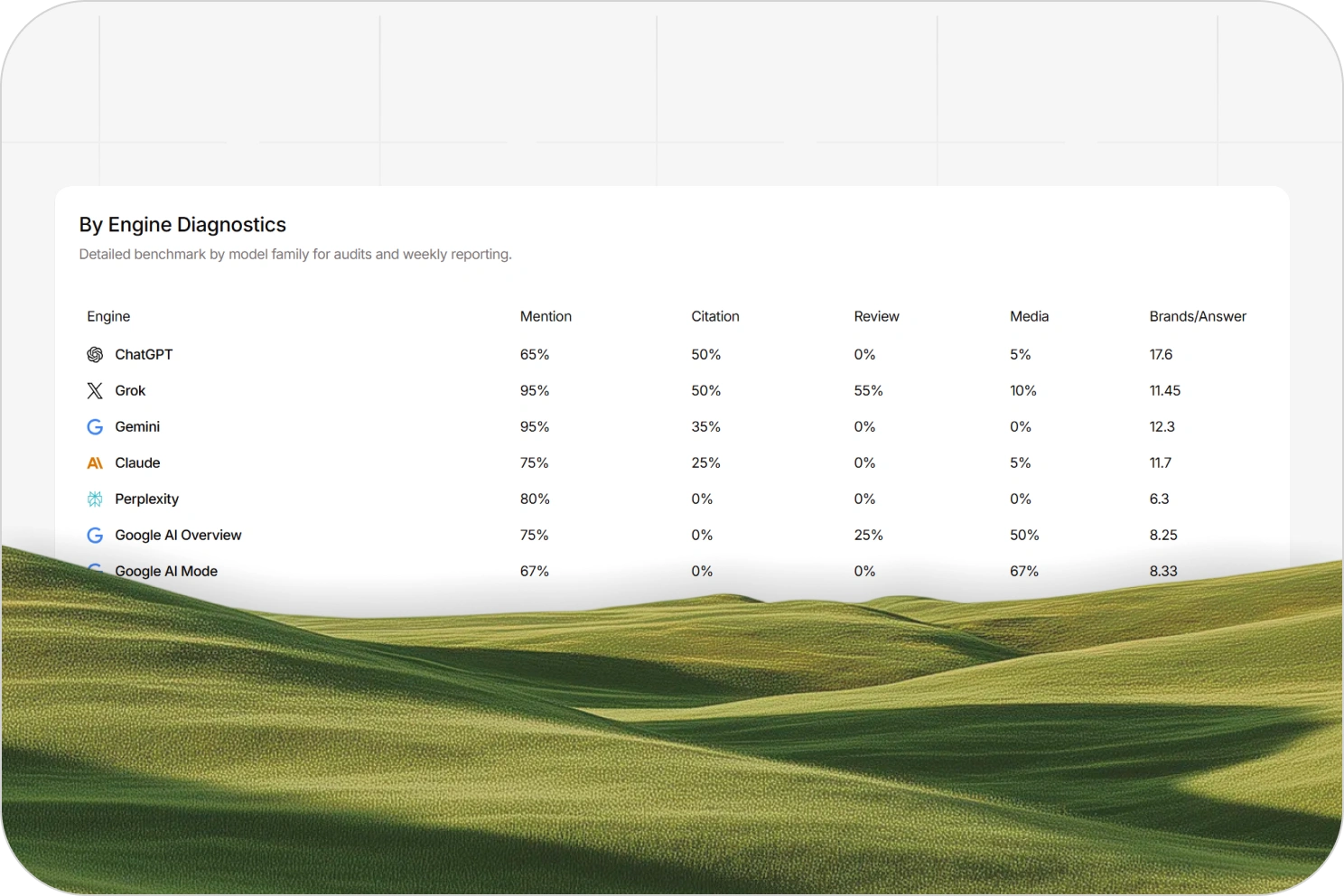

Citation Rate

Presence is not enough. Citation rate shows when AI engines treat your brand as a source, not just part of the conversation.

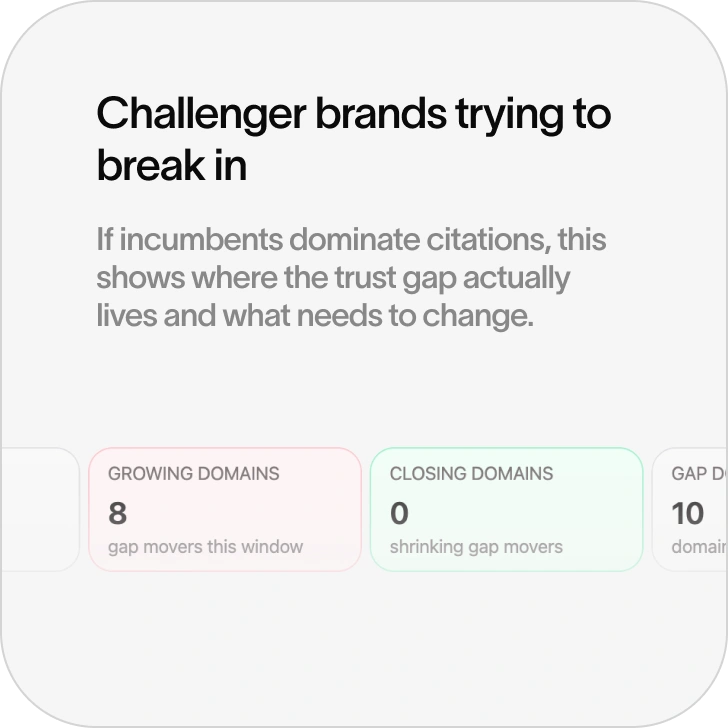

Competitor Comparison

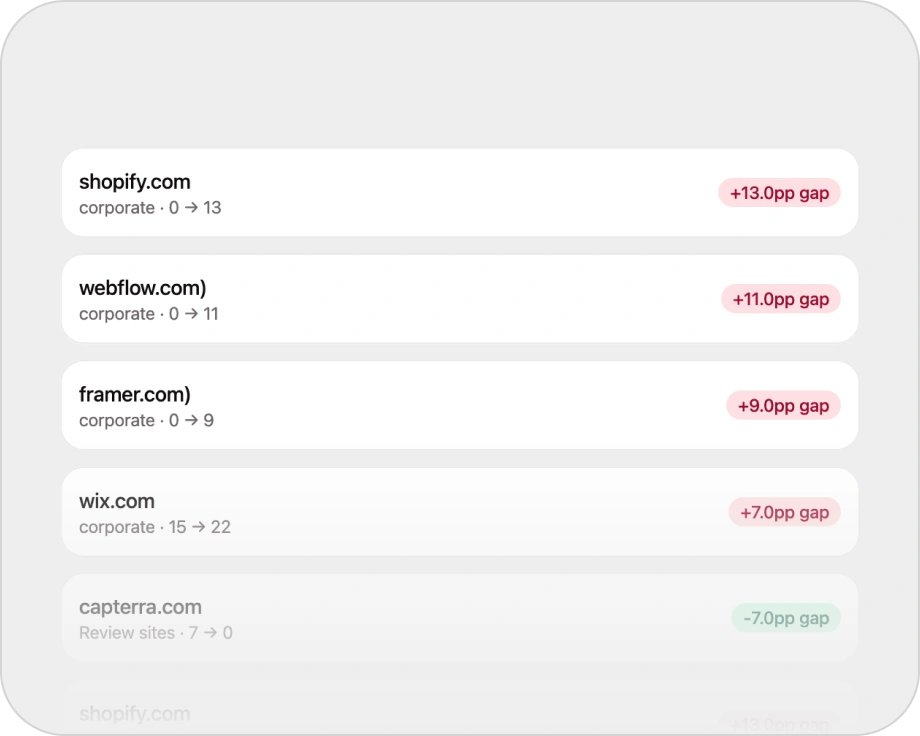

See whether competitors are being trusted more often, where they are gaining share, and how the benchmark is moving over time.

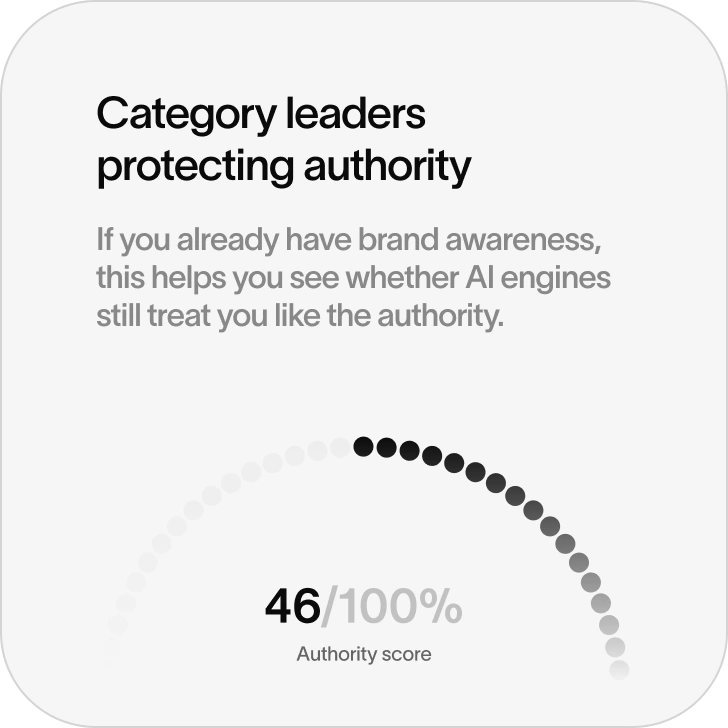

Authority Score

Understand whether your visibility profile is strengthening or weakening across the prompts and engines that matter to your category.

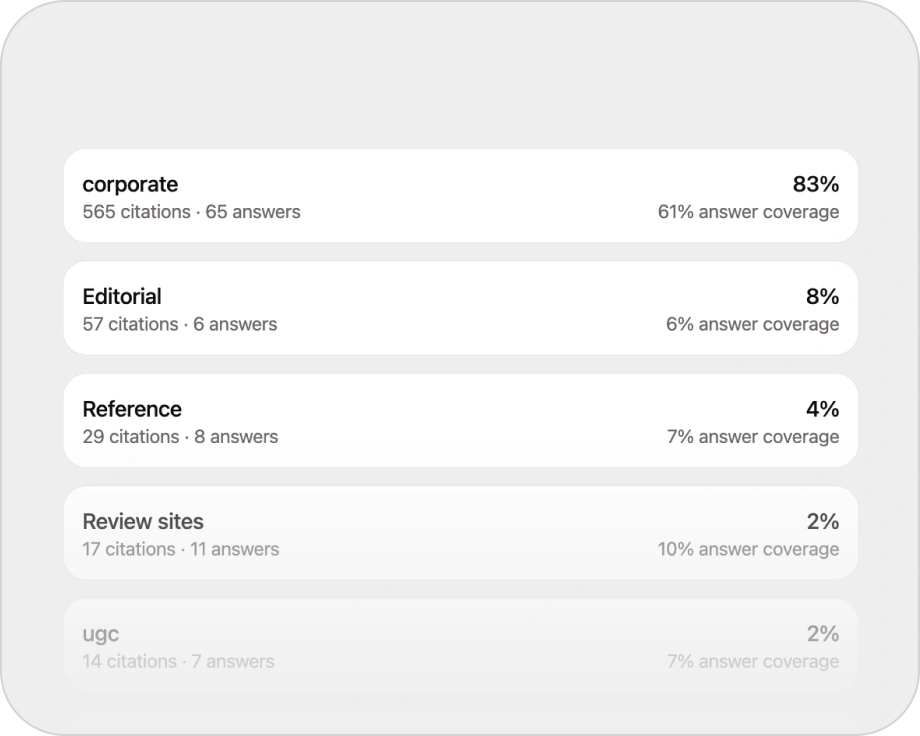

Source Trust Mix

See which source types are shaping answers, such as editorial and review-driven signals, and where your authority profile is thin.

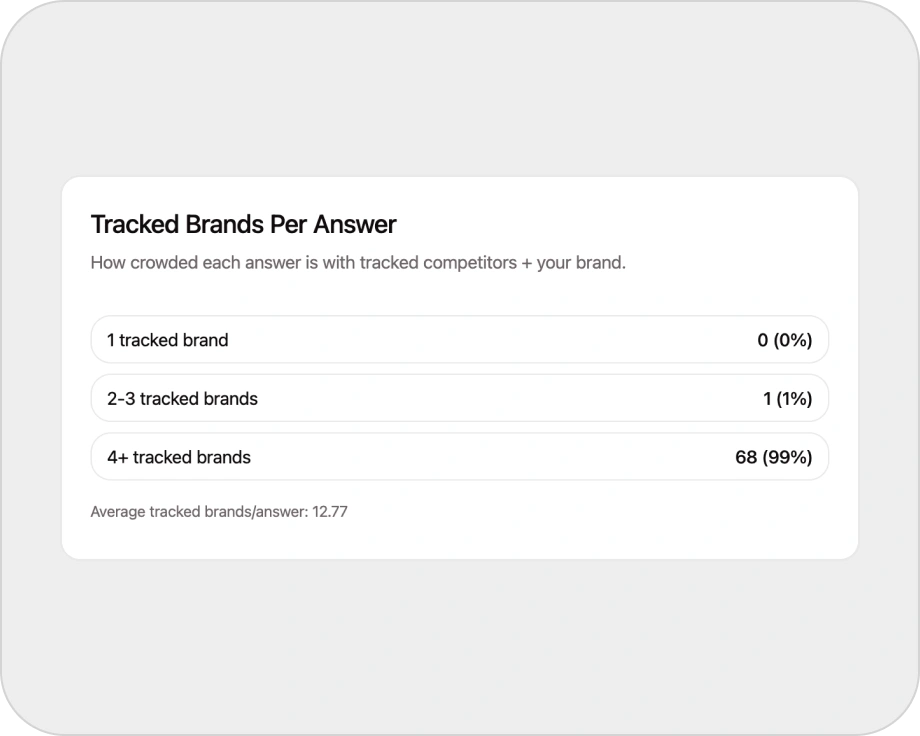

Tracked Brands

Understand who tends to appear together inside AI-generated answers and which brands repeatedly dominate the trust layer.

Trend Movement

AI authority is not static. Benchmark movement shows whether you are closing the gap or falling further behind.

Why it matters

AI engines do not simply rank pages. They choose sources.

That changes the game. If competitors are cited more often, they are shaping category understanding even when your brand still shows up occasionally. A mention can feel like progress. A citation is what actually matters. This page should make that distinction impossible to miss.

Skayle turns AI visibility into something commercially useful. Instead of asking, “Did we appear?”, teams can ask better questions: Are we actually being trusted? Which competitors are winning citation share? Which source types are reinforcing them? Is the gap getting smaller or bigger? What should we create next to improve citation coverage?

How it works

Four steps to turning benchmark gaps into action.

Track the prompts that matter

Skayle runs the prompts and topic clusters your team actually cares about across the AI engines you track.

Separate mentions from citations

The system distinguishes between simple presence and actual citation behavior, so teams can measure trust instead of guessing at it.

Benchmark against competitors

See how your brand performs against the other brands showing up in the same answer sets, not in isolation.

Turn gaps into action

Use the benchmark to decide what content, supporting pages, or authority-building assets deserve attention next.
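
To make the mention-versus-citation distinction concrete, here is a minimal Python sketch of how the two rates and a competitor citation share could be computed from a set of tracked answers. It is an illustration only, not Skayle's implementation: the `Answer` record, the `rates` and `citation_share` helpers, the brand names, and the sample data are all hypothetical, and it assumes each answer has already been labeled with the brands it mentions and the brands it cites.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Answer:
    """One AI-generated answer to a tracked prompt (hypothetical record shape)."""
    prompt: str
    engine: str
    mentioned: frozenset[str]  # brands named anywhere in the answer
    cited: frozenset[str]      # brands actually used as a cited source


def rates(answers: list[Answer], brand: str) -> tuple[float, float]:
    """Return (mention_rate, citation_rate) for one brand across all answers."""
    total = len(answers)
    if total == 0:
        return 0.0, 0.0
    mentions = sum(brand in a.mentioned for a in answers)
    citations = sum(brand in a.cited for a in answers)
    return mentions / total, citations / total


def citation_share(answers: list[Answer], brands: list[str]) -> dict[str, float]:
    """Split all citations earned by the tracked brands into per-brand shares."""
    counts = {b: sum(b in a.cited for a in answers) for b in brands}
    total = sum(counts.values())
    return {b: (c / total if total else 0.0) for b, c in counts.items()}


# Hypothetical sample: "acme" is mentioned often but cited rarely.
ANSWERS = [
    Answer("best crm", "engine-a", frozenset({"acme", "rival"}), frozenset({"rival"})),
    Answer("crm pricing", "engine-a", frozenset({"acme"}), frozenset({"acme"})),
    Answer("best crm", "engine-b", frozenset({"acme", "rival"}), frozenset({"rival"})),
    Answer("crm reviews", "engine-b", frozenset({"rival"}), frozenset()),
]

mention_rate, citation_rate = rates(ANSWERS, "acme")
share = citation_share(ANSWERS, ["acme", "rival"])
print(f"acme mention rate: {mention_rate:.2f}, citation rate: {citation_rate:.2f}")
print({brand: round(value, 2) for brand, value in share.items()})
```

In this made-up sample, the brand appears in three of four answers but is cited in only one, while the competitor takes two of the three citations. That gap between presence and trust is what the benchmark is meant to surface.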

Measure trust, not just mentions.

AI Citation Benchmark shows whether AI engines actually cite your brand, where competitors win trust instead, and what to fix next.