TL;DR

Content trust is an extraction problem: if AI systems can’t pull clean answers, they won’t cite you. Build answer-first structure, stable definitions, evidence, cluster consistency, and technical extractability, then measure citation coverage and conversions.

Content trust is no longer a “quality” debate—it’s an extraction problem. If an AI system can’t reliably pull a clean answer from a page, it won’t cite it, and that page won’t become part of the new discovery funnel.

Content trust is the degree to which an AI system can extract a claim from your page, verify it against surrounding context, and feel safe citing it. For SaaS teams, that trust has to be engineered into structure, evidence, and site mechanics—not implied.

Content trust is the reliability layer between rankings and citations

AI answers don’t behave like a traditional SERP. A user asks a question, the model synthesizes an answer, and only then are a small number of sources cited, if any. That changes the job of content.

A useful way to ground the concept is to separate:

Information extraction (can the system pull structured facts from text?)

Authority selection (does the system treat your source as “safe enough” to cite?)

User conversion (does the click land on a page that completes the job?)

IBM defines information extraction as pulling structured information from semi-structured or unstructured text, which is the core mechanical step behind many AI answer experiences; see IBM’s explanation of information extraction. In other words: the AI can only trust what it can cleanly extract.

ABBYY frames “Content Intelligence” as using AI to encode semantic metadata and make unstructured content processable (see ABBYY’s primer on Content Intelligence). That framing matters because “trust” is often just a proxy for “processable plus consistent.”

For SaaS SEO in 2026, content trust is best treated as an operational property. It should be measurable across:

Extraction success (does the answer pull cleanly?)

Citation eligibility (does the page look like a source, not an opinion blob?)

Citation coverage (are you cited across the prompts that matter?)

Click and conversion (do citations turn into qualified actions?)

The funnel to design for is now: impression → AI answer inclusion → citation → click → conversion. If the content only optimizes for “rankings,” it often fails in the middle.

What this guide covers, in order:

How AI systems decide what to extract

A concrete model for building content trust into a page

A workflow that turns drafts into citeable sources

How to measure trust with SEO + AI visibility signals

What “content trust” means in cybersecurity (Docker) and what that teaches marketers

Common failure modes and fixes

A lot of teams already have pieces of this. The gap is that the pieces aren’t connected, so trust can’t compound.

How AI systems decide what to extract (and what they ignore)

Most content teams assume the “best writing” wins. In extraction contexts, the most extractable writing wins.

In practice, LLMs and AI answer systems rely on signals like concept hierarchy, formatting, repetition, and clear information order. SEO Hacker describes how structure (headings, lists, tables) increases the likelihood that AI systems select and cite content; see SEO Hacker’s guide to structuring content for AI extraction.

Three patterns show up repeatedly when pages fail to get cited:

1) Claims are not anchored to stable objects

If a page says “it improves security” without defining what “it” is, an AI system has to guess. Guessing lowers trust.

Trust improves when:

The subject is named consistently (product, method, category)

Key terms are defined once and reused

Comparisons are made against explicit alternatives

2) The answer is buried inside narrative paragraphs

Extraction engines prefer “answer blocks.” That does not mean FAQ spam. It means a page contains:

A direct answer sentence

A short list of conditions or steps

Optional longer reasoning underneath

A useful editorial test: if the page were turned into a single highlighted snippet, would it still be correct?

3) Internal contradictions aren’t resolved

If your site has five pages defining the same concept differently, your “brand view” becomes ambiguous. Ambiguity is a trust tax.

This is where teams usually focus on “E-E-A-T” as a branding task. The more practical view is: trust is consistency under extraction.

The Content Trust Stack: 5 parts a page needs to be citeable

The fastest way to make content trust operational is to treat it like a stack. A page becomes citeable when it has all five layers.

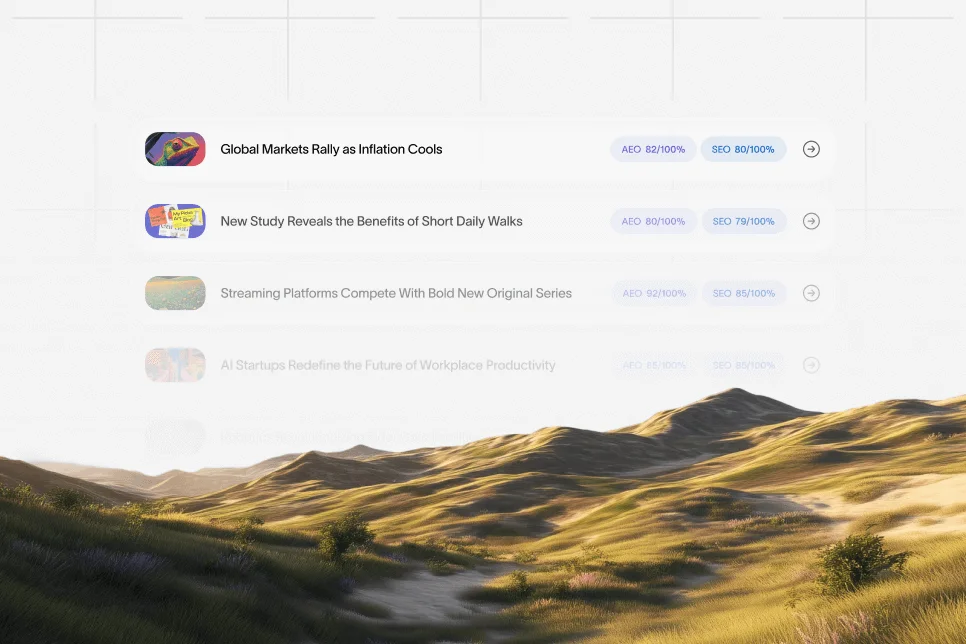

The Content Trust Stack:

Answer-first structure

Entity and term clarity

Evidence and traceability

Consistency across the cluster

Technical extractability

Each layer is fixable. Missing layers explain most “we rank but don’t get cited” situations.

1) Answer-first structure

A citeable page makes its best answer easy to extract.

Do:

Lead each major section with a 40–80 word answer paragraph

Use lists for criteria, steps, and tradeoffs

Put one table where a model can compare options

Don’t:

Open with brand narrative

Write 400-word sections without a summary claim

Hide definitions mid-paragraph

SEO Hacker explicitly calls out that clear answers, lists, and tables improve AI selection versus unstructured paragraphs; see the formatting guidance in SEO Hacker’s AI extraction article.
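A rough way to enforce the answer-first rule in a pre-publish audit is to count the words in each section's opening paragraph. The sketch below assumes the page has already been split into heading/body pairs (the `Section` type is hypothetical). It treats the 40–80 word range as an editorial heuristic, not as a threshold any AI system is known to apply.

```python
from dataclasses import dataclass

# Assumed thresholds, taken from the "40–80 word answer paragraph" guideline.
MIN_WORDS, MAX_WORDS = 40, 80


@dataclass
class Section:
    heading: str
    body: str  # section text, paragraphs separated by blank lines


def first_paragraph(body: str) -> str:
    """Return the first non-empty, blank-line-separated paragraph of a section."""
    for paragraph in body.split("\n\n"):
        if paragraph.strip():
            return paragraph.strip()
    return ""


def audit_answer_first(sections: list[Section]) -> list[str]:
    """Flag sections whose opening paragraph falls outside the answer-block word range."""
    failures = []
    for section in sections:
        word_count = len(first_paragraph(section.body).split())
        if not MIN_WORDS <= word_count <= MAX_WORDS:
            failures.append(f"{section.heading}: {word_count} words")
    return failures


sections = [
    Section("What is content trust?", " ".join(["answer"] * 50) + "\n\nLonger reasoning..."),
    Section("Our story", "We started in a garage.\n\n" + " ".join(["detail"] * 60)),
]
print(audit_answer_first(sections))  # ['Our story: 5 words']
```

The second section fails because it opens with brand narrative, even though a long paragraph follows. That is exactly the "Don't" case above.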

2) Entity and term clarity

Extraction breaks when terms shift. Trust rises when terms are stable.

Practical fixes:

Add a Definitions block near the top for the 3–6 terms that the page depends on

Use the same term everywhere (avoid synonyms in headers)

Add a short “what this is / what this is not” sentence for ambiguous categories
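Heading synonyms are easy to catch mechanically once the preferred terms are written down. This sketch assumes a hand-maintained glossary (the `GLOSSARY` entries are hypothetical examples). It flags only exact phrase matches, so paraphrases still need a human read.

```python
import re

# Hypothetical glossary: preferred term -> synonyms that should not appear in headings.
GLOSSARY = {
    "content trust": ["content credibility", "content reliability"],
    "citation coverage": ["citation share", "mention rate"],
}


def heading_synonyms(headings: list[str], glossary: dict[str, list[str]]) -> list[tuple[str, str, str]]:
    """Return (heading, synonym found, preferred term) for every heading that drifts."""
    hits = []
    for heading in headings:
        lowered = heading.lower()
        for preferred, synonyms in glossary.items():
            for synonym in synonyms:
                # Match the synonym as a whole phrase on word boundaries.
                if re.search(rf"\b{re.escape(synonym)}\b", lowered):
                    hits.append((heading, synonym, preferred))
    return hits


headings = ["How to measure content credibility", "Citation coverage gaps"]
print(heading_synonyms(headings, GLOSSARY))
# [('How to measure content credibility', 'content credibility', 'content trust')]
```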

3) Evidence and traceability

AI systems don’t “believe,” but they do prefer content that looks verifiable.

Evidence doesn’t have to be academic. It can be:

A reproducible procedure

A referenced definition

A traceable source inside your own docs

A helpful mental model comes from data extraction tooling: DataSnipper describes AI extraction features that link extracted values back to source context for defensible review; see DataSnipper’s explanation of AI Extractions. For SEO content, the parallel is simple: claims should have “where it came from” nearby.

4) Consistency across the cluster

Trust is rarely a single-page property. It’s a network property.

This is why topic cluster architecture matters for citations: AI systems form a “view” of your brand by reading multiple connected pages. If definitions, tone, and claims are consistent, extraction confidence rises.

This is also where internal linking stops being a generic SEO task and becomes context shaping. If a page is likely to be cited, it should sit in a cluster with clean linking and non-contradictory support pages. Skayle’s view of this is captured in its guidance on topic cluster architecture and internal linking.

5) Technical extractability

If a system can’t crawl or parse the page, trust signals never get a chance.

Key technical checks:

Server-side rendering, or rendering that bots can execute reliably

Canonicals match the version you want cited

Schema is present where it clarifies entities and page type

No index bloat that dilutes cluster clarity

If technical SEO is treated only as a rankings task, teams miss extraction blockers that specifically affect AI answer engines. That is why a technical pass built for AI extraction is worth doing; Skayle covers many of the crawl and extraction failure modes in its guidance on technical SEO for AI visibility.
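The canonical and indexation checks can be scripted against the raw HTML a crawler receives. This sketch uses only Python's standard `html.parser`. It does not execute JavaScript, so it will not reveal client-side rendering problems. Treating a trailing slash as insignificant is a simplifying assumption, and `example.com` is a placeholder.

```python
from html.parser import HTMLParser


class IndexabilityParser(HTMLParser):
    """Collect canonical links and the meta robots directive from raw HTML."""

    def __init__(self) -> None:
        super().__init__()
        self.canonicals: list[str] = []
        self.robots = ""

    def handle_starttag(self, tag, attrs):
        attr_map = {name: (value or "") for name, value in attrs}
        if tag == "link" and "canonical" in attr_map.get("rel", "").lower().split():
            self.canonicals.append(attr_map.get("href", ""))
        elif tag == "meta" and attr_map.get("name", "").lower() == "robots":
            self.robots = attr_map.get("content", "").lower()


def extractability_issues(html: str, intended_url: str) -> list[str]:
    """Return human-readable issues; an empty list means these checks passed."""
    parser = IndexabilityParser()
    parser.feed(html)
    issues = []
    if len(parser.canonicals) != 1:
        issues.append(f"expected 1 canonical, found {len(parser.canonicals)}")
    elif parser.canonicals[0].rstrip("/") != intended_url.rstrip("/"):
        issues.append(f"canonical points to {parser.canonicals[0]}")
    if "noindex" in parser.robots:
        issues.append("page is noindex")
    return issues


page = """<html><head>
<link rel="canonical" href="https://example.com/guide/">
<meta name="robots" content="index, follow">
</head><body>...</body></html>"""
print(extractability_issues(page, "https://example.com/guide"))  # []
```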

A workflow that turns drafts into extraction-ready sources

Treating content trust as a stack is the “what.” Teams still need a repeatable “how.”

A strong workflow looks closer to document processing than classic editorial.

Terzo describes intelligent data extraction as an AI/ML approach that retrieves information from documents and associates it with systems for consistency; see Terzo’s guide to intelligent data extraction. Hyland similarly describes AI document processing capabilities such as classification, extraction, and validation; see Hyland’s overview of AI in document extraction.

For SEO and GEO pages, that maps cleanly to a content workflow:

Step 1: Define what the page must prove

Before writing, specify:

What question is being answered

What claim the page wants to “own”

What the reader should do after they trust it

This prevents the most common failure: a page that tries to answer everything and becomes citeable for nothing.

Step 2: Build a “source map” inside the page

A page becomes more trustworthy when it contains:

A formal definition (linked to an authoritative source when possible)

A short list of criteria or steps

A consistent term set

This is where content teams should think like data extraction teams: reduce interpretation and increase determinism.

Step 3: Add one comparison object

Commercial investigation intent shows up around “trust” because users want to know what to adopt.

Add at least one of:

A decision table (Option A vs Option B)

A “when to use / when not to use” list

A tradeoff block that names costs

Step 4: Instrument before publishing

If content trust is going to be measurable, the analytics plan can’t be an afterthought.

At minimum:

Track clicks and conversions from cited pages

Track citation coverage across a fixed prompt set

Track extraction “health” with periodic audits

Skayle approaches this as a visibility loop where AI mentions guide what gets refreshed next; the measurement angle is outlined in its AI search visibility guidance.

A numbered checklist teams can run on every “money” page

Use this before publishing or refreshing a page that needs citations.

1. Put a direct answer in the first screen (40–80 words).

2. Define 3–6 critical terms and keep them consistent.

3. Add one list that can be extracted as steps or criteria.

4. Add one table that compares options or states requirements.

5. Remove synonyms in headings (use one phrase per concept).

6. Link to 2–4 supporting pages that reinforce the same definitions.

7. Add schema where it clarifies page type or entities.

8. Verify the canonical and indexation match the intended URL.

9. Add a conversion path that matches the query intent (not a generic CTA).

10. Log the page into a citation monitoring set and set a re-check date.

This looks strict, but it’s how a site becomes “consistently citeable,” not occasionally lucky.

Measuring content trust in 2026: extraction, citation coverage, conversion

Most teams try to measure trust using proxies: “time on page,” “scroll depth,” or vague quality scores. Those can help, but they don’t tell you if AI systems can cite you.

A practical measurement approach uses three layers.

Layer 1: Extraction success (page-level)

This answers: can an AI system pull a clean answer?

Signals to track:

Presence of answer-first blocks across sections

Consistency of term usage (same entity names)

A low “definition drift” count (definitions don’t change page-to-page)

This can be operationalized as a simple audit checklist rather than a numeric score.
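Definition drift can be counted once each page's definition sentence for a term has been collected. This sketch assumes that collection has already happened, either by hand or through a separate extraction step. Definitions that differ only in case, punctuation, or spacing count as identical, and every additional distinct wording counts as one unit of drift. The page paths are hypothetical.

```python
import re


def normalize(sentence: str) -> str:
    """Lowercase, drop punctuation, and collapse whitespace so trivial edits don't count as drift."""
    return " ".join(re.sub(r"[^\w\s]", " ", sentence.lower()).split())


def definition_drift(definitions: dict[str, dict[str, str]]) -> dict[str, int]:
    """Map each term to the number of distinct definitions beyond the first.

    `definitions` maps term -> {page path: definition sentence used on that page}.
    """
    return {
        term: max(len({normalize(text) for text in by_page.values()}) - 1, 0)
        for term, by_page in definitions.items()
    }


definitions = {
    "content trust": {
        "/glossary/content-trust": "Content trust is how reliably AI systems can extract and cite a claim.",
        "/blog/ai-citations": "Content trust is how reliably AI systems can extract and cite a claim",
        "/blog/eeat": "Content trust is another name for brand credibility.",
    },
}
print(definition_drift(definitions))  # {'content trust': 1}
```

A drift of zero per term is the target. Any non-zero count points directly at the pages to reconcile with the canonical definition.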

Layer 2: Citation coverage gap (query-level)

This answers: are you cited on the prompts you care about?

A workable process:

Choose 30–100 prompts that represent your pipeline intent (category, problem, comparison, “how to”)

Record whether your brand is cited, mentioned, or absent

Refresh pages that should win those prompts

Skayle has written about running this kind of gap analysis for citations, including how to turn missing prompts into a publishing queue; see the guidance on citation coverage gaps.
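A citation coverage log can be as simple as one record per prompt per monitoring cycle. This sketch assumes statuses are recorded manually or exported from a monitoring tool (the `PromptCheck` fields are hypothetical). It computes coverage as the share of prompts where the brand is cited. Mention-only and absent prompts feed the refresh queue.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class PromptCheck:
    prompt: str
    status: str       # "cited", "mentioned", or "absent"
    target_page: str  # the page that should win this prompt


def coverage_report(checks: list[PromptCheck]) -> dict:
    """Summarize one monitoring cycle over a fixed prompt set."""
    counts = Counter(check.status for check in checks)
    total = len(checks)
    return {
        "citation_coverage": counts["cited"] / total if total else 0.0,
        "counts": dict(counts),
        # Pages behind any prompt that is not yet cited become the refresh queue.
        "refresh_queue": sorted({c.target_page for c in checks if c.status != "cited"}),
    }


checks = [
    PromptCheck("what is content trust", "cited", "/glossary/content-trust"),
    PromptCheck("content trust vs e-e-a-t", "mentioned", "/blog/eeat"),
    PromptCheck("how to get cited by ai answers", "absent", "/guides/ai-citations"),
    PromptCheck("best ai visibility tools", "cited", "/compare/ai-visibility"),
]
report = coverage_report(checks)
print(report["citation_coverage"], report["refresh_queue"])
# 0.5 ['/blog/eeat', '/guides/ai-citations']
```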

Layer 3: Conversion integrity (click-level)

This answers: do citations produce outcomes?

Track:

Landing page conversion rate from AI-citation traffic vs overall organic

Assisted conversions (AI-cited page → later demo)

Next-step alignment (does the page answer the query, then offer the right action?)

The contrarian point here is important: a citation that doesn’t convert can even be net-negative if it drives unqualified clicks and pollutes your intent signals.
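Comparing AI-citation traffic with overall organic traffic first requires labeling sessions by source. One common approach, assumed here, is to classify landings by referrer hostname. The domain list is illustrative and incomplete. Many AI-originated visits also arrive with no referrer at all, so some AI-driven sessions will be misfiled as organic.

```python
from urllib.parse import urlparse

# Illustrative referrer hosts only; keep your own list current.
AI_REFERRERS = {"chatgpt.com", "perplexity.ai", "gemini.google.com", "copilot.microsoft.com"}


def is_ai_referral(referrer: str) -> bool:
    """True if the referrer's host is (a subdomain of) a listed AI answer product."""
    host = urlparse(referrer).hostname or ""
    return any(host == d or host.endswith("." + d) for d in AI_REFERRERS)


def conversion_rates(sessions: list[tuple[str, bool]]) -> dict[str, float]:
    """sessions: (referrer URL, converted?) pairs, assumed pre-filtered to organic and AI landings."""
    totals = {"ai": [0, 0], "organic": [0, 0]}  # [sessions, conversions]
    for referrer, converted in sessions:
        bucket = "ai" if is_ai_referral(referrer) else "organic"
        totals[bucket][0] += 1
        totals[bucket][1] += int(converted)
    return {name: (conv / n if n else 0.0) for name, (n, conv) in totals.items()}


sessions = [
    ("https://chatgpt.com/", True),
    ("https://www.perplexity.ai/search", False),
    ("https://www.google.com/", True),
    ("https://www.google.com/", False),
    ("https://www.bing.com/", False),
    ("https://duckduckgo.com/", False),
]
print(conversion_rates(sessions))  # {'ai': 0.5, 'organic': 0.25}
```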

A proof-shaped example without fake numbers

A common pattern seen in SaaS teams moving from “rankings-first” to “citations-first” looks like this:

Baseline: pages rank for high-intent terms but are rarely cited in AI answers because definitions and steps are buried in narrative sections.

Intervention: add answer-first paragraphs, a requirements table, and consistent definitions across the cluster; then track citation coverage across a fixed prompt list weekly.

Outcome: citation presence becomes stable and diagnosable (teams can point to specific prompts where they win/lose), and conversion tracking can isolate which cited pages contribute to qualified actions.

Timeframe: improvements in extraction clarity can be shipped in days; citation coverage typically needs several monitoring cycles to confirm.

The key is that “trust” becomes observable. That’s the difference between content that feels high quality and content that is operationally trustworthy.

What Docker Content Trust teaches about “trust” (and why the term is confusing)

The SERP for “content trust” is overloaded because the phrase is heavily used in container security. Teams searching it may be looking for Docker and Notary, not marketing.

That overlap is still useful, because cybersecurity trust is more precise than marketing trust.

What is the purpose of Docker Content Trust?

In container ecosystems, “content trust” generally refers to verifying that a container image came from the expected publisher and wasn’t tampered with between publishing and deployment. The goal is integrity and provenance, not readability.
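The integrity half of that definition is easy to illustrate: content is identified by a cryptographic digest, and any change to the bytes changes the digest. This is a simplified analogy, not how Docker Content Trust is implemented. Provenance also requires a signature over the digest from a trusted publisher key, and that is where Notary comes in.

```python
import hashlib
import hmac


def content_digest(content: bytes) -> str:
    """Return a digest in the "sha256:<hex>" form registries use to identify content."""
    return "sha256:" + hashlib.sha256(content).hexdigest()


def verify_integrity(content: bytes, expected_digest: str) -> bool:
    """True only if the bytes still hash to the digest recorded at publish time."""
    # compare_digest does a constant-time comparison; harmless here, essential for secrets.
    return hmac.compare_digest(content_digest(content), expected_digest)


published = content_digest(b"image manifest bytes")
print(verify_integrity(b"image manifest bytes", published))   # True
print(verify_integrity(b"image manifest bytes!", published))  # False
```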

What is a Docker notary?

Notary is the open-source signing and verification project that Docker Content Trust is built on. It attaches trust metadata to artifacts (like images) so clients can validate them during pull and deploy. Conceptually, it’s the enforcement mechanism that makes “trust” checkable.

How do you enable content trust in an Azure container registry?

In practice, enabling image trust is usually a combination of registry configuration (to support signing metadata) and client workflow (to sign images before pushing, and verify signatures when pulling). The exact steps depend on the registry’s current signing support and the organization’s chosen signing tooling.
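As a sketch of those two halves on Azure, the snippet below only builds the commands rather than running them. On the client side it relies on the Docker CLI's `DOCKER_CONTENT_TRUST=1` environment variable, which makes `docker pull` verify signatures and `docker push` sign images. On the registry side it relies on the Azure CLI's `az acr config content-trust update` command. The registry and image names are placeholders. Because registry signing support changes over time, check the current Azure documentation before adopting this workflow.

```python
import os
import shlex


def trusted_pull(image: str) -> tuple[list[str], dict[str, str]]:
    """Client side: a `docker pull` that verifies signatures via Docker Content Trust."""
    env = dict(os.environ, DOCKER_CONTENT_TRUST="1")  # scoped to this one command
    return ["docker", "pull", image], env


def enable_acr_content_trust(registry_name: str) -> list[str]:
    """Registry side: Azure CLI command that turns on content trust for a registry."""
    return ["az", "acr", "config", "content-trust", "update",
            "--registry", registry_name, "--status", "enabled"]


cmd, env = trusted_pull("myregistry.azurecr.io/app:1.0")
print(shlex.join(cmd))  # docker pull myregistry.azurecr.io/app:1.0
print(shlex.join(enable_acr_content_trust("myregistry")))
# To execute for real: subprocess.run(cmd, env=env, check=True)
```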

What is trust in cybersecurity?

In cybersecurity, trust is the ability to verify identity, integrity, and permissions under adversarial conditions. It’s less about persuasion and more about explicit verification.

The marketing takeaway: make trust checkable

For AI extraction, the parallel is direct:

Signing maps to: stable definitions and explicit claims

Verification maps to: traceable evidence and consistent supporting pages

Policy enforcement maps to: technical controls (canonicals, schema, crawlability)

If “trust” can’t be checked, it becomes subjective, and subjective claims are harder to cite.

Common mistakes that silently destroy content trust

Most content trust failures are not dramatic. They’re operational drift.

Mistake 1: Publishing multiple “source of truth” pages

When several pages try to define the same concept (with slightly different language), the site stops looking authoritative under extraction.

Fix:

Pick one canonical definition page

Make other pages refer to it

Keep the definition stable and updated

Mistake 2: Treating schema as optional polish

In an AI-answer environment, schema can clarify what a page is and what entities it contains. It won’t “force” citations, but it can reduce extraction ambiguity.

Fix:

Add schema where it reduces interpretation (FAQPage, HowTo, Product, Organization, Article—only where accurate)

Validate and keep it consistent across templates

If the goal is citations (not just rich results), the structured data choices should align with conversational extraction patterns; Skayle’s approach is covered in its structured data blueprint and its breakdown of conversational schema fixes.
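FAQPage is one of the schema types listed above, and it fits only when the page's visible content really is a set of questions and answers. This sketch emits schema.org FAQPage JSON-LD from question/answer pairs. It assumes the pairs are copied from text already visible on the page. Valid markup reduces ambiguity but does not guarantee rich results or citations.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Build schema.org FAQPage JSON-LD from (question, answer) pairs shown on the page."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2, ensure_ascii=False)


markup = faq_jsonld([
    ("How is content trust different from E-E-A-T?",
     "E-E-A-T is a broad set of quality considerations. Content trust is narrower: "
     "it's whether AI systems can extract, interpret, and cite your content consistently."),
])
print(f'<script type="application/ld+json">\n{markup}\n</script>')
```

Generating the markup from the same source as the visible FAQ keeps the two from drifting apart, which is the template consistency the fix above asks for.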

Mistake 3: Over-optimizing for “thought leadership” voice

Contrarian, but practical: “smart” writing that avoids repeating terms and refuses to be explicit is often worse for extraction.

Do this instead:

Repeat the exact term you want associated with the concept

State constraints and conditions plainly

Prefer short, testable sentences over rhetorical flourishes

Mistake 4: Measuring trust with proxy engagement metrics only

A page can have high time on page and still be unciteable.

Fix:

Add citation coverage monitoring to your reporting

Tie refresh work to missing prompts, not to gut feeling

If a team is serious about making this measurable, it should treat AI visibility as a first-class reporting surface rather than a quarterly experiment; that’s the point of an AI search visibility workflow.

Mistake 5: Ignoring conversion design once citations improve

Citations change who lands on a page. The page must carry them to the next step without breaking intent.

Fix:

Match the CTA to query stage (definition → guide; comparison → demo; how-to → template)

Put the CTA after the answer block, not before it

Add “next step” modules that keep users within the cluster

FAQ: content trust, AI extraction, and LLM citations

How is content trust different from E-E-A-T?

E-E-A-T is a broad set of quality considerations. Content trust is narrower: it’s whether AI systems can extract, interpret, and cite your content consistently. A page can “sound expert” and still fail extraction if definitions and structure are ambiguous.

What formats increase the chance of being cited in AI answers?

Clear answer paragraphs, structured lists, and tables tend to be easier to extract than long narrative blocks. SEO Hacker specifically notes the value of structure for AI selection in its AI extraction formatting guidance.

What should a SaaS team measure to track content trust?

Measure extraction readiness (answer blocks, definitions, consistency), citation coverage across a fixed prompt set, and conversion outcomes from cited pages. If citation coverage is unmeasured, improvements can’t be repeated.

Does adding more references automatically improve content trust?

Not automatically. References help when they anchor definitions or claims that need verification, but the page still needs clean structure and consistent terminology. A few well-placed attributions (like IBM’s information extraction definition) often do more than a long references dump.

Can AI tools be trusted to extract content perfectly?

Not without oversight. Birmingham City University’s guide summarizes research suggesting that tool quality varies and human involvement is still required for reliability, including a 2025 comparison across 33 papers; see Birmingham City University’s data extraction guide.

What is the fastest way to improve content trust on an existing site?

Start with your highest-intent pages and rewrite the first screen for extraction: one direct answer, stable definitions, and one table or step list. Then connect those pages into a consistent cluster and monitor citation coverage weekly to confirm the change is real.

If improving content trust is on the roadmap, the first step is to make it visible: which prompts cite you, which pages get extracted cleanly, and where citations actually turn into qualified clicks. Skayle is built to connect those signals to publishing and refresh decisions, so teams can measure their AI visibility and systematically close the gaps.