TL;DR

Build an AI search visibility index by locking a stable prompt set, tracking presence, Share of Model (SoM), citation rate, and sentiment, then rolling those into one weighted score. Use the score to drive monthly publishing and refresh decisions tied to citations, clicks, and conversions.

If you’re running SEO in 2026, “rankings went up” isn’t a complete performance story anymore. Your buyers are getting answers from AI surfaces, and your brand either shows up, gets cited, or disappears.

An AI search visibility index is how you stop guessing. It’s how you turn messy, anecdotal “I saw us in ChatGPT once” into a repeatable benchmark you can improve every month.

An AI search visibility index is a single score that tracks how often and how well your brand appears (and gets cited) in AI answers across a fixed set of prompts.

1. Decide what your index is for (and what it isn’t)

Most teams fail here because they treat AI visibility like a new dashboard, not a new funnel.

You’re optimizing for this path:

- Impression (your brand appears in an AI answer)

- Inclusion (you’re actually part of the response, not an afterthought)

- Citation (you’re linked or sourced)

- Click (a real visit happens)

- Conversion (demo, signup, or qualified lead)

If your index doesn’t map to that sequence, it won’t change what your team ships.

Here’s the practical definition I use: AI search visibility measures the frequency, prominence, and sentiment of your brand in AI answers across platforms (think chat-style engines and AI answer layers). That’s consistent with how AI visibility is described in the market, including KnewSearch’s breakdown of AI Search Visibility and why it’s distinct from classic SEO reporting (KnewSearch).

A point of view that will save you months

Don’t start by picking tools. Start by defining the prompts and the decisions you want to make.

Tools are fine. But if your prompt set is unstable and your score isn’t tied to actions (refresh, new page, schema fix, internal links), you’ll end up with a pretty report and no compounding outcomes.

What your index should drive (real decisions)

Your AI search visibility index should answer questions like:

- Which topic areas are we not showing up for (prompt coverage gaps)?

- When we show up, are we cited or just mentioned?

- Which pages (or hubs) should we refresh first to win citations?

- Which competitors are “default cited” sources in our category?

If you want a deeper workflow for turning those gaps into publishing decisions, we’ve mapped it out in our guide to measuring citation gaps.

What your index should not be

- Not a replacement for SEO KPIs. It’s a layer on top.

- Not a one-time audit. It’s a monthly benchmark.

- Not “mentions anywhere on the internet.” You’re measuring AI answer outcomes.

2. Build a prompt set you’ll track for 12 months (yes, 12)

The index lives or dies on whether you track the same prompts consistently.

I’ve seen teams “rotate prompts” every week because it feels productive. It’s not. It destroys your baseline.

Start with 3 prompt buckets (that map to revenue)

You want prompts that reflect buyer intent and category comparisons, not just definitions.

- Problem prompts (pain + “how do I”)

Example: “How do I reduce onboarding churn for a B2B SaaS?”

- Solution prompts (category + evaluation)

Example: “Best onboarding analytics tools for SaaS”

- Brand and competitor prompts (you vs alternatives)

Example: “Skayle alternatives for AI search visibility” (swap in your brand)

This isn’t about keyword volume. It’s about whether AI answers are shaping perception before search clicks happen.

Size your prompt set like an operator

A workable starting point:

- 50 prompts if you’re a small team and need fast signal

- 100–200 prompts for most SaaS SEO/growth teams

- 300+ prompts if you’re running multiple products or verticals

The key is stability. Track the same prompts monthly, then expand in quarterly batches.
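One way to make "stable" checkable is to fingerprint the prompt set each month and compare it to the baseline. This is a minimal sketch, not a prescribed method: the function names and the normalization rule (lowercase, collapsed whitespace) are my own assumptions.

```python
import hashlib


def _normalize(prompts):
    # Lowercase and collapse whitespace so cosmetic edits don't count as changes.
    return {" ".join(p.lower().split()) for p in prompts}


def prompt_set_fingerprint(prompts):
    """Return a short, order-independent hash of a prompt set."""
    joined = "\n".join(sorted(_normalize(prompts)))
    return hashlib.sha256(joined.encode("utf-8")).hexdigest()[:12]


def diff_prompt_sets(baseline, current):
    """List normalized prompts added to or removed from the baseline."""
    base, cur = _normalize(baseline), _normalize(current)
    return {"added": sorted(cur - base), "removed": sorted(base - cur)}


january = [
    "How do I reduce onboarding churn for a B2B SaaS?",
    "Best onboarding analytics tools for SaaS",
]
# Same prompts, different order and casing: should match the baseline.
february = [
    "Best onboarding analytics tools for SaaS",
    "how do I reduce onboarding churn for a B2B SaaS?",
]
# A quarterly expansion: should be flagged as a deliberate change.
march = february + ["Skayle alternatives for AI search visibility"]

print(prompt_set_fingerprint(january) == prompt_set_fingerprint(february))
print(diff_prompt_sets(january, march))
```

If the fingerprint changes outside a planned quarterly batch, treat that month's score as a new baseline rather than a trend point.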

Don’t ignore platform coverage

Different surfaces behave differently. Many AI visibility tools track multiple platforms, including AI answer layers and chat engines; SE Ranking’s overview is a good snapshot of what gets tracked and why platform coverage matters (SE Ranking).

You don’t need to measure everything on day one, but you should decide what “coverage” means for your company:

- Google AI Overviews visibility

- Chat-style engines visibility

- “Comparison” responses vs “how-to” responses

A baseline mistake I made (so you don’t)

I once built a prompt set that was 80% “definition” queries. The index looked great because we had a lot of top-of-funnel educational content.

Then sales asked the obvious question: “Cool. Are we being recommended when buyers ask for tools?”

We weren’t.

Fix: keep 40–60% of your prompt set in solution/comparison territory, even if it’s uncomfortable.
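A quick guard against repeating that mistake is to check the bucket mix before you lock the set. The sketch below assumes each prompt carries a hypothetical `bucket` tag ("problem", "solution", or "brand"), and it counts both solution and brand/competitor prompts as comparison territory, which is my reading rather than a fixed rule.

```python
from collections import Counter


def comparison_share(prompts):
    """Fraction of prompts tagged as solution or brand/competitor."""
    if not prompts:
        return 0.0
    counts = Counter(p["bucket"] for p in prompts)
    # Counter returns 0 for buckets that never appear.
    return (counts["solution"] + counts["brand"]) / len(prompts)


def mix_is_healthy(prompts, floor=0.40):
    """True when comparison-style prompts meet the floor (40% by default)."""
    return comparison_share(prompts) >= floor


# Definition-heavy set, like the one that fooled me: 16 problem, 4 solution.
skewed = [{"bucket": "problem"}] * 16 + [{"bucket": "solution"}] * 4
# Rebalanced set: 10 problem, 7 solution, 3 brand.
balanced = (
    [{"bucket": "problem"}] * 10
    + [{"bucket": "solution"}] * 7
    + [{"bucket": "brand"}] * 3
)

print(comparison_share(skewed), mix_is_healthy(skewed))
print(comparison_share(balanced), mix_is_healthy(balanced))
```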

3. Pick metrics that don’t lie: presence, SoM, citation rate, sentiment

A good index isn’t a random blend of metrics. It’s a deliberate set of signals that represent the funnel.

The market is converging on a few core metrics. KnewSearch calls out Share of Model (SoM), citation rate, sentiment, and platform coverage as foundational for internal benchmarks (KnewSearch). Visiblie’s guide also reinforces prompt-level metrics like brand mention rate, recommendation rate, and share of voice as the practical building blocks (Visiblie).

Here’s what I recommend tracking first.

Presence rate (coverage)

Presence rate = the percentage of tracked prompts where you appear in the AI answer.

It’s basic, but it’s the quickest gut-check. The Rank Masters describes presence rate exactly this way for audits and baseline setup (The Rank Masters).

What to do with it:

- Low presence on a topic area usually means you’re missing content depth, entity clarity, or authority signals.

- High presence but low citation rate means you’re “in the mix” but not trusted enough to be sourced.
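Presence rate is easy to compute once each prompt has a present/not-present label. Here is a rough sketch that also splits it by topic area, since that is where the gaps show up. The record fields (`topic`, `present`) and the sample data are illustrative assumptions.

```python
from collections import defaultdict


def presence_rate(results):
    """Percentage of tracked prompts where the brand appears in the answer."""
    if not results:
        return 0.0
    return 100.0 * sum(r["present"] for r in results) / len(results)


def presence_by_topic(results):
    """Presence rate per topic area, to expose coverage gaps."""
    grouped = defaultdict(list)
    for r in results:
        grouped[r["topic"]].append(r)
    return {topic: presence_rate(rows) for topic, rows in grouped.items()}


# Hypothetical month of labels: (topic, brand present in the answer?)
labels = [
    ("onboarding", True), ("onboarding", True),
    ("onboarding", True), ("onboarding", False),
    ("tool comparisons", True), ("tool comparisons", False),
    ("tool comparisons", False), ("tool comparisons", False),
]
results = [{"topic": t, "present": p} for t, p in labels]

print(presence_rate(results))      # overall coverage
print(presence_by_topic(results))  # where the gaps are
```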

Share of Model (SoM) and Share of Voice (competitive position)

This is where the index becomes a competitive instrument, not just a vanity metric.

- SoM (Share of Model): how much of the AI answer space you occupy versus competitors.

- Share of Voice: share of mentions versus rivals for a topic.

You can treat SoM as the AI equivalent of “impression share.” It tells you if you’re becoming the default answer.
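Vendors define SoM in different ways, so treat the following as one simple proxy rather than the canonical formula. It computes share of voice as each brand's share of all tracked-brand mentions, counting a brand at most once per answer. The brand names and the input shape (a list of mentioned brands per answer) are assumptions.

```python
from collections import Counter


def share_of_voice(answers, brands):
    """Each brand's share of all tracked-brand mentions, as a percentage."""
    counts = Counter()
    for mentioned in answers:
        for brand in set(mentioned):  # count a brand once per answer
            if brand in brands:
                counts[brand] += 1
    total = sum(counts.values())
    if total == 0:
        return {b: 0.0 for b in brands}
    return {b: 100.0 * counts[b] / total for b in brands}


# One entry per captured answer: the brands it mentioned.
answers = [
    ["Us", "RivalA"],
    ["RivalA", "RivalB"],
    ["RivalA"],
    ["Us", "RivalA", "RivalB"],
    [],  # answer that named no tracked brand
]

print(share_of_voice(answers, ["Us", "RivalA", "RivalB"]))
```

Run it per topic cluster, not just overall. A brand can look fine in aggregate and still be absent from the comparison prompts that matter.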

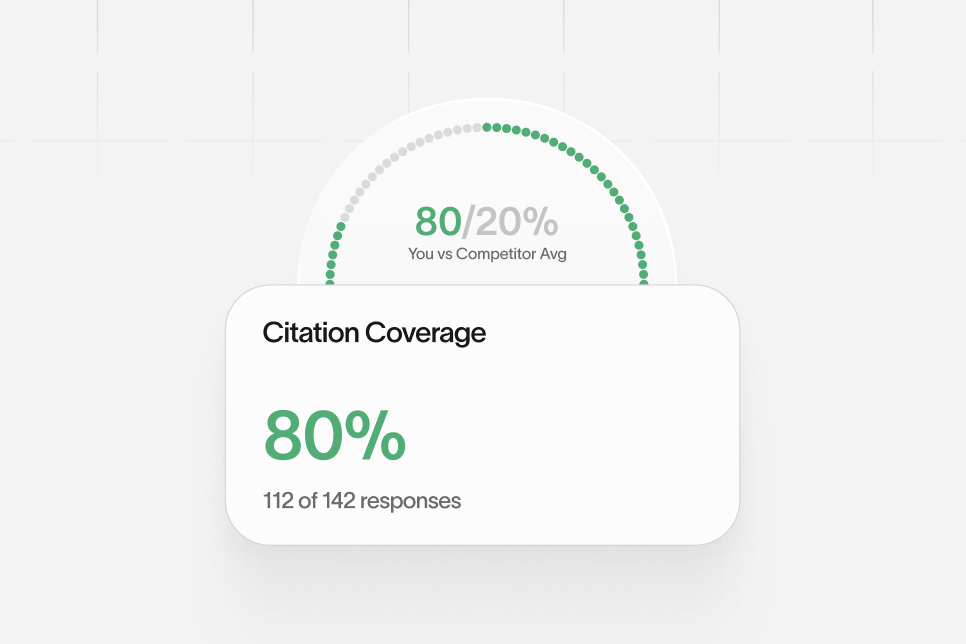

Citation rate (the scarce resource)

Citation rate = the percentage of prompts where your brand is cited with a source link.

Why it matters: AI answers often cite only a small set of sources. KnewSearch notes that typical AI responses cite 2–7 sources, which makes citation eligibility a real bottleneck (KnewSearch).
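Here is a small sketch of the calculation, using the three outcome labels from the monthly checklist (not present / present / cited). The second number, "cited when present", is an extra diagnostic I find useful and not a standard metric. The label strings are assumptions.

```python
def citation_metrics(outcomes):
    """Citation rate over all prompts, plus citation rate among appearances."""
    total = len(outcomes)
    cited = outcomes.count("cited")
    # A cited answer also counts as an appearance.
    present = cited + outcomes.count("present")
    return {
        "citation_rate": 100.0 * cited / total if total else 0.0,
        "cited_when_present": 100.0 * cited / present if present else 0.0,
    }


# Hypothetical month: 10 prompts, 2 cited, 6 mentioned only, 2 absent.
outcomes = ["cited"] * 2 + ["present"] * 6 + ["not_present"] * 2

print(citation_metrics(outcomes))
```

A low "cited when present" number says the model knows you exist but sources someone else, which points at extractability and credibility rather than coverage.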

If you want to win, you need to build pages that are:

- Extractable (clean headings, direct answers)

- Structured (schema where it fits)

- Credible (clear authorship, definitions, unique insights)

If you’re serious about getting cited (not just mentioned), pair this with a systematic approach to fixing citation gaps.

Sentiment and “authority framing”

This is the metric teams skip because it feels squishy. It’s not.

Search Engine Land’s guide on measuring brand visibility in AI search discusses sentiment, citation share, and even composite ideas like an AI Reputation Index (Search Engine Land).

You don’t need perfect NLP. You need consistent labeling.

A simple approach:

- Positive: recommended, trusted, “best for X”

- Neutral: mentioned as an option

- Negative: flagged as risky, outdated, “not ideal for X”

This is how you keep “visibility” from masking reputation issues.
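To keep labeling consistent, it helps to restrict labels to a fixed set and spot-check agreement between two reviewers. This is a minimal sketch under those assumptions. The plain percent-agreement check is my own suggestion, not something the cited guides prescribe.

```python
from collections import Counter
from enum import Enum


class Sentiment(Enum):
    POSITIVE = "positive"
    NEUTRAL = "neutral"
    NEGATIVE = "negative"


def sentiment_breakdown(labels):
    """Percentage of answers per label; unknown labels raise ValueError."""
    counts = Counter(Sentiment(label) for label in labels)
    total = sum(counts.values())
    return {s.value: (100.0 * counts[s] / total if total else 0.0) for s in Sentiment}


def agreement_rate(labels_a, labels_b):
    """Percentage of answers where two reviewers chose the same label."""
    if len(labels_a) != len(labels_b):
        raise ValueError("both reviewers must label the same answers")
    if not labels_a:
        return 0.0
    matches = sum(a == b for a, b in zip(labels_a, labels_b))
    return 100.0 * matches / len(labels_a)


reviewer_a = ["positive", "neutral", "neutral", "negative"]
reviewer_b = ["positive", "neutral", "positive", "negative"]

print(sentiment_breakdown(reviewer_a))
print(agreement_rate(reviewer_a, reviewer_b))
```

If agreement is low, tighten the label definitions before you give sentiment any weight in the index.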

4. Turn the metrics into one AI search visibility index score (without overfitting)

If you don’t ship a single score, your org won’t pay attention. But if you ship a score with bad math, it’s worse than no score.

The trick: build a score that’s simple enough to explain in 30 seconds, but structured enough to diagnose.

The Coverage-to-Conversion Index (the model I use)

I use a 4-part model that mirrors the funnel:

- Coverage (presence rate)

- Competitiveness (SoM / share of voice)

- Credibility (citation rate)

- Conversion readiness (click + on-site conversion rate from those visits)

You can weight these differently depending on your stage:

- Early stage: heavier on coverage and credibility

- Competitive categories: heavier on competitiveness

- Mature demand capture: heavier on conversion readiness

A simple scoring approach that stays debuggable

Don’t do black-box scoring. You’ll never trust it.

Instead:

- Normalize each metric to a 0–100 scale.

- Apply weights (that you can explain).

- Keep the raw sub-scores visible.

Example weighting (starter):

- Coverage: 30%

- Competitiveness: 25%

- Credibility: 30%

- Conversion readiness: 15%

That gives you a single “index score” per month, plus four levers you can pull.
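The arithmetic is deliberately simple. Here is a sketch using the starter weights above, assuming each sub-score has already been normalized to 0–100. The sub-score values are made up for illustration.

```python
# Starter weights from the example above; they must sum to 1.
WEIGHTS = {
    "coverage": 0.30,
    "competitiveness": 0.25,
    "credibility": 0.30,
    "conversion_readiness": 0.15,
}


def index_score(sub_scores, weights=WEIGHTS):
    """Weighted sum of 0-100 sub-scores, rounded to one decimal place."""
    if abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1")
    for name in weights:
        if not 0 <= sub_scores[name] <= 100:
            raise ValueError(f"{name} must be normalized to 0-100")
    return round(sum(weights[n] * sub_scores[n] for n in weights), 1)


# Hypothetical month: decent coverage, weak citations.
month = {
    "coverage": 60,
    "competitiveness": 40,
    "credibility": 20,
    "conversion_readiness": 30,
}

# 0.30*60 + 0.25*40 + 0.30*20 + 0.15*30 = 18 + 10 + 6 + 4.5 = 38.5
print(index_score(month))
```

Report the four sub-scores next to the total. A 38.5 driven by weak credibility calls for different work than a 38.5 driven by weak coverage.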

Use external benchmarks carefully

Benchmarks are useful for calibration, not for panic.

Semrush publishes an AI Visibility Index with monthly benchmarks and a Source Diversity metric (unique sources per prompt) (Semrush). Even if you don’t match their methodology, it’s a useful reference point for how the market is formalizing these measurements.

Also, Semrush’s material claims LLM-driven searchers can be 4.4x more likely to convert than traditional searchers (Semrush). Treat that as directional, not universal, but it’s a strong business-case argument for why the index should exist.

Proof block (illustrative, but realistic)

Here’s an illustrative example of how this plays out in a SaaS org.

- Baseline (Month 0): You track 120 prompts. Presence is decent in “how-to” prompts, but you’re missing in “best tools” prompts. Citation rate is low.

- Intervention (Weeks 1–6): You refresh 10 hub pages to answer comparison intent directly, add clearer definitions, tighten internal linking, and add structured data where it’s appropriate.

- Expected outcome (Month 2): Presence increases first, then citations follow as pages become easier to extract and trust. Clicks lag slightly behind citations.

- Timeframe: 6–10 weeks to see reliable movement, assuming you measure monthly and keep prompts stable.

The important part isn’t the exact numbers. It’s that you’re measuring the funnel: coverage → citations → clicks → conversions.

If you want the technical layer that makes pages extractable and citation-eligible, this pairs well with our breakdown of technical fixes for AI visibility.

The contrarian stance (and the tradeoff)

Don’t build your index from web analytics and then try to “map it to AI.” Build it from prompts and citations first.

Tradeoff: you’ll spend more time on manual prompt work early. The payoff is you’ll actually know what the AI engines are saying, not just what traffic happened to arrive.

5. Make the index drive publishing, refreshes, and on-page conversion

This is where most AI visibility projects quietly die.

The index becomes a report. A few people look at it. Nothing changes.

So you need an operating rhythm that turns scores into output.

The action checklist I’d run this month

Use this as a monthly cycle. It’s boring on purpose.

- Lock your prompt set (no changes this month).

- Capture answers and label outcomes: not present / present / cited.

- Tag each prompt to a topic cluster and a target page (or “no page exists”).

- Pick 5–10 prompts with high business value and low citation rate.

- Decide the fix type:

  - Refresh an existing page

  - Publish a missing page

  - Improve internal links to a hub

  - Add/repair structured data

  - Clarify entity definitions and comparisons

- Ship the changes within 2 weeks.

- Re-measure monthly, not daily.

That’s it. Do it for 90 days and you’ll have a story the exec team can understand.
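The "pick 5–10 prompts" step can be a simple sort. The sketch below assumes each tracked prompt has a hypothetical `business_value` rating (1–5, set by you) and a per-prompt `citation_rate` (for example, the share of tracked platforms that cited you). The 25% cutoff and the page paths are placeholders.

```python
def pick_fix_candidates(prompts, max_citation_rate=25.0, limit=10):
    """Return up to `limit` low-citation prompts, highest business value first."""
    low_cited = [p for p in prompts if p["citation_rate"] <= max_citation_rate]
    # Sort by value (descending), then by citation rate (ascending).
    ranked = sorted(low_cited, key=lambda p: (-p["business_value"], p["citation_rate"]))
    return ranked[:limit]


tracked = [
    {"prompt": "Best onboarding analytics tools for SaaS",
     "business_value": 5, "citation_rate": 0.0, "target_page": None},
    {"prompt": "How do I reduce onboarding churn for a B2B SaaS?",
     "business_value": 3, "citation_rate": 50.0, "target_page": "/guides/onboarding-churn"},
    {"prompt": "Skayle alternatives for AI search visibility",
     "business_value": 5, "citation_rate": 25.0, "target_page": "/compare"},
    {"prompt": "What is onboarding analytics?",
     "business_value": 2, "citation_rate": 0.0, "target_page": "/glossary/onboarding-analytics"},
]

for p in pick_fix_candidates(tracked, limit=2):
    print(p["prompt"], "->", p["target_page"] or "no page exists")
```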

What to fix when presence is low

Low presence usually points to one of these:

- You don’t have a page that answers the prompt’s intent

- You have the page, but it’s not extractable (buried answers, messy structure)

- Your content lacks entity clarity (the model can’t confidently attribute the claim)

This is where topic cluster design matters. AI systems “see” context in chunks, not just keywords, which is why hub-and-spoke architecture tends to outperform isolated posts. If you’re rebuilding clusters this year, our guide on topic cluster architecture is the blueprint we use.

What to fix when you’re present but not cited

This is a common pattern. You show up as a mention, but the citation goes to a competitor or a generic source.

Fixes that reliably move citation rate:

- Add direct answer blocks (40–80 words) high on the page

- Add clear definitions (one sentence, no hedging)

- Include comparison tables that don’t dodge tradeoffs

- Use structured data where it fits your content type

If you’re working on structured data as a lever for GEO/AEO, our structured data blueprint goes deep on what to implement and how to validate it.

Don’t forget conversion readiness

A citation is not a conversion.

Your index should include at least one conversion readiness signal, even if it’s rough:

- Visits from citation clicks (where measurable)

- Landing page conversion rate for those visits

- Assisted conversions (if you have the attribution maturity)

If you can’t measure “AI click referrals” cleanly yet, be honest about it. Track what you can, then build instrumentation over time.
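As a rough starting point, you can filter visit records by referrer and compute a conversion rate for that slice. Everything here is an assumption: the visit record shape, the host list, and whether a given AI surface passes a referrer at all. When there is nothing measurable, the function returns `None` instead of pretending the rate is zero.

```python
# Illustrative host list only; check what actually shows up in your own analytics.
AI_REFERRER_HOSTS = {"chatgpt.com", "perplexity.ai"}


def conversion_readiness(visits, ai_hosts=AI_REFERRER_HOSTS):
    """Visits, conversions, and conversion rate for AI-referred traffic."""
    ai_visits = [v for v in visits if v["referrer_host"] in ai_hosts]
    conversions = sum(v["converted"] for v in ai_visits)
    # None means "not measurable yet", which is different from 0%.
    rate = 100.0 * conversions / len(ai_visits) if ai_visits else None
    return {"visits": len(ai_visits), "conversions": conversions, "conversion_rate": rate}


visits = [
    {"referrer_host": "chatgpt.com", "converted": True},
    {"referrer_host": "chatgpt.com", "converted": False},
    {"referrer_host": "perplexity.ai", "converted": False},
    {"referrer_host": "perplexity.ai", "converted": False},
    {"referrer_host": "google.com", "converted": True},
    {"referrer_host": "", "converted": False},  # direct or stripped referrer
]

print(conversion_readiness(visits))
```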

Common mistakes that tank the index (and morale)

- Changing prompts midstream so nothing is comparable.

- Over-weighting sentiment before you have consistent labeling.

- Treating mentions as wins when citations are the real leverage.

- Optimizing only for definitions because it’s easier.

- Ignoring on-page conversion and then wondering why “visibility” didn’t turn into pipeline.

6. FAQ: how teams keep the index honest (and useful)

This is the part everyone asks about once you actually try to run the index for 2–3 months.

How often should we update the AI search visibility index?

Monthly is the sweet spot for most SaaS teams. Weekly measurement is noisy and usually leads to prompt churn and reactive decisions.

What’s the minimum viable index for a small team?

Start with 50 prompts, measure presence and citation rate, and add SoM once you have a stable baseline. Visiblie’s prompt-level approach is a good sanity check for keeping the metrics practical (Visiblie).

Should we track Google AI Overviews separately from chat-style engines?

Yes, if you can. Platform behavior differs, and your fixes may differ too. If you need a technical view of what matters for AI answer inclusion, Data-Mania’s 2026 steps are a useful checklist for optimization themes (Data-Mania).

How do we benchmark competitors without copying them?

Use share of voice and citation share to identify where competitors are “default cited,” then map that back to what they publish (and how it’s structured). Search Engine Land’s framing on citation share is a good reference for this competitive lens (Search Engine Land).

What if we get mentioned but the sentiment is negative?

Treat that as a content and positioning problem, not a measurement problem. Pull the exact prompts and answers, then ship pages that clarify tradeoffs, address misconceptions, and create a more accurate “authority framing.”

When does an index become a distraction?

When it stops producing a monthly list of pages to refresh, publish, or fix. If the index doesn’t change output, it’s just a report.

One more business-case reminder: KnewSearch cites that 47% of B2B research now includes AI search, which is a strong signal that this isn’t an “experimental” channel anymore (KnewSearch).

If you want to pressure-test your current measurement and see where you’re missing citations, start by measuring your current AI footprint, then decide what your first 50 prompts should be. If you want, we can also show you how teams operationalize this inside a system built for AI search visibility so the index turns into shipped work.

What would you rather know one month from now: “traffic was up,” or exactly which prompts you’re losing citations on—and what page you’ll fix next?

References

- KnewSearch — What Is AI Search Visibility? Complete Guide [2026]

- Search Engine Land — How to Measure Brand Visibility in AI Search

- Visiblie — What Are AI Visibility Metrics? Brand Guide (2026)

- The Rank Masters — Best AI Search Visibility Checkers & Audit Tools 2026

- Semrush — AI Visibility Index

- SE Ranking — 8 best AI visibility tracking tools explained and compared

- Data-Mania — 5 Steps to Optimize for AI Search Ranking in 2026