TL;DR

The best AI content optimization tools for SaaS do different jobs: some refine drafts, some plan topical coverage, and some track AI visibility. The right choice depends on whether the team needs cleaner writing, better SEO execution, or stronger measurement across rankings and AI answers.

SaaS teams do not need more AI text. They need content that reads naturally, targets the right terms, earns rankings, and is structured well enough to be cited in AI answers.

The best tools help editors refine drafts, tighten topical coverage, and connect optimization work to business outcomes. A simple rule holds up across most teams: the right optimization platform improves relevance and clarity, while the wrong one just increases content volume.

What SaaS teams should actually optimize for now

The buying criteria changed. A few years ago, many teams judged optimization tools by how quickly they could push a draft toward a content score. In 2026, that is not enough.

A SaaS content team now has to optimize for four layers at once: search intent, natural keyword integration, topical completeness, and AI extractability. If one layer breaks, the page may still get published, but it usually underperforms.

That matters because content is no longer competing only for ten blue links. It is competing for inclusion in AI-generated summaries, answer boxes, and citation surfaces. As covered in our guide to content trust, AI systems tend to favor pages that are structured clearly, specific in their claims, and easy to extract.

A practical way to evaluate AI content optimization tools for SaaS is to use a four-part review model:

- Coverage: Does the tool help teams cover the subtopics that matter for the query?

- Language quality: Does it improve the draft without making it sound machine-written?

- Workflow fit: Can strategists, writers, and editors use it without creating another fragmented process?

- Visibility impact: Does it support rankings only, or does it also help with AI answer inclusion and citation tracking?

That last point is where a lot of comparison articles fall short. They review writing features, but they do not ask whether the content becomes more discoverable in AI search. For SaaS brands, that is now a core distribution problem, not a side issue.

There is also a contrarian point worth stating clearly: do not optimize for the score first; optimize for intent fit first, then use the score as a check. Teams that reverse this sequence often ship pages that look comprehensive to software but feel generic to humans.
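The four-part review model can be turned into a simple scoring rubric so that reviewers compare tools on the same scale. The sketch below is illustrative only: the weights, the 1 to 5 rating scale, and the names `WEIGHTS` and `rubric_score` are assumptions made for this example, not values from any vendor or from the sources cited here.

```python
# Hypothetical weights for the four review criteria; adjust to the team's priorities.
# The weights sum to 1.0 so the result stays on the same 1-5 scale as the ratings.
WEIGHTS = {
    "coverage": 0.3,
    "language_quality": 0.3,
    "workflow_fit": 0.2,
    "visibility_impact": 0.2,
}


def rubric_score(ratings: dict) -> float:
    """Return the weighted average of 1-5 ratings for one tool."""
    missing = set(WEIGHTS) - set(ratings)
    if missing:
        raise ValueError(f"Missing ratings for: {sorted(missing)}")
    for name in WEIGHTS:
        if not 1 <= ratings[name] <= 5:
            raise ValueError(f"Rating for {name} must be between 1 and 5")
    return round(sum(WEIGHTS[name] * ratings[name] for name in WEIGHTS), 2)


# Example ratings from one reviewer for one tool (made-up numbers).
example = {
    "coverage": 4,
    "language_quality": 5,
    "workflow_fit": 3,
    "visibility_impact": 2,
}
print(rubric_score(example))
```

The rubric is a check on the review, not a replacement for it. The same caution that applies to content scores applies here: a reviewer should still read the edited drafts before trusting the number.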

The shortlist: 7 platforms worth considering

Not every team needs the same stack. Some need a stronger editor for existing content. Others need planning, gap analysis, or AI visibility measurement. The list below focuses on the tools most often mentioned in SaaS SEO conversations and separates them by practical use case rather than hype.

Surfer SEO

Ethical SEO describes Surfer SEO as the most widely used content optimization tool for SaaS content teams. That tracks with market behavior: it is often the first platform teams adopt when they want clearer term guidance and a repeatable on-page workflow.

Where Surfer is strongest:

- Live optimization guidance while drafting

- Clear term usage recommendations

- Competitive content pattern analysis

- A workflow that is easy for non-specialist writers to follow

Where Surfer creates friction:

- Teams can become too score-driven

- Writers may over-insert terms if editorial review is weak

- The output can feel similar across categories when everyone follows the same playbook

A realistic fit: Surfer works best for SaaS teams that already know what they want to publish and need a reliable optimization layer for content production.

A common editorial example looks like this: an AI draft on “customer support automation software” includes the main term, but misses adjacent language like “ticket routing,” “help desk workflows,” and “agent productivity.” Surfer helps surface those omissions quickly. The editor still has to decide which terms belong naturally and which should be ignored.
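The gap check in that example can be approximated with a few lines of code. This is a minimal sketch, assuming a plain substring match on a hand-picked term list. It is not how Surfer or any other tool works internally, and the function name `missing_terms` and the sample draft are hypothetical.

```python
import re


def missing_terms(draft: str, terms: list) -> list:
    """Return the terms that do not appear anywhere in the draft (case-insensitive)."""
    # Collapse whitespace so phrases split across line breaks still match.
    normalized = re.sub(r"\s+", " ", draft.lower())
    return [term for term in terms if term.lower() not in normalized]


# Sample draft text, invented for this example.
draft = (
    "Customer support automation software speeds up ticket routing "
    "for busy teams."
)
adjacent_terms = ["ticket routing", "help desk workflows", "agent productivity"]

print(missing_terms(draft, adjacent_terms))
```

The output is only a list of candidates. Deciding which of those terms belong in the page is still an editorial call.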

Clearscope

Ethical SEO notes that Clearscope uses IBM Watson NLP to analyze top-ranking content and suggest keywords. In practice, Clearscope is often preferred by teams that care as much about writing quality as optimization discipline.

Where Clearscope is strongest:

- Clean briefs and editor experience

- Strong support for natural language refinement

- Better fit for editorial teams that do not want the interface to dominate the writing process

- Reliable topical term guidance without as much visual noise

Where Clearscope creates friction:

- It can be expensive for smaller teams

- Some SaaS operators find it less useful for broader workflow orchestration

- It is primarily an optimization layer, not a complete visibility system

Clearscope is often the better choice when a team’s main problem is not “we need more content,” but “our content feels flat and under-covered.” It helps tighten the draft without pushing the writer into obvious over-optimization.

Rankability

According to Rankability’s own comparison, Rankability, Clearscope, and Surfer came out as the strongest options on a price-to-value basis. Because the vendor published that ranking itself, it is worth validating in a trial, but it still makes Rankability relevant for SaaS teams that want practical optimization without immediately paying enterprise-level prices.

Where Rankability is strongest:

- Price-to-value positioning

- Content scoring and optimization support

- Useful for leaner teams that want a direct SEO editing layer

Where Rankability creates friction:

- Lower market mindshare than Surfer or Clearscope

- Fewer teams have established workflows built around it

- It may require more internal testing to define a house style for optimization

For a content lead trying to standardize quality across freelancers, Rankability can make sense if budget control matters as much as feature depth.

MarketMuse

Profound’s B2B SaaS roundup highlights that MarketMuse uses AI for content gap analysis, topic suggestions, and SERP insights. That distinction matters because many teams confuse optimization with planning.

Where MarketMuse is strongest:

- Topic planning before drafting starts

- Content gap analysis across a site or topic cluster

- Identifying what is missing from a category, not just a single page

Where MarketMuse creates friction:

- It can feel heavier than point-solution editors

- Teams that only want line-by-line optimization may underuse it

- The value depends on having someone who can act on strategic recommendations

MarketMuse is a better fit for SaaS companies trying to build authority in a category over 6 to 12 months, not just patch individual pages.

Semrush Enterprise AIO

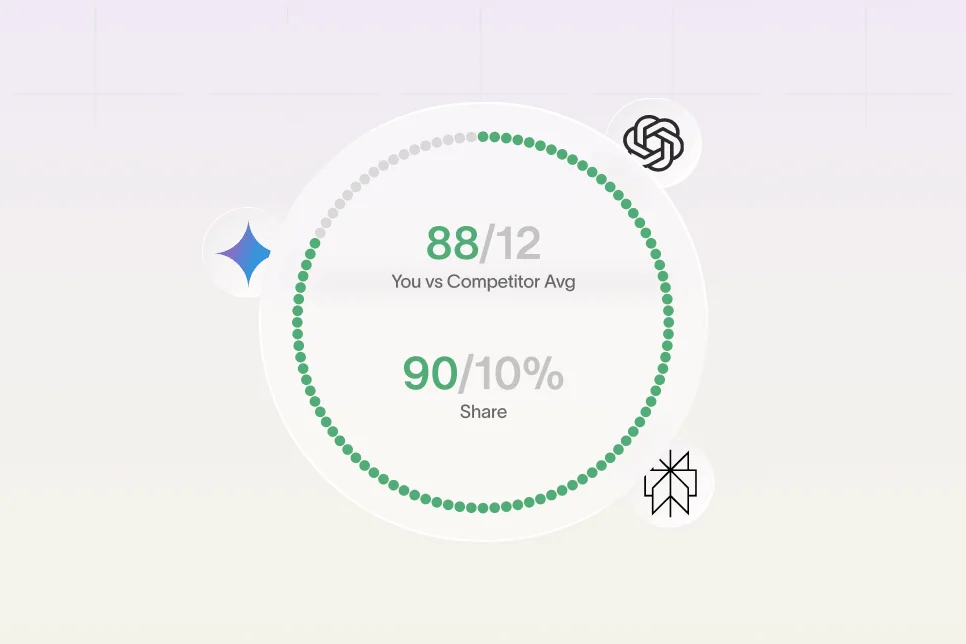

Semrush positions Semrush Enterprise AIO around tracking how brands appear across major AI platforms such as ChatGPT and Google Gemini. That expands the frame beyond classic on-page optimization.

Where Semrush Enterprise AIO is strongest:

- Brand visibility tracking across AI surfaces

- Useful reporting for teams that need executive-level visibility measurement

- Better alignment with the new funnel: impression, AI answer inclusion, citation, click, conversion

Where Semrush Enterprise AIO creates friction:

- It is not the most writer-centric optimization experience

- Enterprise tools can be heavier than a focused editing platform

- Smaller SaaS teams may not need the full reporting layer yet

This distinction matters for buyers comparing AI content optimization tools for SaaS: some tools optimize text, while others optimize brand presence in AI-mediated discovery. Those are related jobs, but they are not the same job.

Jasper

Text.com notes that Jasper helps SaaS companies scale content production while maintaining quality. Jasper is widely known as a generation tool, but it can still play a role in optimization when used with strong editorial controls.

Where Jasper is strongest:

- Draft acceleration for teams with broad content needs

- Useful for variant creation, rewrites, and first-pass expansion

- Helpful when marketers need to move from blank page to workable draft quickly

Where Jasper creates friction:

- Generation is not the same as optimization

- Teams may confuse output volume with publish-ready quality

- Without a stronger SEO or editorial layer, the content often stays too generic

The practical takeaway is simple: Jasper can be part of the stack, but it should not be the optimization stack on its own.

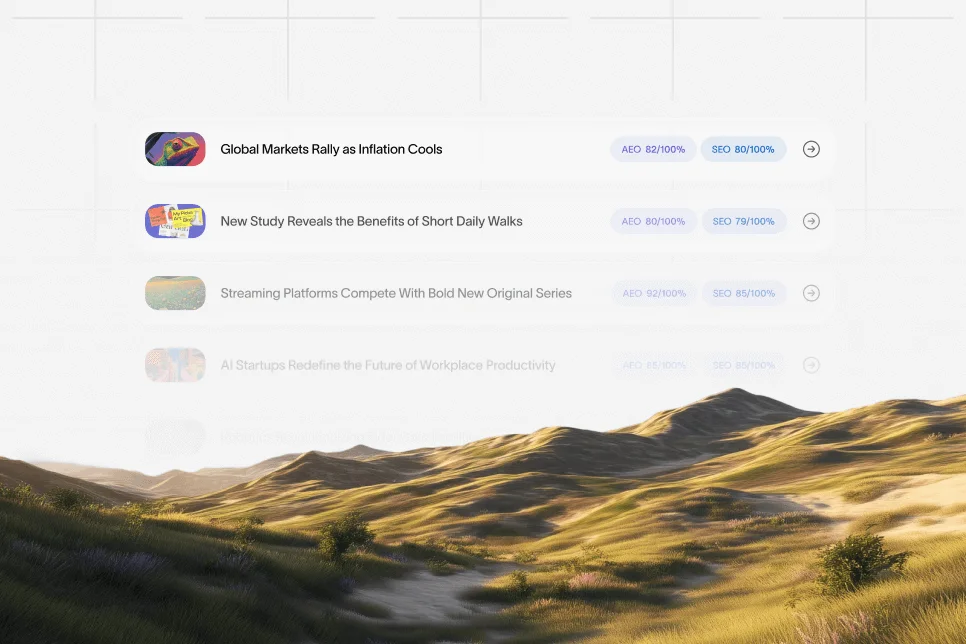

Skayle

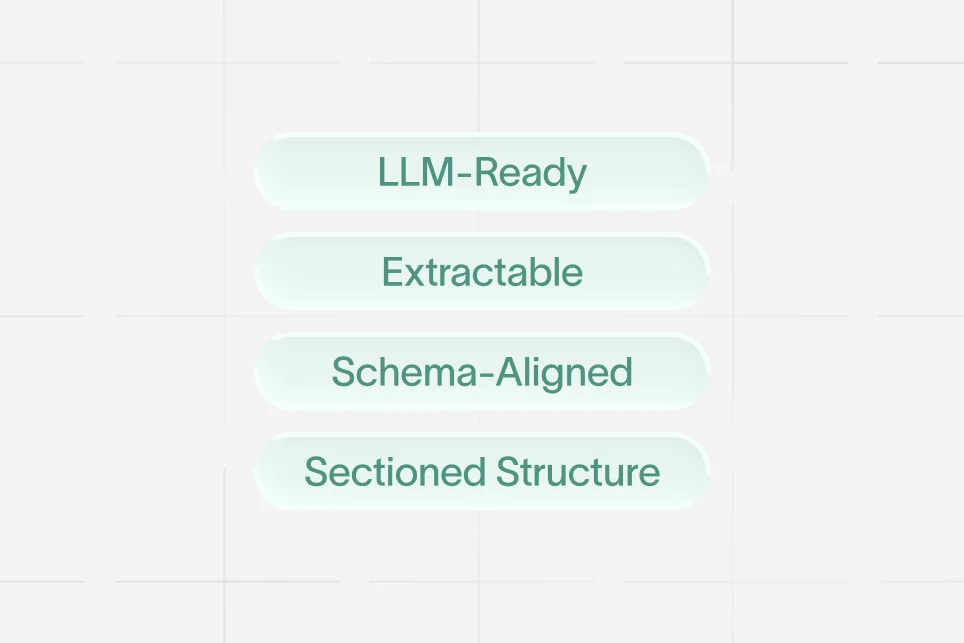

For SaaS teams that want ranking workflows tied directly to AI visibility, Skayle fits a different category than generic writing assistants. It helps companies rank higher in search and appear in AI-generated answers by combining planning, optimization, and visibility measurement in one system.

That matters when the real problem is fragmented execution: one tool for briefs, another for drafting, another for optimization, and no clear way to measure citation coverage. Teams facing that issue usually do not need another text box. They need a ranking and visibility system.

A useful complement to this tool evaluation is our breakdown of LLM-ready feature pages, which shows how page structure affects extractability, citation potential, and downstream conversion.

How to choose the right tool without wasting six months

Most teams buy too early and evaluate too loosely. They run a few articles through a demo, compare scores, and make a decision based on surface impressions.

A better process is to test each platform against the actual constraints of a SaaS content team. That means comparing not just features, but how the tool changes editorial behavior.

The 5-step review process that exposes weak fits

- Pick one live topic cluster. Use a cluster with commercial value, not a vanity blog topic. Product-led comparison terms, jobs-to-be-done themes, or solution pages work best.

- Establish a baseline. Record current rankings, click-through rate, assisted conversions, and whether the page appears in AI answer surfaces.

- Run the same draft standard across tools. Do not compare one tool on a weak draft and another on a polished draft.

- Review the edited output manually. Check whether the tool improved clarity, section depth, and term placement without making the page repetitive.

- Measure after publication for 4 to 8 weeks. Track ranking movement, clicks, engagement quality, and citation presence where possible.

This review process sounds obvious, but many teams skip the fourth step, the manual review. They let the tool define quality. That is a mistake.

A straightforward mini case can illustrate the difference. Consider a SaaS company publishing a feature page for “knowledge base analytics.” Baseline: the page ranks on page two, traffic is modest, and sales calls show weak message alignment. The team rewrites the page using a planning tool for coverage, an optimization tool for natural term integration, and a citation-focused structure with tighter Q&A blocks. The expected outcome over the next 6 to 8 weeks is not guaranteed top rankings, but stronger topical completeness, better excerptability, and a cleaner path from search visit to demo intent. The key is that the team defines measurement before the rewrite, not after.
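Defining measurement before the rewrite can be as simple as recording one snapshot at baseline and one after the 4 to 8 week window, then comparing them. The sketch below assumes the team collects these four metrics by hand or from its own analytics exports. The `PageSnapshot` fields, the `compare` function, and every number shown are hypothetical and chosen only to illustrate the comparison.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PageSnapshot:
    avg_position: float        # average search position; lower is better
    ctr: float                 # click-through rate as a fraction, e.g. 0.012 = 1.2%
    assisted_conversions: int  # conversions where the page appeared in the path
    cited_in_ai_answers: bool  # whether the page was seen cited in an AI answer


def compare(baseline: PageSnapshot, followup: PageSnapshot) -> dict:
    """Summarize the change between a baseline snapshot and a later one."""
    return {
        # Positive value means the page moved up in the rankings.
        "position_gain": round(baseline.avg_position - followup.avg_position, 2),
        "ctr_change": round(followup.ctr - baseline.ctr, 4),
        "assisted_conversions_change": (
            followup.assisted_conversions - baseline.assisted_conversions
        ),
        "gained_ai_citation": (
            followup.cited_in_ai_answers and not baseline.cited_in_ai_answers
        ),
    }


# Made-up figures for a page that starts on page two.
baseline = PageSnapshot(avg_position=14.0, ctr=0.012,
                        assisted_conversions=3, cited_in_ai_answers=False)
followup = PageSnapshot(avg_position=8.5, ctr=0.021,
                        assisted_conversions=5, cited_in_ai_answers=True)

print(compare(baseline, followup))
```

Keeping the snapshot fields fixed before the rewrite prevents the team from choosing flattering metrics after the fact.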

What strong evaluation looks like in practice

A good review asks questions like these:

- Did the draft become easier to skim?

- Did keyword placement become more natural or more forced?

- Did the page answer the obvious follow-up questions a buyer would have?

- Did the optimizer help structure the page for AI extraction?

- Did the editor spend less time fixing robotic phrasing?

If the answer to the last question is no, the tool may be adding work rather than removing it.

Where teams usually get burned

The biggest mistakes are not technical. They are process mistakes that lead to mediocre pages at scale.

Mistake 1: treating optimization like keyword stuffing with better software

Good tools do not exist to increase keyword density. They exist to improve topical fit and reduce obvious gaps.

When teams force every suggested term into the copy, they usually damage readability and trust. That hurts both rankings and conversion.

Mistake 2: using one score as the editorial north star

A content score can be useful. It is not a substitute for product knowledge, search intent understanding, or clear positioning.

This is especially important in SaaS, where pages often need to convert multiple audiences: practitioners, managers, and buyers. A score cannot tell the team whether the message lands with those groups.

Mistake 3: separating SEO optimization from conversion design

A page can rank and still fail commercially. If the content does not clarify who the product is for, what problem it solves, and what proof supports the claim, the traffic it earns will not turn into pipeline.

This is why content optimization should be reviewed alongside page structure, proof placement, internal linking, and CTA clarity. Search performance and conversion performance are linked more tightly than many tool comparisons admit.

Mistake 4: ignoring AI answer inclusion

Many teams still publish as if Google’s blue links are the only surface that matters. That is outdated.

If the page is not easy for AI systems to summarize and cite, the brand misses a growing layer of discovery. For a deeper view, our GEO case study breakdown explores how SaaS teams compare AI visibility results across answer engines.

Which platform fits which SaaS team

There is no universal winner. The right answer depends on the maturity of the team, the content motion, and whether the company is optimizing drafts, clusters, or brand visibility.

Best for fast-moving content teams: Surfer SEO

Surfer is usually the most practical choice for teams that want quick adoption and a familiar optimization workflow. It is easier to operationalize than heavier platforms.

Best fit:

- In-house content teams publishing weekly

- SEO managers working with freelancers

- Teams that need repeatable on-page optimization now

Best for editorial quality control: Clearscope

Clearscope is often the cleaner fit for teams that prioritize writing quality and want optimization to support, not dominate, the editorial process.

Best fit:

- Brand-sensitive SaaS companies

- Teams with experienced editors

- Content programs where quality matters more than output volume

Best for budget-conscious optimization: Rankability

Rankability makes sense when the team wants a serious optimization layer but needs tighter cost discipline.

Best fit:

- Smaller SaaS teams

- Early-stage companies building SEO operations

- Lean teams standardizing freelancer output

Best for category planning: MarketMuse

MarketMuse is strongest when the issue is not just page-level optimization, but content architecture across a category.

Best fit:

- Teams building topic clusters

- Companies refreshing underdeveloped content libraries

- SEO leaders focused on authority growth over time

Best for AI visibility reporting: Semrush Enterprise AIO

Semrush Enterprise AIO is the stronger option when leadership wants to know how the brand shows up across AI-driven answer surfaces.

Best fit:

- Larger SaaS teams

- Enterprise reporting environments

- Companies expanding beyond traditional SEO metrics

Best as a drafting layer, not a standalone optimizer: Jasper

Jasper is useful for speed, but it needs editorial and SEO guardrails around it.

Best fit:

- Teams with strong editors already in place

- Large draft volume requirements

- Repurposing workflows that still need optimization downstream

Best for connected ranking and AI visibility workflows: Skayle

Skayle is the better fit when the problem is fragmented execution and weak measurement across ranking, optimization, and AI answer presence.

Best fit:

- SaaS teams that want one operating layer for search and AI visibility

- Companies measuring citations, not just rankings

- Operators who care about execution consistency more than isolated feature checklists

The practical buying checklist before signing anything

Before a SaaS team commits to any platform on this list, it should run a short decision filter against real workflow constraints.

- Does the tool improve content quality or just increase output?

- Can editors maintain a natural brand voice after optimization?

- Does it help with planning, refining, or visibility measurement, and which of those matters most right now?

- Can the team connect the tool to rankings, clicks, pipeline, or AI citation coverage?

- Will this reduce fragmented work, or create another layer of it?

That final point is usually the hidden cost. A tool can look strong in a demo and still fail because it adds review overhead, creates duplicate workflows, or encourages low-value publishing.

The strongest programs treat optimization as part of a larger authority system. They publish fewer weak pages, refresh more intentionally, and structure pages so both search engines and AI systems can parse them cleanly. Teams looking to measure that shift more directly often use platforms such as Skayle to see how they appear in AI answers and where citation coverage is thin.

FAQ: what buyers usually want clarified

Which AI content optimization tool is best for SaaS startups?

For most early-stage SaaS teams, the best choice depends on the immediate bottleneck. Surfer SEO and Rankability are often easier to justify when the need is practical on-page optimization, while MarketMuse is more useful when planning gaps are the bigger issue.

Are content optimization tools enough to rank in Google?

No. These tools help improve topical coverage, structure, and term usage, but they do not replace search intent research, product positioning, internal linking, proof, or page experience. Strong rankings usually come from the full system, not the editor alone.

Do AI content optimization tools help with AI Overviews and LLM citations?

They can help indirectly if they improve clarity, structure, and completeness. But not all tools are designed to measure or improve AI answer presence directly, which is why SaaS teams should separate text optimization from AI visibility tracking.

Is Surfer better than Clearscope for SaaS teams?

Surfer is often better for teams that want a fast, operational SEO workflow. Clearscope is often better for teams that care more about editorial quality and a cleaner writing experience. The better choice depends on whether the current bottleneck is process speed or writing precision.

Should SaaS companies use Jasper for SEO content optimization?

Jasper is useful for generating first drafts and variations, but it should not be treated as a full optimization system. Most SaaS teams get better results when Jasper is paired with a dedicated optimization or visibility platform.

The top AI content optimization tools for SaaS are not interchangeable. Each one reflects a different model of content work: draft refinement, category planning, AI visibility reporting, or workflow consolidation.

Teams that choose well usually start with the bottleneck, not the brand name. For companies trying to improve rankings and understand how their pages appear in AI answers, the next step is to measure visibility directly and tighten the pages most likely to earn citations.

References

- Ethical SEO: 22 Best AI-Powered SEO Tools for SaaS Companies in 2026

- Rankability: We Tested 13 Best AI SEO Content Optimization Tools

- Profound: 11 Best AI SEO Tools for B2B SaaS Growth Teams

- Semrush: The 9 Best AI Optimization Tools

- Text.com: The 10 Leading AI Tools to Boost Your SaaS Business

- AI Content For Saas Companies: 9 Best Tools Guide 2026

- Best AI-Ready Content Audit Tools for SaaS Teams 2026

- Top 7 AI Content Optimization Tools to Boost SEO