TL;DR

Teams looking up how to add E-E-A-T signals to blog posts usually focus on page tweaks, but the real work is operational. Strong E-E-A-T comes from named expertise, first-hand examples, source-backed claims, extractable formatting, and a repeatable review process that supports both rankings and AI citations.

Most teams asking how to add E-E-A-T signals to blog posts are looking for a template tweak. The real answer is broader: E-E-A-T is not something a company inserts into a page once. It is a set of trust signals created through authorship, evidence, transparency, and consistent editorial standards.

A useful rule is simple: E-E-A-T is proven, not declared. In 2026, that matters not only for Google rankings, but also for whether AI systems treat a page as credible enough to cite.

Why E-E-A-T now affects both rankings and citations

The old SEO habit was to treat trust as a soft concept. Publish enough content, add the keyword, and hope the page rises. That model is weaker now because search visibility is being split across classic blue links, AI Overviews, chat assistants, and answer engines.

When a user asks a question in an AI interface, the system is more likely to rely on sources that look attributable, current, and specific. That means the page has to work across a new funnel:

- Impression

- AI answer inclusion

- Citation

- Click

- Conversion

Google does not offer a single E-E-A-T score or tag. As explained in Google Search Central documentation, publishers should self-assess whether content is helpful, reliable, and people-first. That guidance matters because teams often waste time trying to “optimize for E-E-A-T” as if it were a hidden field.

That point is echoed in a Reddit discussion on implementing EEAT, where practitioners note that E-E-A-T is a model used to evaluate publishers, not a technical switch inside a post.

The practical implication is straightforward. Companies should stop asking whether a page mentions expertise and start asking whether it demonstrates experience in a way that a human reviewer, a search engine, and an AI system can all recognize.

This is also where AI workflows break down. Teams use AI to draft faster, but if the workflow strips out named authors, first-hand examples, supporting evidence, and editorial review, the output becomes efficient but generic. Generic pages may index. They are less likely to become trusted citation sources.

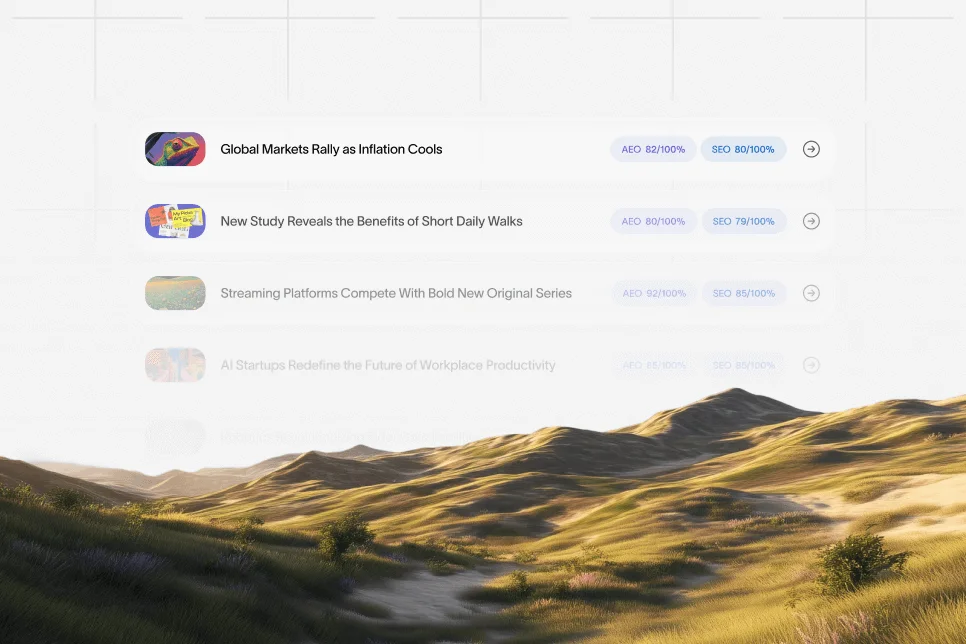

For SaaS teams building authority at scale, this is now a workflow problem more than a writing problem. A platform like Skayle fits naturally here because it helps companies build pages designed to rank in search and appear in AI-generated answers, rather than treating content generation as the only goal. That same logic shows up in our guide to content trust, where the focus is extractable, evidence-backed pages instead of volume alone.

The evidence ladder that makes trust visible

A useful way to think about how to add E-E-A-T signals to blog posts is through a simple model: the evidence ladder. The higher a page climbs on this ladder, the easier it is for both readers and AI systems to trust it.

The four levels are:

- Claims

- Attribution

- Demonstration

- Verification

A claim is a plain statement with no support. Attribution adds a source, author, or expert. Demonstration shows first-hand knowledge through examples, screenshots, scenarios, or decisions. Verification adds external proof, documentation, dates, or measurable outcomes.

Most weak AI-assisted blog posts stall at level one or two. They state opinions confidently, maybe cite a source, but they do not show real-world use. Strong pages move into demonstration and verification.
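For teams that tag claims during editing, the ladder can be sketched as a small classifier. This is a minimal sketch under stated assumptions: the signal names (`source`, `screenshot`, `date`, and so on) are hypothetical tags an editor might attach to a claim, and the code assumes each rung builds on the one below, so a claim with a screenshot but no attribution still counts as a bare claim.

```python
from enum import IntEnum


class EvidenceLevel(IntEnum):
    """The four rungs of the evidence ladder, from weakest to strongest."""
    CLAIM = 1
    ATTRIBUTION = 2
    DEMONSTRATION = 3
    VERIFICATION = 4


# Hypothetical tags an editor might attach to a single claim.
# Each rung lists the signals that satisfy it.
RUNGS = [
    (EvidenceLevel.ATTRIBUTION, {"source", "author", "expert"}),
    (EvidenceLevel.DEMONSTRATION, {"example", "screenshot", "scenario", "decision"}),
    (EvidenceLevel.VERIFICATION, {"documentation", "date", "measured_outcome"}),
]


def ladder_level(signals: set) -> EvidenceLevel:
    """Return the highest rung reached, climbing one rung at a time.

    Assumption: a rung only counts if every rung below it is also satisfied.
    """
    level = EvidenceLevel.CLAIM
    for rung, satisfying_signals in RUNGS:
        if signals & satisfying_signals:
            level = rung
        else:
            break  # the ladder stalls at the first unsatisfied rung
    return level


if __name__ == "__main__":
    print(ladder_level({"author", "example"}).name)  # DEMONSTRATION
```

Requiring every lower rung is a deliberate design choice. It mirrors the idea of a post "stalling" partway up, though a team could relax it and score each rung independently.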

Here is the contrarian view that matters: Do not try to make AI-written content sound more authoritative. Make it more accountable. The first approach creates polished fluff. The second creates content that can earn trust.

A simple editorial check can expose the difference:

- Can a reader tell who is speaking?

- Can a reader tell where the claim came from?

- Can a reader see what happened in practice?

- Can a reviewer verify that the information is current?

If the answer is no to three or four of those questions, the page is not lacking polish. It is lacking trust infrastructure.
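The four questions and the three-out-of-four threshold can be encoded as a simple pre-publish gate. This is a minimal sketch that assumes a human reviewer records each answer as a yes or no. The class and field names are illustrative and do not come from any particular tool.

```python
from dataclasses import dataclass


@dataclass
class EditorialCheck:
    """Yes/no answers to the four pre-publish questions for one page."""
    speaker_identified: bool    # Can a reader tell who is speaking?
    claim_source_visible: bool  # Can a reader tell where the claim came from?
    practice_shown: bool        # Can a reader see what happened in practice?
    currency_verifiable: bool   # Can a reviewer verify the information is current?

    def no_answers(self) -> int:
        """Count how many of the four questions were answered 'no'."""
        answers = [
            self.speaker_identified,
            self.claim_source_visible,
            self.practice_shown,
            self.currency_verifiable,
        ]
        return answers.count(False)

    def lacks_trust_infrastructure(self) -> bool:
        """Threshold from the text: 'no' to three or four of the questions."""
        return self.no_answers() >= 3


if __name__ == "__main__":
    page = EditorialCheck(True, False, False, False)
    print(page.no_answers(), page.lacks_trust_infrastructure())  # 3 True
```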

One internal process SaaS teams can use is a pre-publish review built on four parts: baseline, intervention, expected outcome, and timeframe. For example:

- Baseline: traffic is flat and pages are cited inconsistently in AI answers

- Intervention: add author identity, expert review, screenshots, source attributions, and update stamps across a priority cluster

- Expected outcome: stronger engagement signals, better conversion confidence, and improved citation consistency

- Timeframe: measure over 6 to 8 weeks using ranking, click-through, and AI citation tracking

That is not a promised result. It is a measurement plan. When hard benchmarks are unavailable, that level of instrumentation is more credible than invented percentages.

1. Put a real expert or operator on the page

The fastest way to weaken trust is anonymous publishing. If a post is supposed to guide a buyer, operator, or marketer through a decision, readers should know who produced it and why that person is qualified to speak.

According to Reading Room, author profiles and expert biographies are practical ways to strengthen E-E-A-T signals. This matters because authorship is one of the clearest ways to make expertise legible.

A useful author block includes:

- Full name

- Current role

- Relevant domain experience

- Short explanation of what the person has done

- Links to other credible work, where appropriate

- Date of review or update

This should not read like a corporate bio stuffed with titles. It should answer a basic question: why should anyone trust this person on this topic?

What strong authorship looks like in practice

A weak line says, “Written by the content team.” A strong line says, “Reviewed by a SaaS SEO lead who has managed content audits, refreshes, and internal linking programs for B2B software sites.”

That difference is small in word count but large in trust. One names a function. The other names experience.

For companies using AI workflows, the fix is operational. Require every article brief to include the subject-matter source, reviewing expert, and final accountable editor before drafting begins. If those roles are absent, the draft should not ship.
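In an AI-assisted pipeline, that rule could be expressed as a check on the brief before any drafting starts. This is a minimal sketch. `ArticleBrief`, `missing_roles`, and `can_start_drafting` are hypothetical names, and it assumes the brief is stored as simple text fields.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class ArticleBrief:
    """A content brief with the three accountability roles named up front."""
    topic: str
    subject_matter_source: Optional[str] = None
    reviewing_expert: Optional[str] = None
    accountable_editor: Optional[str] = None


REQUIRED_ROLES = ("subject_matter_source", "reviewing_expert", "accountable_editor")


def missing_roles(brief: ArticleBrief) -> List[str]:
    """List the accountability roles that are empty or unset."""
    return [
        role for role in REQUIRED_ROLES
        if not (getattr(brief, role) or "").strip()
    ]


def can_start_drafting(brief: ArticleBrief) -> bool:
    """Drafting, and therefore shipping, is blocked until every role is filled."""
    return not missing_roles(brief)


if __name__ == "__main__":
    brief = ArticleBrief(
        topic="Content refresh workflows",
        subject_matter_source="Interview notes from the SEO lead",
        reviewing_expert="SEO lead",
    )
    print(missing_roles(brief))  # ['accountable_editor']
```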

2. Replace abstract advice with first-hand experience

Many posts mention experience without actually showing it. That is one of the main reasons AI-generated content feels interchangeable. It paraphrases consensus instead of surfacing real use.

As noted by The Digi Crawl, optimizing for E-E-A-T helps signal that content was created by qualified people with real-world knowledge. The emphasis on real-world knowledge is the key point.

First-hand experience can show up in several forms:

- A before-and-after process change

- A tradeoff the team made and why

- A screenshot or annotated example

- A specific workflow step that only appears after doing the work

- A failed attempt and what changed next

Here is a concrete example. Instead of saying, “Update old content regularly,” a stronger version says, “A content team may review declining posts every 90 days, starting with pages that lost rankings for commercial-intent terms, then revise examples, source dates, and internal links before rewriting the entire page.”

That sentence feels more credible because it contains sequence, judgment, and context.
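The sequence in that example, declining posts on a 90-day cycle with commercial-intent losses first, can be sketched as a review queue. This is a minimal sketch. It assumes a post becomes due once 90 days have passed since its last review, and that the `declining` and `lost_commercial_rankings` flags are hypothetical fields populated from the team's own analytics.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List

REVIEW_INTERVAL = timedelta(days=90)  # the 90-day cadence from the example above


@dataclass
class Post:
    url: str
    last_reviewed: date
    declining: bool                 # hypothetical flag set from analytics
    lost_commercial_rankings: bool  # hypothetical flag: lost rankings on commercial-intent terms


def review_queue(posts: List[Post], today: date) -> List[Post]:
    """Return declining posts due for review, commercial-intent losses first."""
    due = [
        p for p in posts
        if p.declining and today - p.last_reviewed >= REVIEW_INTERVAL
    ]
    # False sorts before True, so posts that lost commercial rankings come first;
    # ties are broken by the oldest review date.
    return sorted(due, key=lambda p: (not p.lost_commercial_rankings, p.last_reviewed))
```

Each post in the returned queue would then get the lighter pass the example describes, covering examples, source dates, and internal links, before anyone commits to a full rewrite.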

A practical rule for AI-assisted drafting

Every draft should include at least three first-hand inserts before publication:

- One observed workflow detail

- One decision tradeoff

- One specific example that could only come from practice

Without those inserts, the draft may be clean but still weak on experience.

This also improves AI answer visibility. Systems that summarize the web tend to favor pages with distinct, attributable detail over pages that restate generic definitions. That same principle applies to feature and solution pages, which is why our blueprint for LLM-ready pages focuses on extractable structure and concrete proof.
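The three-insert rule is easy to check automatically if editors tag each first-hand detail as they add it. This is a minimal sketch. The insert-type labels and function names are hypothetical, and it assumes the inserts arrive as (type, text) pairs.

```python
from typing import Iterable, List, Tuple

# The three insert types the rule requires; the labels are illustrative.
REQUIRED_INSERT_TYPES = ("workflow_detail", "tradeoff", "practice_example")


def missing_first_hand_inserts(inserts: Iterable[Tuple[str, str]]) -> List[str]:
    """Return required insert types that have no non-empty text in the draft.

    `inserts` is a sequence of (insert_type, text) pairs tagged by the editor.
    """
    present = {kind for kind, text in inserts if text.strip()}
    return [kind for kind in REQUIRED_INSERT_TYPES if kind not in present]


def ready_for_publication(inserts: Iterable[Tuple[str, str]]) -> bool:
    """A draft passes only when all three insert types are present."""
    return not missing_first_hand_inserts(inserts)


if __name__ == "__main__":
    draft = [("workflow_detail", "We triage declining pages before rewriting."), ("tradeoff", "")]
    print(missing_first_hand_inserts(draft))  # ['tradeoff', 'practice_example']
```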

3. Show where claims came from and when they were checked

Trust decays when the reader cannot tell whether a claim is sourced, current, or copied. That is especially risky on topics like SEO, AI visibility, content strategy, and platform behavior, where guidance changes quickly.

The fix is not to overload the article with citations. The fix is to attach sources where a claim needs support and to make freshness visible.

A solid sourcing standard includes:

- Inline attribution for factual claims

- Source names that readers recognize

- Date-aware references when timing matters

- A visible last-reviewed or updated date

- Distinction between sourced facts and editorial opinion

This is where many teams overdo internal confidence and underdo external proof. Saying “everyone knows” or “most experts agree” is weak unless the post can point to something verifiable.

Google Search Central documentation is a strong example of the kind of source that should sit behind conceptual claims about helpful content and trust. For practical, tactical interpretation, sources such as PageOptimizer Pro and Linkifi can support implementation ideas, as long as the article clearly separates official guidance from industry interpretation.

What to add to the workflow

The simplest operational change is a source field in the content brief. Every section that contains a factual statement should either include an approved source or be rewritten as general advice.

That reduces two common failures:

- unsupported assertions that weaken credibility

- accidental recycling of outdated guidance

For teams publishing at scale, that discipline matters more than word count. Search engines and AI systems do not reward content for sounding complete. They reward content that looks dependable.
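A source field in the brief can be checked with very little code. This is a minimal sketch. It assumes each brief section records whether it makes a factual claim and lists its sources by name, and that the team keeps an allowlist of approved sources. `BriefSection` and `sections_needing_attention` are hypothetical names.

```python
from dataclasses import dataclass, field
from typing import List, Set


@dataclass
class BriefSection:
    heading: str
    has_factual_claim: bool
    sources: List[str] = field(default_factory=list)


def sections_needing_attention(sections: List[BriefSection], approved: Set[str]) -> List[str]:
    """Headings of factual sections that cite no approved source.

    Each flagged section should either get an approved source or be
    rewritten as general advice.
    """
    return [
        s.heading
        for s in sections
        if s.has_factual_claim and not any(src in approved for src in s.sources)
    ]


if __name__ == "__main__":
    approved = {"Google Search Central"}  # hypothetical allowlist
    sections = [
        BriefSection("Why trust matters", True, ["Google Search Central"]),
        BriefSection("Benchmarks", True, ["Unnamed blog"]),
        BriefSection("Our view", False),
    ]
    print(sections_needing_attention(sections, approved))  # ['Benchmarks']
```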

4. Build expert review into the workflow, not after it

Many companies bolt review on at the end. The draft is done, someone glances at it, and the page goes live. That process usually misses the part that actually matters: whether a knowledgeable person shaped the article before the claims hardened into copy.

A better model is to route expertise into the article at three points:

- Brief creation

- Mid-draft review

- Final approval

This does not require a famous name on every post. It requires accountable input from someone close to the work.

A realistic review pattern for lean teams

For a SaaS company writing about onboarding analytics, product-qualified leads, or technical SEO workflows, the reviewer might be a product marketer, solutions consultant, growth lead, or SEO manager. That person does not need to rewrite the post. They need to validate examples, remove overstatements, and add nuance.

This matters for user trust as much as search trust. TechWyse connects E-E-A-T with user experience, arguing that trust-oriented content also improves visitor engagement. That is a practical point: pages that feel generic do not just rank worse; they also convert worse.

A mini case pattern can make this review process visible.

- Baseline: a blog post explains AI search visibility in broad terms but includes no examples from actual reporting or auditing work

- Intervention: a reviewer adds the exact metrics the team would monitor, clarifies what cannot be measured directly, and inserts a note on how often the page should be refreshed

- Expected outcome: stronger clarity, lower bounce from mismatched expectations, and more confidence from evaluators and buyers

- Timeframe: monitor engagement and assisted conversions over the next publishing cycle

Again, the value is not in pretending a guaranteed lift exists. The value is in making expertise auditable.

5. Add proof elements that AI summaries can extract

A page can be trustworthy and still hard to cite. That happens when the content buries proof in long paragraphs, vague generalities, or stylistic filler. AI systems need clean, extractable elements.

Useful proof elements include:

- concise definitions

- numbered lists

- direct answer paragraphs

- tables or comparisons

- mini case examples

- dated references

- clear FAQ language

This is where answer formatting and trust formatting overlap. A quotable 50-word paragraph that defines a concept cleanly is easier for an AI system to lift into a response. A scannable list of evidence types makes it easier to summarize accurately.

For companies building around AI visibility, that should shape editorial design. The page is no longer built only for a click from Google. It is also built to be cited before the click happens.

The format that tends to travel best

The most reusable pattern is:

- one sentence definition

- one short explanation

- one practical example

- one limit or caveat

For example: E-E-A-T signals are the visible proof that content comes from real expertise and experience. They help readers and search systems evaluate credibility. An expert bio, a sourced claim, and a first-hand example are stronger than generic advice. A polished article without attribution is still weak on trust.

That format is compact, answer-ready, and hard to misread.

It also supports discoverability across AI surfaces, a theme closely tied to the practices covered in our GEO case studies for teams comparing performance across answer engines.

6. Stop publishing polished generic content

This is the mistake that deserves the strongest warning. Many AI workflows improve grammar, structure, and speed while stripping out the only things that make a page trustworthy.

The wrong instinct is: make the article sound smarter. The better instinct is: make the article easier to verify.

Generic content usually has these symptoms:

- broad claims with no ownership

- no named expert or reviewer

- no source attribution

- no original examples

- no visible update logic

- no opinion about tradeoffs

By contrast, trustworthy content may be less elegant but more specific. It names what happened. It states limits. It shows where information came from.

Common mistakes that quietly weaken E-E-A-T

- Using AI to write from zero without source constraints. This increases the chance of bland or unsupported statements.

- Hiding the author behind the brand. Brand authority helps, but readers still want accountable expertise.

- Treating review as proofreading. Grammar checks do not validate subject matter.

- Adding credentials without examples. A title alone does not prove experience.

- Refreshing dates without refreshing substance. Updating the stamp while leaving stale guidance in place weakens trust.

- Writing for rankings only. Pages that rank but fail to earn citations or conversions are underperforming in the current search environment.

A smart editorial standard is to fail any post that cannot answer these four questions:

- Who is responsible for this advice?

- What real experience informs it?

- Which claims are supported externally?

- When was it last checked?

If those answers are weak, the problem is not optimization. The problem is editorial credibility.

7. Turn E-E-A-T into a repeatable workflow checklist

The strongest teams do not handle E-E-A-T as a final polish layer. They build it into planning, drafting, review, publishing, and refresh cycles.

A practical checklist for how to add E-E-A-T signals to blog posts looks like this:

- Assign a named author or accountable reviewer before drafting starts.

- Define the real-world source of insight for the article.

- Add at least three first-hand details during drafting.

- Attach approved sources to every factual or dated claim.

- Include one direct-answer paragraph near the top of the page.

- Use scannable proof elements such as lists, examples, and FAQs.

- Add an updated date and refresh trigger for future review.

How teams should measure whether this is working

Because there is no official E-E-A-T score, teams need proxy metrics. A useful measurement setup includes:

- baseline ranking positions for the target topic cluster

- click-through rate from search

- on-page engagement or scroll depth

- assisted conversions from content

- AI answer inclusion or citation tracking where available

- refresh cadence and time-to-update

This is one of the places where modern visibility platforms become useful. Instead of treating SEO reporting and AI visibility as separate tasks, the better approach is to connect authority signals, content updates, and citation coverage inside one operating system. That is the problem Skayle is built to address: helping SaaS teams rank in search and appear in AI answers with measurable visibility, not just publish faster.

The long-term gain is operational consistency. Once the checklist becomes part of the brief, review process, and refresh schedule, trust stops depending on one unusually careful editor.
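Because the measurement relies on proxies rather than an official score, the simplest useful report compares a baseline snapshot against a later one. This is a minimal sketch covering three of the proxies listed above. The field names are hypothetical, and it assumes the AI citation counts come from whatever citation tracking the team already uses.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass
class Snapshot:
    """Proxy metrics for one topic cluster at a point in time."""
    avg_position: float      # mean ranking position (lower is better)
    impressions: int         # search impressions
    clicks: int              # search clicks
    ai_prompts_checked: int  # hypothetical: tracked prompts sampled in AI answer engines
    ai_citations: int        # hypothetical: how many of those answers cited the page

    @property
    def ctr(self) -> float:
        return self.clicks / self.impressions if self.impressions else 0.0

    @property
    def citation_rate(self) -> float:
        return self.ai_citations / self.ai_prompts_checked if self.ai_prompts_checked else 0.0


def compare(baseline: Snapshot, current: Snapshot) -> Dict[str, float]:
    """Positive values mean the metric moved in the right direction."""
    return {
        "position_gain": baseline.avg_position - current.avg_position,
        "ctr_change": current.ctr - baseline.ctr,
        "citation_rate_change": current.citation_rate - baseline.citation_rate,
    }
```

Engagement, assisted conversions, and time-to-update could be added the same way. They are left out here to keep the sketch short.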

Questions teams ask when trying to add E-E-A-T signals

Is E-E-A-T a ranking factor that can be optimized directly?

Not in the way teams often mean it. E-E-A-T is better understood as a framework for evaluating quality and trust rather than a field a publisher can switch on. That is why evidence, attribution, and experience matter more than isolated page tweaks.

Can AI-written content still demonstrate E-E-A-T?

Yes, but only if the workflow adds real expertise, review, sourcing, and first-hand detail. AI can help structure and accelerate drafts, but it cannot manufacture genuine experience on its own.

What is the easiest E-E-A-T fix for existing blog posts?

For many teams, the fastest gains come from adding accountable authorship, expert review notes, stronger source attribution, and more concrete examples. Those changes usually improve both reader trust and extractability for AI summaries.

Do author bios alone solve the problem?

No. Author bios help, but they are only one signal. A page also needs evidence in the body itself, including examples, sourced claims, clear reasoning, and visible freshness.

How often should E-E-A-T-focused content be refreshed?

That depends on the topic, but high-change areas like SEO, AI visibility, and software workflows should have defined review triggers. A practical model is to review priority pages quarterly and also refresh them when major product, market, or platform changes affect the advice.

What trustworthy content looks like in 2026

The companies that win on this topic are not the ones producing the most content. They are the ones making trust visible at scale. That means named expertise, source-backed claims, first-hand examples, extractable structure, and a refresh process that keeps pages credible after publication.

Teams that treat E-E-A-T as a line item will keep publishing clean but forgettable content. Teams that treat it as workflow design will build pages that rank, get cited, and convert.

For companies that want to measure how often their content appears in AI answers and where trust signals are still thin, Skayle offers a practical path: measure your AI visibility, understand your citation coverage, and turn trust from a vague concept into an operating discipline.

References

- Google Search Central documentation: Creating Helpful, Reliable, People-First Content

- Reading Room: 4 tactical ways to improve your SEO signals through EEAT

- Linkifi: How To Improve EEAT Signals On Your Website in 8 Ways

- The Digi Crawl: How to Create an E-E-A-T Optimized Blog Post

- PageOptimizer Pro: How To Build E-E-A-T?

- TechWyse: EEAT and User Experience

- Reddit: How to implement EEAT in my blogs?

- How to Add EEAT Signal in Content & Site?