TL;DR

Fragmented SEO content workflows create hidden costs through handoffs, delays, errors, and weak refresh discipline. Ranking systems reduce those losses by connecting planning, publishing, updates, and visibility measurement into one repeatable operating model.

Most SEO content problems do not start with writing quality. They start with disconnected workflows, unclear ownership, and too many handoffs between research, briefs, drafting, optimization, publishing, and reporting.

For SaaS teams in 2026, the real comparison is no longer agency versus in-house. It is fragmented manual execution versus ranking systems built to produce, maintain, and measure content at scale.

Why fragmented SEO content workflows get expensive fast

An SEO content workflow is the set of steps a team uses to research, produce, publish, update, and measure content. When those steps live across separate people, tools, and spreadsheets, cost rises long before the finance team sees it.

The short version: fragmented SEO content workflows turn every article into a small project instead of a repeatable asset.

That distinction matters because search does not reward effort. It rewards coverage, consistency, freshness, authority, and, increasingly, extractable structure that AI systems can cite.

In a manual model, one team handles keyword research, another writes briefs, freelancers draft, editors revise, someone adds internal links, another person uploads into a CMS, and reporting sits in a separate dashboard. Every handoff creates delay. Every delay increases the chance that search intent shifts, the brief gets diluted, or the published page misses basic ranking elements.

According to monday.com’s SEO workflow guide, structured workflows improve collaboration, speed, and performance because they reduce process friction across teams. That sounds operational, but it is also financial. If five people touch one page in sequence, the page cost includes waiting time, coordination time, revision loops, and missed publishing windows.

This is where many SaaS companies underestimate the problem. They measure content cost as writer fees or agency retainers. They do not measure the hidden overhead:

- Time spent moving work between people

- Rework caused by weak briefs

- Missed internal linking opportunities

- Inconsistent on-page optimization

- Slow refresh cycles for aging articles

- Reporting that explains outcomes but does not improve the next cycle
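The hidden overhead listed above can be made visible with simple arithmetic. The sketch below is illustrative only: the `Step` fields, the hourly rate, and every number in the example are assumptions, not benchmarks from any of the cited sources. It separates hands-on work (the cost most teams already count) from coordination time and idle waiting between handoffs.

```python
from dataclasses import dataclass


@dataclass
class Step:
    """One stage a page passes through. All figures are hypothetical."""
    name: str
    hands_on_hours: float      # time someone actively works on the page
    coordination_hours: float  # messages, meetings, re-briefing at the handoff
    wait_days: float           # calendar days the page sits idle before this step


def page_cost(steps: list[Step], hourly_rate: float) -> dict[str, float]:
    """Split the labor cost of one page into visible and hidden parts."""
    visible = sum(s.hands_on_hours for s in steps) * hourly_rate
    hidden = sum(s.coordination_hours for s in steps) * hourly_rate
    return {
        "visible_cost": visible,
        "hidden_cost": hidden,
        "total_cost": visible + hidden,
        "lead_time_days": sum(s.wait_days for s in steps),
    }


# Hypothetical five-step sequence; replace with numbers the team actually tracks.
steps = [
    Step("keyword research", 2.0, 0.5, 0.0),
    Step("brief", 1.5, 0.5, 2.0),
    Step("draft", 5.0, 1.0, 3.0),
    Step("edit and optimize", 2.0, 1.0, 4.0),
    Step("publish", 1.0, 0.5, 3.0),
]
result = page_cost(steps, hourly_rate=60.0)
print(result)
```

Even with made-up inputs, the split is the useful part: coordination hours and lead time rarely appear in a writer invoice or agency retainer, yet both grow with every additional handoff.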

A manual process can still work for a small content program. It breaks when a company needs cluster coverage, regular refreshes, product-led pages, and visibility in AI answers at the same time.

The real comparison: project-based content ops vs ranking infrastructure

The cleanest way to compare manual and automated SEO content workflows is to look at the operating model, not just the tools.

Manual content ops

Manual content ops usually look flexible from the outside. In practice, they are heavily project-based.

Typical pattern:

- A marketer identifies target keywords.

- A brief is assembled in a document.

- A writer drafts in a separate tool.

- An editor adds revisions.

- SEO checks happen late in the process.

- Publishing happens in the CMS.

- Performance is reviewed weeks later.

- Refreshes happen only when rankings drop enough to get attention.

This model has some advantages:

- Strong human judgment on a small number of high-stakes pages

- Easier to customize tone for complex topics

- Familiar process for teams used to agencies or freelancers

It also has predictable weaknesses:

- Throughput depends on people availability

- Quality varies by writer and editor

- Institutional knowledge lives in scattered docs

- Measurement is delayed and disconnected

- Refreshes are reactive, not systematic

A Reddit thread on standard SEO workflow steps reflects the baseline most teams still follow: keyword mapping, long-tail discovery, topic planning, and calendar management. Those steps are useful, but on their own they do not create a durable ranking system.

Ranking systems

A ranking system treats content as infrastructure. The goal is not to publish faster for its own sake. The goal is to reduce execution variance while improving search coverage, update speed, and citation readiness.

In this model, the workflow connects:

- Research and search intent mapping

- Repeatable brief structure

- On-page optimization requirements

- Internal link logic

- Publishing standards

- Refresh triggers

- Reporting tied to action

The practical difference is simple: a ranking system reduces the number of decisions a team needs to remake for every page.

That does not eliminate human input. It makes human input more valuable by using it at the right moments: topic prioritization, editorial judgment, product positioning, and quality control.

For SaaS teams trying to rank in Google and appear in AI-generated answers, this operating model matters more than the old debate over whether to hire another writer or retain another agency.

Where the hidden costs show up inside manual workflows

The cost of fragmented SEO rarely appears in one line item. It appears across delay, inconsistency, and lost compounding value.

Delays between steps

In manual workflows, the brief often waits for approvals, the draft waits for edits, the page waits for upload, and the refresh waits until performance drops. None of those delays is dramatic on its own. Together, they slow the rate at which a site builds authority.

Search performance compounds when content clusters ship in sequence and reinforce each other through internal links, shared entities, and clear topical coverage. Fragmentation breaks that compounding effect.

Error rates rise when review is scattered

As documented in SEObotAI’s workflow automation best practices, automation reduces manual errors and improves consistency across larger SEO efforts. That matters because many ranking losses are not strategic failures. They are execution misses: wrong headings, thin intros, weak internal links, outdated examples, or pages published without clear search intent alignment.

These are expensive mistakes because they often survive until the next quarterly review. By then, the page has already underperformed.

Refresh work becomes a backlog

Most teams are good at launching net-new articles. Fewer are disciplined about updates. Yet aging pages often represent the fastest path to regained traffic, stronger conversion paths, and improved AI extractability.

Moz highlighted this operational shift in its piece on AI-powered SEO workflows, including role-based automation for content refreshing, competitor keyword analysis, and backlink prospecting. The important takeaway is not the novelty of named agents. It is that refresh work can be operationalized instead of treated as ad hoc cleanup.

Reporting does not change execution

A familiar failure pattern looks like this: analytics show ranking drops, dashboards get shared, and no process changes. The team learns what happened without fixing the system that caused it.

Good SEO content workflows close that loop. They tie performance back to page templates, linking logic, refresh schedules, and content coverage decisions.

A practical model for stronger SEO content workflows

A useful way to evaluate SEO content workflows is a simple four-part model: plan, produce, publish, preserve.

This is not branding. It is a working checklist for whether a team has a system or just a queue of content tasks.

Plan

This stage covers topic selection, intent mapping, SERP review, brief creation, and cluster logic.

A weak planning stage causes downstream waste. If the brief is vague, the draft will need extra revisions. If search intent is unclear, the page may rank for the wrong terms or fail to convert. If cluster relationships are not mapped early, internal links will be added late or missed entirely.

WebFX’s breakdown of SEO workflows makes a useful point here: large SEO goals become manageable when they are broken into smaller repeatable tasks. The same logic applies to editorial systems. Teams that standardize recurring decisions spend less time rebuilding the process every week.

Produce

This stage includes draft creation, factual review, editing, optimization, and brand alignment.

The difference between manual content ops and a ranking system is not whether humans edit. It is whether the production process starts from a structured brief and clear page requirements.

For example, a SaaS team building comparison pages, feature pages, and educational articles should not use the same brief shape for all three. Different page types need different proof, conversion paths, and citation signals. Teams that ignore that end up with content that reads fine but performs unevenly.
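One way to keep brief shapes distinct is to declare the required fields per page type and check each brief against them before drafting starts. This is a minimal sketch: the field names in `REQUIRED_FIELDS` are hypothetical and would need to reflect a team's own standards for proof, conversion paths, and citation signals.

```python
# Hypothetical required fields per page type; adjust to the team's own standards.
REQUIRED_FIELDS = {
    "comparison": {"target_query", "competitors", "proof_points", "cta"},
    "feature": {"target_query", "use_cases", "proof_points", "cta"},
    "educational": {"target_query", "definition", "internal_links", "faq"},
}


def missing_fields(page_type: str, brief: dict) -> list[str]:
    """Return required fields that are absent or empty in the brief, sorted by name."""
    required = REQUIRED_FIELDS[page_type]
    provided = {key for key, value in brief.items() if value}
    return sorted(required - provided)


draft_brief = {
    "target_query": "tool a vs tool b",
    "competitors": ["tool b"],
    "cta": "",  # present but empty, so it still counts as missing
}
gaps = missing_fields("comparison", draft_brief)
print(gaps)
```

A check like this does not judge the quality of a brief. It only prevents a draft from starting while a required input is still blank.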

Publish

This stage includes CMS formatting, metadata, schema, internal links, calls to action, and analytics setup.

Many teams treat publishing as admin work. It is not. This is where strong drafts often lose ranking strength because the final page lacks structure that search engines and AI systems can interpret quickly.

That is one reason pages designed for extractability tend to outperform generic blog formatting. Structured headings, concise definitions, clean FAQ blocks, and linked supporting pages make it easier for systems to understand what the page is about. For teams working on AI visibility, this is the same discipline described in our guide to LLM-ready pages.
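Some of these publishing requirements can be checked mechanically before a page goes live. The sketch below uses Python's standard `html.parser` module to look for a title, a meta description, a single `<h1>`, and a minimum number of internal links. The threshold and the rule that internal links are root-relative are assumptions for illustration, and schema and analytics checks are left out.

```python
from html.parser import HTMLParser


class PageAudit(HTMLParser):
    """Collects a few on-page signals while parsing a page's HTML."""

    def __init__(self) -> None:
        super().__init__()
        self.has_title = False
        self.has_meta_description = False
        self.h1_count = 0
        self.internal_links = 0

    def handle_starttag(self, tag, attrs):
        attr = dict(attrs)
        if tag == "title":
            self.has_title = True
        elif tag == "h1":
            self.h1_count += 1
        elif tag == "meta":
            if attr.get("name") == "description" and attr.get("content"):
                self.has_meta_description = True
        elif tag == "a":
            # Assumption: internal links are root-relative, e.g. "/pricing".
            href = attr.get("href") or ""
            if href.startswith("/") and not href.startswith("//"):
                self.internal_links += 1


def publish_issues(html: str, min_internal_links: int = 2) -> list[str]:
    """Return human-readable problems found in the page, in a fixed order."""
    audit = PageAudit()
    audit.feed(html)
    issues = []
    if not audit.has_title:
        issues.append("missing <title>")
    if not audit.has_meta_description:
        issues.append("missing meta description")
    if audit.h1_count != 1:
        issues.append(f"expected exactly one <h1>, found {audit.h1_count}")
    if audit.internal_links < min_internal_links:
        issues.append(f"found {audit.internal_links} internal link(s), expected at least {min_internal_links}")
    return issues


sample_html = (
    "<html><head><title>Guide</title></head><body>"
    "<h1>First</h1><h1>Second</h1>"
    '<a href="/pricing">Pricing</a><a href="https://example.com">External</a>'
    "</body></html>"
)
issues = publish_issues(sample_html)
print(issues)
```

Running a check of this kind as part of the publishing step, rather than at final review, is what turns publishing standards from a reminder into a gate.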

Preserve

This stage is what most manual processes neglect. It includes refresh triggers, citation monitoring, link maintenance, content decay checks, and performance reviews tied to action.

A page is not finished when it is published. It has only entered maintenance mode. The preserve stage determines whether a content program compounds or slowly decays.

This is also where platforms such as Skayle fit naturally. The value is not just generating content. It is helping teams rank higher in search and appear in AI-generated answers by connecting planning, execution, updating, and visibility tracking in one system.
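The refresh triggers in the preserve stage can be expressed as explicit rules rather than left to periodic judgment. The following sketch assumes a hypothetical `PageSnapshot` record and arbitrary thresholds (a drop of five positions, a 180-day review window). Real values depend on the site and on how ranking data is collected.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class PageSnapshot:
    """Hypothetical per-page record; field names are illustrative."""
    url: str
    rank_90_days_ago: int
    rank_now: int
    last_reviewed: date
    product_changed_since_review: bool


def refresh_reasons(
    page: PageSnapshot,
    today: date,
    max_rank_drop: int = 5,
    max_age_days: int = 180,
) -> list[str]:
    """Return the triggers that fired for this page; an empty list means no refresh is due."""
    reasons = []
    # A larger rank number is a worse position, so a positive difference is a decline.
    if page.rank_now - page.rank_90_days_ago >= max_rank_drop:
        reasons.append("rank decline")
    if (today - page.last_reviewed).days > max_age_days:
        reasons.append("stale review date")
    if page.product_changed_since_review:
        reasons.append("product change")
    return reasons


page = PageSnapshot(
    url="/blog/pricing-guide",
    rank_90_days_ago=4,
    rank_now=11,
    last_reviewed=date(2025, 1, 10),
    product_changed_since_review=False,
)
reasons = refresh_reasons(page, today=date(2025, 9, 1))
print(reasons)
```

Returning the reasons, not just a yes or no, keeps the refresh queue explainable: an editor can see why a page was flagged and decide what kind of update it needs.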

What the speed gap actually means for budget and output

Speed is often framed as a productivity issue. In SEO, speed is also a coverage issue.

According to Typeface’s end-to-end SEO workflow benchmark, automated workflows can scale content production up to 6x faster than traditional manual methods. That number should not be read as a promise for every team. It should be read as evidence that workflow design materially changes throughput.

The hidden budget implication is straightforward. If a team can move from one-off article production to structured, end-to-end SEO content workflows, the same budget can support:

- More pages published per quarter

- Faster topical cluster completion

- More frequent content refreshes

- Better consistency in on-page elements

- Shorter feedback loops between ranking data and editorial action

A concrete scenario

Consider a SaaS company with a small growth team and an agency-supported content program.

Baseline:

- Topics live in spreadsheets

- Briefs are built manually

- Drafts are produced by freelancers

- Editors optimize after the fact

- Publishing depends on marketing ops availability

- Refreshes happen only on major traffic drops

Intervention:

- Standardize brief templates by page type

- Centralize topic, intent, and internal link mapping

- Build publishing requirements into the workflow instead of leaving them to final review

- Add refresh triggers for declining rankings, outdated claims, and missing citations

- Connect reporting to the next editorial sprint

Expected outcome over one to two quarters:

- Higher page throughput without linear headcount growth

- Fewer revision cycles per asset

- Faster refresh velocity on aging pages

- More stable internal linking across clusters

- Better readiness for AI answer extraction

This kind of operational improvement is consistent with the workflow patterns described by Media Junction’s AI-assisted SEO process, which outlines how structured AI workflows can replace manual research and drafting bottlenecks for pillar pages and blogs.

The important point is not that every team needs full automation. It is that teams need fewer disconnected steps.

The contrarian point: do not optimize for content volume, optimize for system reliability

A common mistake in SEO content workflows is chasing lower drafting time while leaving the rest of the process untouched.

That is the wrong target.

Do not optimize for content volume first. Optimize for system reliability first. A team that publishes 20 inconsistent articles with weak linking and no refresh discipline often loses to a team that publishes 8 tightly integrated pages that reinforce a cluster and stay updated.

This is where agency-led models often underdeliver for scaling SaaS companies. Agencies can produce output. They usually do not own the full operating system across research, site architecture, publishing standards, refresh logic, and AI citation tracking.

That gap matters because Google rankings and AI answers both reward structured authority, not just isolated pieces of good writing. The trust signals that improve citation likelihood are similar to the trust signals that improve rankings: clean structure, specific definitions, supporting evidence, updated pages, and recognizable topical depth. That is closely aligned with this deeper look at content trust for AI extraction.

A numbered checklist for fixing fragmented workflows

Teams auditing their current process can use this checklist:

1. Map every handoff from keyword research to reporting.

2. Count how many tools, documents, and people touch one page before publish.

3. Separate page types so briefs and quality standards match intent.

4. Define required on-page elements before drafting starts, not at the final review stage.

5. Build internal linking targets into the brief, not as a post-publish task.

6. Set refresh triggers for rank decline, stale facts, and product changes.

7. Connect reporting to editorial decisions for the next sprint.

8. Track whether content is appearing in AI answers, not just whether it ranks in Google.

If a team cannot answer those eight points clearly, it does not have a ranking system yet.
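The first two checklist items, mapping handoffs and counting who and what touches a page, can be done with a short script once the steps are written down. This is a rough sketch: the stages, owners, and tools below are invented for illustration, and a handoff is counted whenever ownership changes between consecutive steps.

```python
def handoff_summary(steps: list[tuple[str, str, str]]) -> dict[str, int]:
    """Summarize an ordered list of (stage, owner, tool) tuples for one page."""
    owners = [owner for _, owner, _ in steps]
    tools = {tool for _, _, tool in steps}
    # Count each point where the page moves from one owner to a different one.
    handoffs = sum(1 for prev, nxt in zip(owners, owners[1:]) if prev != nxt)
    return {"handoffs": handoffs, "people": len(set(owners)), "tools": len(tools)}


# Hypothetical path of a single article through a manual process.
article_path = [
    ("keyword research", "seo lead", "spreadsheet"),
    ("brief", "seo lead", "docs"),
    ("draft", "freelancer", "docs"),
    ("edit", "editor", "docs"),
    ("seo check", "seo lead", "seo tool"),
    ("publish", "marketing ops", "cms"),
    ("report", "seo lead", "dashboard"),
]
summary = handoff_summary(article_path)
print(summary)
```

Comparing this summary across page types, or before and after a process change, gives the audit a baseline instead of an impression.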

Which model fits which company in 2026

Not every company needs the same level of operational maturity. The right model depends on content volume, site complexity, and growth expectations.

Manual workflows still make sense when

- The site is small and focused on a narrow product set

- Publishing volume is low

- A few high-value pages matter more than broad topic coverage

- Subject matter requires heavy expert involvement on each draft

In these cases, manual SEO content workflows can be acceptable if the team still enforces strong briefs, internal linking, and refresh discipline.

Ranking systems become necessary when

- The company is building multiple topic clusters

- Content production spans internal teams and external contributors

- Product marketing, SEO, and demand generation need shared workflows

- Refresh work is piling up faster than the team can handle

- The company wants visibility in both traditional search and AI-generated answers

This is where fragmented processes create real opportunity cost. A page that is never refreshed, never linked correctly, or never structured for extractability is not just underperforming. It is weakening the broader site system.

Profound

Profound is part of the newer market focused on AI visibility and brand presence in generated answers. Teams considering tools in this category should assess whether visibility measurement is connected to content execution or remains a separate reporting layer.

AirOps

AirOps is commonly associated with workflow-driven content operations. The core evaluation question is whether workflow automation improves ranking consistency across the full lifecycle or mainly accelerates production.

Searchable

Searchable reflects the broader shift toward discoverability tooling. For buyers, the practical issue is whether the platform helps operationalize updates, citations, and page maintenance rather than simply surface insights.

PromptWatch

PromptWatch sits in the monitoring and prompt visibility discussion. Monitoring matters, but for most SaaS teams the bigger operational need is closing the gap between what gets measured and what gets fixed.

AthenaHQ

AthenaHQ is another example of the market moving toward AI search intelligence. The useful comparison is not feature-by-feature. It is whether the model supports compounding authority through coordinated content workflows or adds another dashboard to an already fragmented process.

For companies that need both execution and visibility, platforms that connect content operations with ranking and AI answer presence are structurally better aligned with the problem.

What better design and measurement look like on the page

Workflow quality is visible on the page itself.

Strong pages built from reliable SEO content workflows usually have:

- A direct definition near the top

- Tight heading hierarchy

- Clear entity coverage

- Internal links that support topic depth

- FAQs phrased the way users ask questions

- Calls to action aligned with the search stage

- Structured sections that an AI system can quote without heavy interpretation

Weak pages usually reveal workflow fragmentation. They repeat the keyword loosely, bury the answer, use generic headings, and offer no obvious refresh discipline.

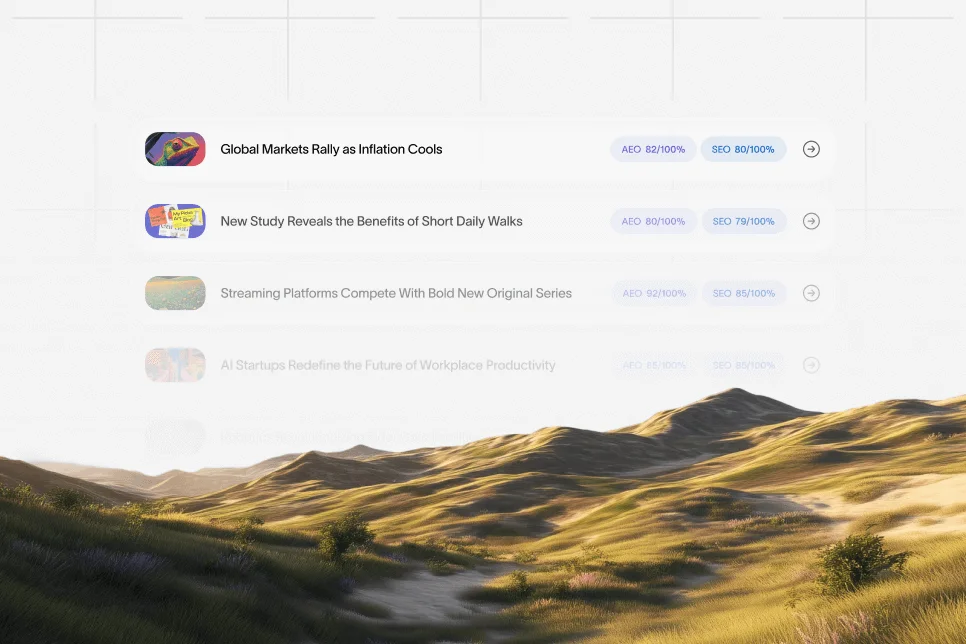

Measurement should follow the new funnel: impression -> AI answer inclusion -> citation -> click -> conversion.

That means teams should track more than sessions and rankings. They should also review whether a page:

- Is being cited or paraphrased in AI answers

- Has stable definitions and proof worth quoting

- Converts after the click with a clear next step

- Supports nearby pages through internal links and cluster depth

This is why a ranking and visibility platform matters more now than a standalone writing tool. The operating problem is not just draft generation. It is whether the team can measure authority, maintain pages, and improve citation coverage over time.
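The funnel described above (impression, AI answer inclusion, citation, click, conversion) can be tracked as stage-to-stage rates. The sketch below assumes a team already has a count for each stage from its own tooling. The stage keys and the sample numbers are hypothetical, and how AI answer inclusion is counted will vary by measurement tool.

```python
from typing import Optional

# Stage order follows the funnel named in the text; key names are illustrative.
FUNNEL = ["impressions", "ai_inclusions", "citations", "clicks", "conversions"]


def stage_rates(counts: dict[str, int]) -> dict[str, Optional[float]]:
    """Return the rate from each stage to the next; None when the earlier stage is zero."""
    rates: dict[str, Optional[float]] = {}
    for prev, nxt in zip(FUNNEL, FUNNEL[1:]):
        rates[f"{prev}->{nxt}"] = counts[nxt] / counts[prev] if counts[prev] else None
    return rates


# Hypothetical monthly counts for one page.
page_counts = {
    "impressions": 10000,
    "ai_inclusions": 500,
    "citations": 100,
    "clicks": 40,
    "conversions": 4,
}
rates = stage_rates(page_counts)
for stage, rate in rates.items():
    print(stage, rate)
```

Looking at the rate between adjacent stages shows where a page leaks: a page that is included in answers but rarely cited has a different problem from one that is cited but does not convert after the click.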

FAQ: what teams usually ask before changing their workflow

What is an SEO content workflow?

An SEO content workflow is the full process used to research, create, optimize, publish, update, and measure content. A strong workflow reduces delays, standardizes quality, and connects performance data back to future content decisions.

How much of an SEO workflow can realistically be automated in 2026?

Research support, brief creation, optimization checks, internal link recommendations, refresh identification, and reporting triggers can all be partly automated. Editorial judgment, product positioning, and final quality control still benefit from human oversight.

Are agencies always the wrong choice for SEO content workflows?

No. Agencies can work well for companies that need expert support on a limited number of pages or a focused campaign. The problem appears when agency output sits outside the company’s ongoing ranking system and refresh process.

What is the biggest hidden cost in fragmented SEO?

The largest hidden cost is not writer spend. It is the compounded loss from delays, inconsistent execution, weak refresh discipline, and reporting that does not change the next cycle of work.

How should teams decide when to move from manual ops to a system?

The shift usually makes sense when content volume rises, multiple contributors are involved, refresh backlog grows, and the company needs reliable visibility across both Google and AI answers. At that point, disconnected documents and handoffs start costing more than a coordinated system.

For SaaS teams reviewing their current setup, the next step is not to add another standalone tool. It is to reduce fragmentation, make visibility measurable, and build SEO content workflows that compound over time. Teams that want that level of clarity can use a platform like Skayle to measure AI visibility, understand citation coverage, and connect content execution to ranking outcomes.

References

- monday.com — SEO Workflow: How To Build A System That Drives Results

- SEObotAI — SEO Workflow Automation: 7 Best Practices

- Moz — 5 AI-Powered Workflows Every SEO Should Be Using Today

- WebFX — 5 SEO Workflows That Enhance Your SEO Strategy

- Typeface — Introducing a New End-to-End SEO Workflow That Scales

- Media Junction — AI for SEO Content: A Step-by-Step Workflow for Better Results

- Reddit — SEO workflow discussion

- Top 20 Content Workflow Tools That Can Maximize Team …