TL;DR

Searchable alternatives should be evaluated based on engine coverage, visibility analytics, automation, and the ability to turn insights into content improvements. Tools that only monitor AI visibility provide limited value compared to systems that connect visibility data with execution.

Companies are discovering that traditional SEO reporting no longer explains where their brand appears in AI-generated answers. Tools like Searchable emerged to measure visibility across engines such as ChatGPT and Gemini, but many teams now compare several platforms before committing.

A "searchable" brand today is one that can be discovered, extracted, and cited by both search engines and AI systems. The platform a team chooses determines whether it only monitors visibility or actively improves it.

This guide breaks down the real criteria teams use when evaluating Searchable alternatives in 2026, including engine coverage, analytics depth, automation, and the ability to turn visibility insights into ranking improvements.

Why "Searchable" Matters in the AI Search Era

The term searchable originally described digital content that could be easily queried or indexed. According to the Cambridge English Dictionary, something is searchable when information within it can be found through automated search functionality.

In the AI search landscape, the meaning has expanded. Content is no longer only indexed by search engines. It is also extracted, summarized, and cited by AI systems.

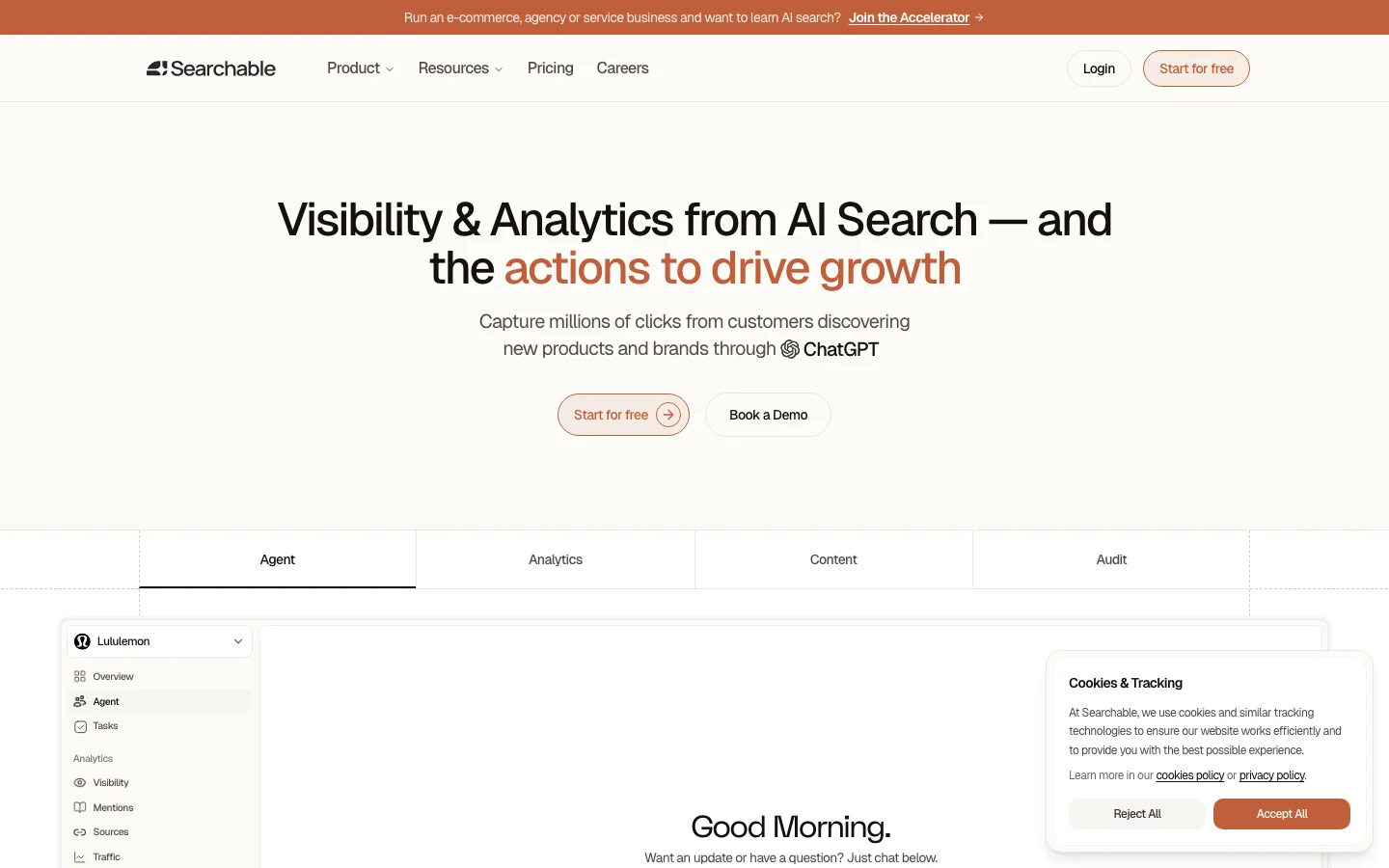

Platforms like Searchable position themselves as "full stack visibility" tools designed to measure where brands appear in AI-generated responses and search results. The platform tracks presence across multiple engines and surfaces visibility gaps where competitors are cited instead.

According to Searchable's public documentation and product positioning, the platform analyzes how brands appear across AI platforms including ChatGPT, Gemini, and Perplexity.

However, most teams evaluating Searchable alternatives are not just asking "Where do we appear?"

They are asking:

Which prompts include our brand in AI answers?

Why do competitors get cited instead?

Which pages influence those answers?

How can we fix the gap quickly?

That shift changes how buyers evaluate platforms.

The AI Visibility Stack Buyers Should Compare

A useful way to evaluate Searchable alternatives is to break the problem into five layers of capability.

This guide refers to the model as the AI visibility stack because each layer builds on the previous one.

Engine coverage

Visibility measurement

Content extraction signals

Execution and optimization

Reporting and business impact

Most tools cover only one or two layers. Mature platforms address all five.

1. Engine Coverage: Which AI Systems Are Tracked

The first evaluation question is simple but often overlooked: Which AI engines does the platform actually measure?

Modern AI discovery does not happen in a single place. Brands appear across:

ChatGPT

Google AI Overviews

Gemini

Perplexity

Claude

According to the company’s product description and LinkedIn profile, Searchable tracks visibility across several AI engines including ChatGPT, Perplexity, and Gemini.

Engine coverage matters because each system surfaces sources differently.

For example:

ChatGPT often cites authoritative blog posts

Perplexity emphasizes referenced sources

Google AI Overviews prefer structured content and trusted domains

A platform that measures only one or two engines will provide an incomplete picture of brand discoverability.

Teams evaluating alternatives should verify:

Which engines are tracked

How frequently prompts run

Whether prompts are customizable

Whether competitor visibility is included

Without multi-engine coverage, visibility data quickly becomes misleading.
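The engine checklist above can be turned into a quick coverage-gap check. The following is a minimal sketch, not a vendor integration: the required engine list is taken from this article, while `coverage_gaps` and the `vendor_tracked` set are hypothetical names and sample data used only for illustration.

```python
# Engines this article lists as relevant for AI discovery.
REQUIRED_ENGINES = frozenset(
    {"ChatGPT", "Google AI Overviews", "Gemini", "Perplexity", "Claude"}
)


def coverage_gaps(tracked: set[str],
                  required: frozenset[str] = REQUIRED_ENGINES) -> list[str]:
    """Return the required engines a platform does not track, sorted by name."""
    return sorted(required - tracked)


# Hypothetical vendor that tracks three of the five engines.
vendor_tracked = {"ChatGPT", "Gemini", "Perplexity"}
gaps = coverage_gaps(vendor_tracked)
print(gaps)
```

An empty result means the platform covers every engine on the list. Any engine that remains is a blind spot to raise with the vendor during evaluation.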

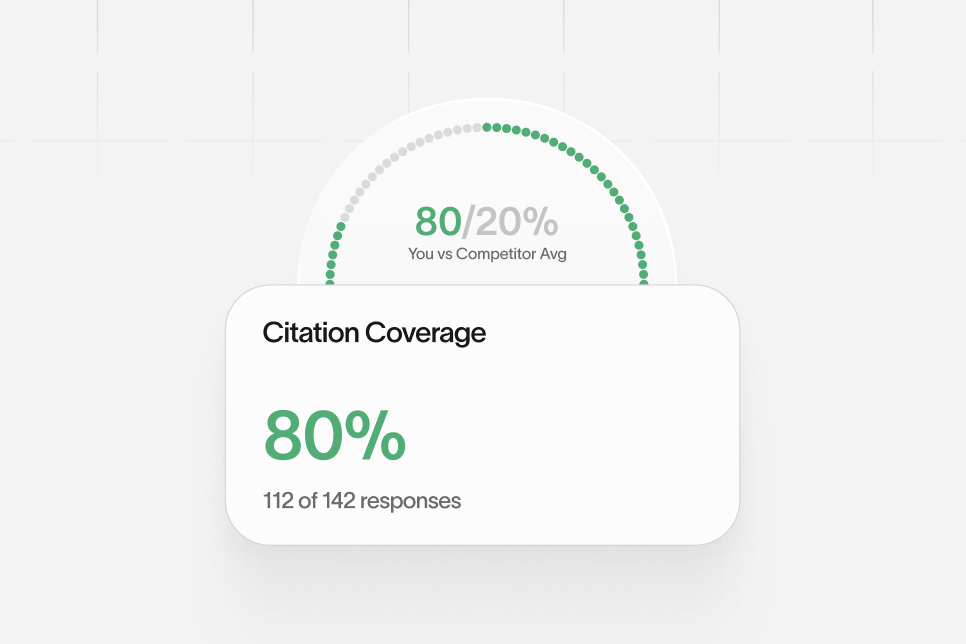

2. Visibility Metrics That Go Beyond Mentions

Tracking visibility requires more than simply checking if a brand appears in AI responses.

The most useful platforms break results into multiple signals, such as:

Citations

Mentions

Recommendations

Share of responses

This distinction matters.

A brand may be mentioned in an answer but not cited as a source. Citations carry more authority because they indicate that the AI system used the page as a reference.
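To make the mention versus citation distinction concrete, the sketch below computes both rates from a small set of recorded AI answers. It assumes a hypothetical record shape (`Response`) and made-up sample data. Real platforms expose their own export formats, so treat the field names as placeholders.

```python
from dataclasses import dataclass


@dataclass
class Response:
    """One AI answer for one prompt (hypothetical record shape)."""
    engine: str
    prompt: str
    mentioned_brands: set[str]  # brands named in the answer text
    cited_domains: set[str]     # domains the answer lists as sources


def visibility_metrics(responses: list[Response], brand: str,
                       domain: str) -> dict[str, float]:
    """Return mention rate and citation rate as fractions of all responses."""
    total = len(responses)
    if total == 0:
        return {"mention_rate": 0.0, "citation_rate": 0.0}
    mentions = sum(1 for r in responses if brand in r.mentioned_brands)
    citations = sum(1 for r in responses if domain in r.cited_domains)
    return {"mention_rate": mentions / total, "citation_rate": citations / total}


# Illustrative data only: "Acme" and "Rival" are invented brands.
prompt = "best tools for customer onboarding"
sample = [
    Response("chatgpt", prompt, {"Acme", "Rival"}, {"rival.example"}),
    Response("perplexity", prompt, {"Rival"}, {"rival.example"}),
    Response("gemini", prompt, {"Acme"}, {"acme.example"}),
    Response("claude", prompt, set(), set()),
]
metrics = visibility_metrics(sample, brand="Acme", domain="acme.example")
print(metrics)
```

In this sample the brand is mentioned in half of the responses but cited in only a quarter, which is the kind of gap a presence-only metric would hide.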

Platforms positioned around AI visibility often highlight analytics dashboards that show this breakdown. For example, Searchable's official site describes a centralized analytics environment for measuring AI search visibility across queries.

When comparing alternatives, teams should confirm whether the tool provides:

Prompt-level analysis

Competitor citation comparisons

Visibility share metrics

Historical trend tracking

Tools that provide only "presence" signals give an incomplete picture.

A Practical Evaluation Model Teams Use

One practical model for comparing Searchable alternatives focuses on four decision layers:

Coverage – Which engines and prompts are monitored

Diagnostics – Why a brand appears or fails to appear

Execution – Whether insights lead to content changes

Measurement – Whether improvements translate into traffic or leads

Platforms often perform well in the first layer but fall short in the others.

For example, many AI visibility tools focus primarily on reporting dashboards. They show visibility data but do not provide mechanisms to fix the issues revealed.

That difference becomes important when teams attempt to scale visibility improvements across dozens or hundreds of pages.

Real-World Example: Fixing an AI Citation Gap

Consider a B2B SaaS company targeting the query:

"best tools for customer onboarding"

Initial visibility analysis shows:

ChatGPT cites three competitors

Perplexity references two competitors

The company never appears in AI answers

Baseline:

AI citation rate: 0%

Organic ranking: page 2

The team then ships a structured content update focused on:

clearer definitions

FAQ blocks

entity signals

structured data

Over a six-week window, visibility changes as follows:

ChatGPT mentions the brand in some responses

Perplexity begins citing the guide

Google rankings move to page 1

The monitoring tool itself does not cause the improvement. The gain comes from acting on the gap the tool reveals.

Platforms that stop at dashboards surface these gaps but cannot close them.
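Of the update items in this example, FAQ blocks and structured data are the easiest to show in code. The sketch below builds schema.org `FAQPage` markup as JSON-LD from question and answer pairs. The helper name `faq_jsonld` and the sample question are assumptions for illustration, and nothing here implies that adding this markup guarantees a citation.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Serialize question/answer pairs as schema.org FAQPage JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2)


# Hypothetical FAQ entry for the onboarding guide in the example.
markup = faq_jsonld([
    ("What is customer onboarding software?",
     "Software that guides new customers through setup and first use."),
])
print(markup)
```

The resulting string would typically be embedded in the page inside a `script` tag of type `application/ld+json`.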

Automation vs Manual SEO Operations

Another key factor when comparing Searchable alternatives is operational efficiency.

AI visibility monitoring produces a large volume of data.

Teams quickly encounter questions such as:

Which pages influence AI answers most?

Which prompts should be monitored daily?

Which content updates produce measurable gains?

Some platforms attempt to automate parts of this process.

According to its public product information, Searchable aims to identify visibility gaps and surface improvement opportunities automatically.

Automation matters because AI monitoring expands the number of queries teams track.

A typical setup might include:

30–50 prompts

multiple AI engines

daily monitoring

Manual analysis quickly becomes impractical.
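A rough calculation shows why. The sketch below multiplies out the typical setup described above, assuming the five engines named earlier in this article and one run per prompt per engine per day. Both assumptions are illustrative and will differ per team.

```python
def daily_checks(prompts: int, engines: int, runs_per_day: int = 1) -> int:
    """Number of AI answers to review per day for a monitoring setup."""
    return prompts * engines * runs_per_day


ENGINES = 5  # assumption: ChatGPT, Google AI Overviews, Gemini, Perplexity, Claude

low = daily_checks(prompts=30, engines=ENGINES)
high = daily_checks(prompts=50, engines=ENGINES)
monthly_high = high * 30  # assumption: a 30-day month

print(f"{low}-{high} answers per day, up to {monthly_high} per month")
```

Even the lower bound means well over a hundred answers to read each day, before any competitor comparison is added.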

The strongest platforms therefore automate:

prompt discovery

competitor tracking

citation monitoring

visibility alerts

Automation does not replace strategy, but it significantly reduces operational overhead.
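As one illustration of what a visibility alert might do, the sketch below compares citation rates per prompt between two periods and flags prompts whose rate fell by at least a threshold. The function name, the 10-point default threshold, and the sample rates are all assumptions. No specific vendor is known to implement alerts this way.

```python
def visibility_alerts(previous: dict[str, float],
                      current: dict[str, float],
                      min_drop: float = 0.10) -> list[str]:
    """Return prompts whose citation rate dropped by at least min_drop."""
    alerts = []
    for prompt, prev_rate in previous.items():
        # A prompt missing from the current period counts as a rate of 0.
        curr_rate = current.get(prompt, 0.0)
        if prev_rate - curr_rate >= min_drop:
            alerts.append(prompt)
    return alerts


# Illustrative citation rates (fraction of responses citing the brand's page).
last_week = {"best SaaS onboarding platforms": 0.5,
             "tools for improving onboarding conversion": 0.4}
this_week = {"best SaaS onboarding platforms": 0.25,
             "tools for improving onboarding conversion": 0.35}

flagged = visibility_alerts(last_week, this_week)
print(flagged)
```

Only the first prompt is flagged here, because the second dropped by less than the threshold. A rule like this lets a team review a handful of prompts instead of the full daily volume.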

Common Mistakes Teams Make When Choosing a Tool

Many organizations evaluating Searchable alternatives make similar mistakes during the selection process.

Choosing a Dashboard Instead of a System

The most common mistake is selecting a reporting dashboard rather than a full operational platform.

Dashboards answer questions like:

Where do we appear?

But they do not answer:

What content should we create next?

Which pages should we update?

Which changes will increase AI citations?

Without execution capabilities, insights rarely translate into improved visibility.

Ignoring Prompt Design

AI visibility monitoring depends heavily on prompt design.

Poor prompts often include brand names directly, which artificially inflates visibility.

Good prompts simulate how real users ask questions.

Examples include:

"best SaaS onboarding platforms"

"tools for improving onboarding conversion"

Brand-neutral prompts reveal true discoverability.
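A prompt set can be screened for brand names before it is used for monitoring. The following sketch assumes a hypothetical brand called "Acme" and a helper named `is_brand_neutral`. It matches brand terms as whole words, case-insensitively, which works for simple names but would need adjusting for terms that start or end with punctuation.

```python
import re


def is_brand_neutral(prompt: str, brand_terms: list[str]) -> bool:
    """Return True if the prompt contains none of the brand terms as whole words."""
    for term in brand_terms:
        pattern = r"\b" + re.escape(term) + r"\b"
        if re.search(pattern, prompt, flags=re.IGNORECASE):
            return False
    return True


candidate_prompts = [
    "best SaaS onboarding platforms",
    "tools for improving onboarding conversion",
    "is Acme good for customer onboarding",  # names the brand, so it is excluded
]
neutral = [p for p in candidate_prompts if is_brand_neutral(p, ["Acme"])]
print(neutral)
```

Branded prompts are still worth tracking separately, but mixing them into the same set inflates visibility numbers in the way described above.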

Measuring the Wrong Metrics

Another mistake is tracking only mentions rather than citations.

Mentions are weak signals. Citations indicate that the AI system trusted the page as a source.

Platforms that distinguish between these signals provide significantly more useful data.

Contrarian View: Visibility Data Alone Is Not Enough

Many companies assume that measuring AI search visibility automatically improves rankings.

That assumption is incorrect.

Monitoring tools expose problems. They do not solve them.

The real improvement happens when teams:

update content

improve internal linking

add structured data

clarify topical authority

Tools that combine visibility analysis with content execution workflows therefore create stronger outcomes than tools focused purely on monitoring.

Some platforms approach this by connecting visibility insights to content production systems so teams can publish updates immediately after identifying gaps.

For organizations running large content programs, this integration significantly reduces operational friction.

How Different Searchable Alternatives Position Themselves

While many platforms address AI visibility, they tend to fall into three categories.

AI Visibility Monitoring Platforms

These tools focus primarily on reporting.

Capabilities typically include:

prompt monitoring

brand presence tracking

competitor comparisons

They help teams understand visibility but do not provide mechanisms for improving it.

Content Optimization Platforms

Another category focuses on optimizing pages to increase their chances of appearing in AI answers.

These tools analyze:

content structure

answer formatting

entity coverage

However, many do not track AI visibility directly.

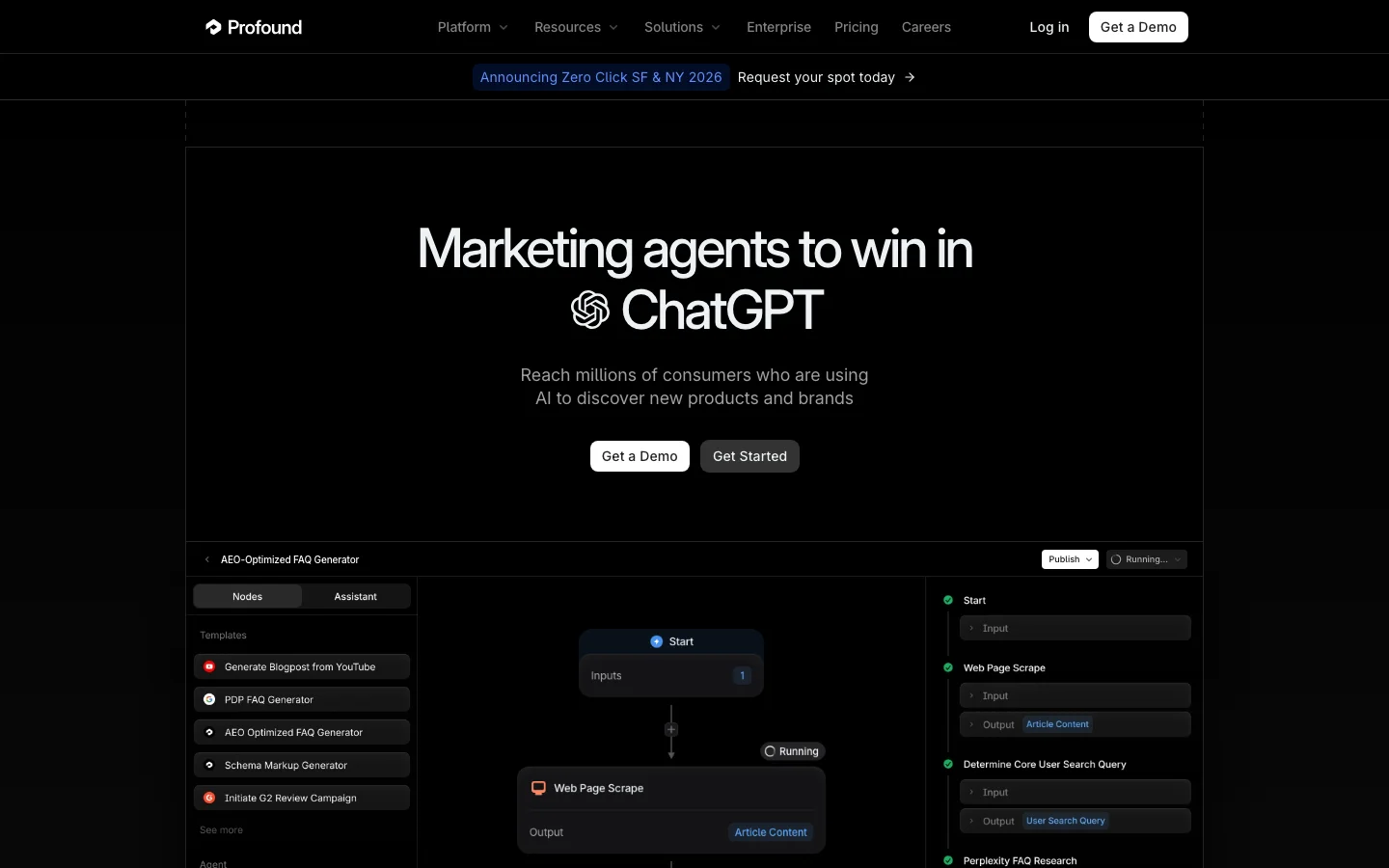

Hybrid AI Visibility Systems

A third category combines both layers.

These platforms connect:

AI visibility monitoring

SEO content production

performance measurement

Systems in this category aim to shorten the feedback loop between insight and action.

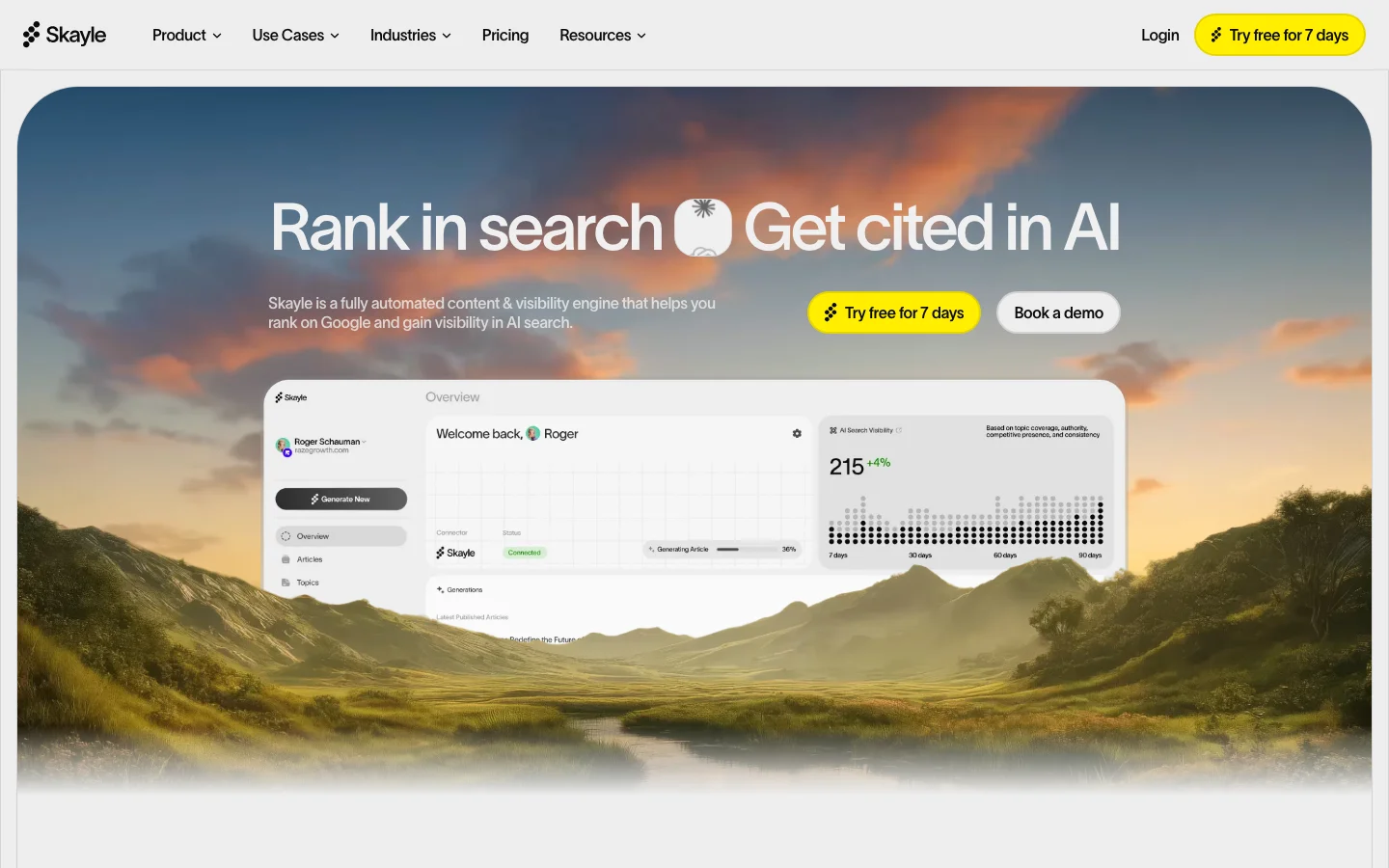

For example, platforms such as Skayle position themselves as ranking and AI visibility systems that combine content creation workflows with visibility tracking. The aim is to let teams act on insights quickly rather than treat them as passive reports.

The distinction matters because AI search optimization often requires rapid iteration.

Tools referenced in this comparison: Profound, AirOps, Skayle.

What to Look for During a Trial

Many vendors offer trial periods for evaluation.

According to its official product documentation, Searchable itself offers a short free trial.

During a trial, teams should focus on practical questions rather than feature lists.

Examples include:

How quickly can prompts be configured?

Are competitor insights easy to interpret?

Does the system highlight clear improvement opportunities?

How easy is it to act on those insights?

Trials should simulate real workflows rather than simply testing interface features.

Teams that approach trials this way often reach a clearer decision within a few days.

Frequently Asked Questions

What does "searchable" mean in the context of AI search?

In traditional computing, searchable content is information that can be queried and retrieved through a search system. In the AI search context, it also means content that can be extracted, summarized, and cited by AI engines when generating answers.

How do AI visibility platforms differ from traditional SEO tools?

Traditional SEO tools focus primarily on rankings and keyword positions in search engines. AI visibility platforms analyze how brands appear in AI-generated answers across systems such as ChatGPT or Gemini.

Which AI engines should a visibility platform track?

Most organizations focus on engines with the highest usage and influence. These typically include ChatGPT, Google AI Overviews, Gemini, Perplexity, and Claude.

Why are citations more important than mentions?

Mentions indicate that an AI system named a brand in an answer. Citations indicate the system used a specific page as a source. Citations therefore signal stronger authority and influence.

Can monitoring AI search visibility improve rankings directly?

Monitoring itself does not change rankings. Improvements occur when teams update content, improve site structure, or create new pages based on insights revealed by visibility analysis.

Final Takeaway

Evaluating Searchable alternatives requires looking beyond feature lists and focusing on how visibility insights translate into real ranking improvements. Platforms differ widely in their ability to connect monitoring, diagnostics, and execution.

Organizations that treat AI visibility as an operational system rather than a reporting exercise are far more likely to improve how often their brand appears in AI-generated answers.

Teams exploring this space should focus on platforms that combine visibility measurement with actionable workflows so insights can immediately lead to better content, stronger authority signals, and higher citation rates.

For companies investing seriously in AI-driven discovery, understanding how search engines and AI systems surface information is now as critical as traditional SEO once was.