TL;DR

Niche programmatic hubs scale when a maintainable dataset and a modular template system produce genuinely useful pages, not thin variations. Control indexation, ship in batches, and tie refresh work to rankings, citations, and conversions.

Niche programmatic hubs are one of the few SEO plays in 2026 that can still compound without hiring a bigger content team. But most SaaS teams fail because they scale URLs before they scale trust, templates, and crawl control.

A programmatic hub scales only when its dataset, templates, and internal links behave like a product—not a pile of pages.

This guide covers how to pick a niche that supports high-authority page sets, how to design templates that avoid thin content, how to control crawl/indexation, how to instrument conversions, and how to optimize for AI citations alongside rankings.

Why niche programmatic hubs work for long-tail SaaS in 2026

Programmatic hubs are structured page sets generated from a consistent dataset and template system, usually organized under a hub (category) page and several sub-hubs (facets), with hundreds to thousands of long-tail leaf pages.

They work when the long tail has two properties:

- The query space is repetitive (patterns like “X for Y,” “X vs Y,” “best X in Y”).

- The decision criteria are consistent enough to standardize across pages without becoming generic.

For SaaS, the long tail is often vertical + workflow + constraint.

Examples of niche hub shapes that tend to work:

- “SOC 2 compliance software for {industry}” (vertical + buyer concern)

- “Expense management policy template for {country}” (vertical + jurisdiction)

- “Webhook integration with {tool}” (workflow + ecosystem)

- “Alternatives to {competitor} for {segment}” (comparison + segmentation)

Programmatic hubs matter more now because AI answers have changed discovery. Buyers increasingly start with conversational prompts (“What’s the best X for Y?”), and those systems pull from sources that are structured, explicit, and consistent.

A practical implication: a programmatic hub is not only an index of pages. It is a citation surface.

Teams that want a deeper view on how this infrastructure compounds should align hub decisions with a modern programmatic stack; the details in Skayle’s breakdown of programmatic page infrastructure map directly to hub scale problems (templates, crawl control, refresh loops).

The business case: why niche beats broad

Broad programmatic plays (“all industries,” “all tools,” “all templates”) are the default failure mode. They create lots of pages that:

- don’t earn links,

- don’t get indexed consistently,

- don’t convert,

- and don’t get cited.

Niche programmatic hubs trade page count for authority density.

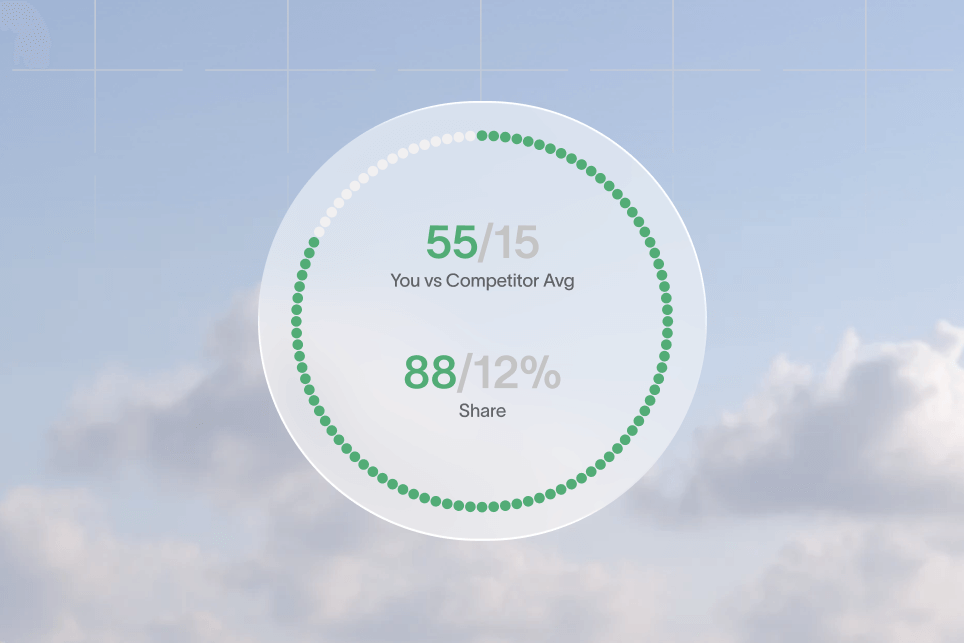

Authority density is not a single number. It is a combination of three things:

- how many pages exist in the cluster,

- how internally coherent the cluster is,

- and how much evidence (examples, entities, references, schema) each page carries.

In 2026, authority density is what keeps a hub stable when rankings fluctuate and when AI systems decide what to cite.

The new funnel to optimize: impression → inclusion → citation → click → conversion

Traditional SEO funnels optimized for impressions and clicks.

Programmatic hubs in 2026 should be designed for:

- Impression (ranking + being eligible to be extracted)

- AI answer inclusion (being summarized accurately)

- Citation (earning the link mention)

- Click (standing out as the “source”)

- Conversion (turning that click into pipeline)

That means template work is not cosmetic. It is acquisition infrastructure.

The H.U.B.S. Loop: a scalable model for building programmatic hubs

Point of view: Programmatic hubs should be treated like a product launch with QA gates, not like a content sprint with a publish target. Scaling without gates creates index bloat, brand inconsistency, and low-trust pages that won’t convert or get cited.

A named model helps teams operationalize this.

The H.U.B.S. Loop (Harvest → Unify → Build → Ship → Sustain) is a five-step method to scale niche programmatic hubs without losing quality.

Harvest: capture the dataset before writing a word

Harvesting means defining the data you can reliably maintain.

A hub should have a dataset that can answer, at minimum:

- What is this page about? (primary entity)

- Who is it for? (segment/vertical)

- What is the job-to-be-done? (workflow)

- What are the constraints? (pricing tier, compliance, region, team size)

- What proof can be repeated? (templates, examples, documentation links)

If the dataset cannot support those fields, the pages will drift into thin paraphrases.

Unify: standardize the ontology and the internal linking map

Unify means forcing consistency:

- entity naming (“QuickBooks Online” vs “QuickBooks”),

- category logic (is “healthcare” a vertical, a segment, or both?),

- facet hierarchy (what belongs at /integrations/ vs /use-cases/?),

- and internal link rules (hub → sub-hub → leaf, plus lateral links).

This is where most teams silently lose. Two writers and one engineer can easily produce three different interpretations of the same “niche.”

Build: templates that can carry unique value at scale

Build is the template system: page layout, modules, schema, and reusable components.

Templates need “unique-value slots” that are filled by data or by controlled editorial rules. Without that, every page has the same paragraphs with swapped nouns.
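One way to enforce unique-value slots is to refuse to render a page when any slot is empty. The sketch below is illustrative only: the template text, the slot names, and `render_leaf` are hypothetical, and a real template would carry far more modules.

```python
from string import Template

# Hypothetical leaf-page fragment; every $placeholder is a unique-value slot.
LEAF_TEMPLATE = Template(
    "$name uses $auth_method for authentication and syncs $objects. "
    "When not to use it: $limitation"
)
REQUIRED_SLOTS = ("name", "auth_method", "objects", "limitation")


def render_leaf(row: dict[str, str]) -> str:
    """Render a fragment only if every unique-value slot has real content."""
    empty = [slot for slot in REQUIRED_SLOTS if not row.get(slot, "").strip()]
    if empty:
        # Failing loudly keeps "swapped noun" pages out of the release batch.
        raise ValueError(f"unfilled unique-value slots: {', '.join(empty)}")
    return LEAF_TEMPLATE.substitute(row)
```

A row that lacks a limitation or an auth method then blocks its own page instead of shipping as generic copy.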

Ship: release in crawl-safe batches

Ship is a release plan that respects crawl budget, indexing latency, and quality assurance.

Shipping 2,000 URLs at once is often worse than shipping 50 pages with strong internal linking and clean extraction.

Sustain: refresh loops tied to rankings, citations, and conversions

Sustain is the compounding engine:

- detect decay,

- update datasets,

- improve modules,

- and re-ship in a controlled way.

For SaaS teams building for AI answers, this step should incorporate citation monitoring; Skayle’s workflow on measuring citation gaps is a useful way to decide what to refresh first.

Choosing a niche and dataset that can support high-authority page sets

The fastest way to waste a quarter is to pick a hub topic that looks big but cannot carry depth.

A niche that scales typically has:

- clear terminology (entities are not ambiguous),

- stable attributes (fields don’t change weekly),

- strong intent (queries imply evaluation, not trivia),

- and enough variation to justify pages (not just synonyms).

A practical niche test: “Can the page be wrong?”

A contrarian but reliable test: avoid niches where the content cannot be meaningfully incorrect.

If every page could say “It depends” and still feel acceptable, the hub will not earn trust.

Good niches allow precise claims:

- “This integration supports OAuth 2.0”

- “This plan includes SSO”

- “This workflow requires audit logs”

Bad niches force vague copy:

- “This tool helps teams work better”

- “This category is growing fast”

Dataset minimum viable fields (MVF)

Before approving a hub, define MVF fields.

For a SaaS “integration” hub, a practical MVF could be:

- Integration name (entity)

- Integration type (native, Zapier-based, API)

- Auth method (OAuth, API key)

- Directionality (push, pull)

- Supported objects (contacts, invoices, tickets)

- Setup steps (3–6 steps)

- Common failure modes (2–4)

- Official docs URL

When mentioning documentation, link it. For example, OAuth terminology is best referenced directly from the IETF OAuth 2.0 specification (RFC 6749) or vendor docs, not paraphrased from random blogs.
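The MVF list above can be expressed as a typed record with a completeness check. This is a minimal sketch: the class name `IntegrationRecord`, its field names, and `mvf_problems` are hypothetical, and the numeric ranges simply mirror the list above.

```python
from dataclasses import dataclass, field, fields


@dataclass
class IntegrationRecord:
    """One row of a hypothetical integration-hub dataset."""
    name: str = ""
    integration_type: str = ""   # e.g. "native", "zapier", "api"
    auth_method: str = ""        # e.g. "oauth", "api_key"
    directionality: str = ""     # e.g. "push", "pull"
    supported_objects: list[str] = field(default_factory=list)
    setup_steps: list[str] = field(default_factory=list)
    failure_modes: list[str] = field(default_factory=list)
    docs_url: str = ""


def mvf_problems(record: IntegrationRecord) -> list[str]:
    """Return reasons the record is not ready to generate a page."""
    problems = [
        f"missing {f.name}" for f in fields(record) if not getattr(record, f.name)
    ]
    if record.setup_steps and not 3 <= len(record.setup_steps) <= 6:
        problems.append("setup_steps should have 3-6 entries")
    if record.failure_modes and not 2 <= len(record.failure_modes) <= 4:
        problems.append("failure_modes should have 2-4 entries")
    return problems
```

Records that return a non-empty list are candidates for noindex or for holding back from the batch until the data is complete.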

Example hub blueprint: “Compliance controls for {framework}”

A compliance SaaS might build programmatic hubs around control libraries.

- Hub: /controls/

- Sub-hubs: /controls/soc-2/, /controls/iso-27001/

- Leaf pages: /controls/soc-2/cc6-1-access-control/
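The URL pattern above can be generated from dataset fields rather than typed by hand. This is a rough sketch assuming a simple ASCII slug rule; `slugify` and `leaf_path` are hypothetical helpers, not part of any particular CMS.

```python
import re


def slugify(text: str) -> str:
    """Lowercase the text and collapse every non-alphanumeric run into one hyphen."""
    return re.sub(r"[^a-z0-9]+", "-", text.lower()).strip("-")


def leaf_path(framework: str, control_id: str, title: str) -> str:
    """Build a hub -> sub-hub -> leaf path under the /controls/ hub."""
    return f"/controls/{slugify(framework)}/{slugify(control_id + ' ' + title)}/"
```

For example, `leaf_path("SOC 2", "CC6.1", "Access Control")` produces the leaf path shown in the blueprint.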

Unique value slots could include:

- the control text,

- implementation guidance,

- evidence examples,

- mapped product features,

- and links to official standards.

For standards references, teams should link to primary sources like ISO or AICPA SOC where licensing allows.

Where teams get the dataset wrong

Common dataset failures show up later as SEO failures:

- “Scrape-first” data that can’t be kept fresh.

- Attributes that are subjective (“best for teams,” “easy to use”).

- Missing canonical entity IDs (no stable key → duplicates).

- No plan for deprecations (tools shut down, features change, pricing updates).

A clean dataset is not optional. It is the reason the hub stays accurate after month two.

Templates that avoid thin pages and still feel programmatic

A template is successful when Google can index it and a buyer can trust it.

Most template failures come from under-specifying modules. The result is a page with:

- a generic intro,

- a shallow feature list,

- and a CTA.

That pattern may get indexed, but it rarely earns links, citations, or conversions.

The “Module Stack” approach: repeatable structure with controlled uniqueness

A scalable programmatic hub template should be built as a stack of modules.

A typical stack for a niche SaaS hub leaf page:

- Direct answer block (40–80 words; defines what the page is about)

- Decision criteria table (structured attributes from the dataset)

- Use-case narrative (2–3 paragraphs with segment context)

- Setup/implementation steps (numbered, concrete)

- Limitations and edge cases (what breaks, what’s missing)

- Alternatives / comparisons (contextual internal links)

- Proof surfaces (docs links, changelog link, screenshots if allowed)

- Conversion module (CTA aligned to stage)

This makes the page extractable and reduces the chance of “template sameness.”
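A pre-publish check can confirm that every module in the stack is present and that the direct answer block stays within its word budget. The module keys and the function name below are assumptions made for illustration; the 40-80 word range comes from the stack above.

```python
# Hypothetical keys, one per module in the stack described above.
REQUIRED_MODULES = [
    "direct_answer", "criteria_table", "use_case", "setup_steps",
    "limitations", "alternatives", "proof", "conversion",
]


def module_stack_issues(page: dict[str, str]) -> list[str]:
    """List missing modules and flag a direct answer outside 40-80 words."""
    issues = [f"missing module: {m}" for m in REQUIRED_MODULES if not page.get(m)]
    answer = page.get("direct_answer", "")
    words = len(answer.split())
    if answer and not 40 <= words <= 80:
        issues.append(f"direct_answer has {words} words; expected 40-80")
    return issues
```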

Copy rules that keep pages unique without hand-writing everything

Teams usually need editorial constraints more than “more AI.”

Practical rules that scale:

- Every page must include 2–3 dataset-backed facts (auth method, supported objects, limits).

- Every page must include one “when not to use this” sentence.

- Every page must include 1–2 internal links up (hub/sub-hub) and 2–4 sideways (related leaf pages).
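The internal-link rule is easy to check mechanically once "up" and "sideways" are defined. The sketch below assumes an "up" link targets an ancestor path and a "sideways" link targets a sibling under the same sub-hub; both definitions and the function names are assumptions, so adjust them to the hub's own hierarchy.

```python
from pathlib import PurePosixPath


def link_counts(page_path: str, hrefs: list[str]) -> tuple[int, int]:
    """Count distinct links to ancestors (up) and to siblings (sideways)."""
    page = PurePosixPath(page_path)
    up = sideways = 0
    for href in set(hrefs):
        target = PurePosixPath(href)
        if target in page.parents and target != PurePosixPath("/"):
            up += 1
        elif target != page and target.parent == page.parent:
            sideways += 1
    return up, sideways


def meets_link_rules(page_path: str, hrefs: list[str]) -> bool:
    """Apply the 1-2 up and 2-4 sideways rule from the list above."""
    up, sideways = link_counts(page_path, hrefs)
    return 1 <= up <= 2 and 2 <= sideways <= 4
```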

For SaaS teams using AI in production workflows, consistency breaks when tools are fragmented; Skayle has covered this operational problem in its piece on fixing AI content workflows.

Structured data that supports extraction (without overdoing it)

Schema should be added when it matches the page.

Useful references:

- Schema.org for vocabulary

- Google Search structured data docs for eligibility guidance

Common schema types for programmatic hubs:

- BreadcrumbList (almost always)

- FAQPage (when the questions are real and page-specific)

- Product / SoftwareApplication (for SaaS product pages)

- HowTo (only when steps are truly procedural)

Schema should not be used to “fake” depth. It should reflect the page.
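As an illustration of schema that reflects the page, BreadcrumbList markup can be generated straight from the hub hierarchy. This is a sketch: the function name, the base URL, and the trail values are placeholders, while the `@type`, `itemListElement`, `position`, `name`, and `item` properties follow the Schema.org vocabulary.

```python
import json


def breadcrumb_jsonld(base_url: str, trail: list[tuple[str, str]]) -> str:
    """Build BreadcrumbList JSON-LD from (name, path) pairs ordered hub to leaf."""
    items = [
        {
            "@type": "ListItem",
            "position": position,
            "name": name,
            "item": base_url.rstrip("/") + path,
        }
        for position, (name, path) in enumerate(trail, start=1)
    ]
    document = {
        "@context": "https://schema.org",
        "@type": "BreadcrumbList",
        "itemListElement": items,
    }
    return json.dumps(document, indent=2)
```

The returned string is meant to be embedded in a `<script type="application/ld+json">` element on the page.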

Teams optimizing for AI Overviews should also treat schema as conversational, not just syntactic; the JSON-LD patterns in Skayle’s guide to conversational schema fixes are directly relevant to programmatic hubs.

Visual element to include

A clean diagram works better than screenshots in a hub guide. A useful visual for this article:

- A simple “hub → sub-hub → leaf” tree diagram with 2–3 lateral internal link paths.

It reinforces architecture without leaking product UI.

Crawl, indexation, and site controls that keep hubs healthy

Indexing is the silent bottleneck of programmatic hubs.

A team can generate 5,000 URLs in a week and still have 300 indexed two months later.

Don’t index everything: the controlled-indexing stance

The contrarian stance that holds up in practice: shipping more indexable pages faster is usually a mistake.

Instead:

- start with fewer pages,

- ensure they are internally connected,

- confirm they are being crawled,

- and only then expand.

This reduces index bloat and makes quality signals concentrate.

Technical checks that should be automated

A niche programmatic hub should have monitoring for:

- canonical correctness (each indexable page's canonical points to itself, or deliberately to the page it consolidates into)

- robots and meta robots (no accidental noindex)

- sitemap coverage and freshness

- faceted navigation rules (no crawl traps)

- duplicate pages caused by parameters
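Two of these checks, canonical correctness and accidental noindex, can be run against rendered HTML with the standard library alone. The sketch below assumes every audited page is meant to be indexable and self-canonical; `HeadSignals` and `audit_page` are hypothetical names, and fetching the HTML is left out.

```python
from html.parser import HTMLParser


class HeadSignals(HTMLParser):
    """Collect the canonical URL and the meta robots value from a page."""

    def __init__(self) -> None:
        super().__init__()
        self.canonical = None
        self.robots = ""

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        if tag == "link" and (attributes.get("rel") or "").lower() == "canonical":
            self.canonical = attributes.get("href")
        elif tag == "meta" and (attributes.get("name") or "").lower() == "robots":
            self.robots = (attributes.get("content") or "").lower()


def audit_page(url: str, html: str) -> list[str]:
    """Flag a missing or mismatched canonical and an unexpected noindex."""
    parser = HeadSignals()
    parser.feed(html)
    problems = []
    if parser.canonical is None:
        problems.append("missing canonical")
    elif parser.canonical != url:
        problems.append(f"canonical points elsewhere: {parser.canonical}")
    if "noindex" in parser.robots:
        problems.append("meta robots contains noindex")
    return problems
```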

Tools commonly used for this layer:

- Google Search Console for indexing signals

- Screaming Frog for crawl validation

- Ahrefs or Semrush for competitive SERP checks

Facets, filters, and crawl traps

Faceted navigation is where programmatic hubs often collapse.

If every filter combination creates a new indexable URL, the site can unintentionally produce millions of pages. That can dilute internal linking and waste crawl.

A safer pattern:

- Allow only curated facet combinations to be indexable.

- Keep all other filter states as non-indexable parameters.

- Provide static, internally linked “facet landing pages” that deserve ranking.

For example:

- Index: /integrations/crm/

- Index: /integrations/crm/salesforce/

- Do not index: /integrations?category=crm&sort=popular&billing=annual
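The pattern above amounts to an allowlist: a URL is indexable only if it has no query string and its path is on a curated list. The following sketch assumes the decision is expressed as a meta robots value; `CURATED_PATHS` and `robots_directive` are hypothetical, and some teams would handle parameter URLs with canonicals or robots.txt rules instead.

```python
from urllib.parse import urlsplit

# Hypothetical allowlist of facet landing pages that deserve to rank.
CURATED_PATHS = {"/integrations/crm/", "/integrations/crm/salesforce/"}


def robots_directive(url: str) -> str:
    """Return the meta robots value for a hub URL under the allowlist rule."""
    parts = urlsplit(url)
    if parts.query:
        # Any filter or sort state expressed as parameters stays out of the index.
        return "noindex, follow"
    if parts.path in CURATED_PATHS:
        return "index, follow"
    return "noindex, follow"
```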

Performance matters more on programmatic hubs

A hub page set amplifies any performance issue.

Use:

- PageSpeed Insights to spot render and TTFB issues

- Lighthouse for repeatable audits

If the hub stack is headless or framework-driven, the hosting layer and caching are part of SEO.

Common infrastructure choices worth linking for reference:

- Next.js for hybrid rendering

- Vercel for deployment

- Cloudflare for caching, WAF, and edge delivery

Teams working specifically on extraction reliability (for bots and AI systems) should treat rendering, canonicals, and schema as a single technical surface; Skayle’s overview on technical SEO for AI visibility aligns closely with hub-scale failure modes.

A release protocol that prevents indexing chaos

A practical batch approach:

- Ship 20–50 leaf pages.

- Ship the hub and sub-hub pages that link to them.

- Submit sitemap and inspect a sample in Search Console.

- Confirm server logs show consistent Googlebot fetches.

- Expand by another batch only after coverage stabilizes.

This is slower than “publish everything,” but it prevents months of cleanup.
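The batch protocol can be modeled as a generator that releases the next batch only after a coverage gate passes for the previous one. This is a sketch under simplifying assumptions: `coverage_ok` is a hypothetical callback standing in for whatever Search Console and log checks the team actually runs, and in practice that check takes days or weeks, not a function call.

```python
from typing import Callable, Iterator


def release_batches(
    urls: list[str],
    batch_size: int,
    coverage_ok: Callable[[list[str]], bool],
) -> Iterator[list[str]]:
    """Yield URL batches, stopping as soon as the gate fails for a shipped batch."""
    for start in range(0, len(urls), batch_size):
        batch = urls[start:start + batch_size]
        yield batch  # the caller ships this batch before asking for the next one
        if not coverage_ok(batch):
            return  # hold further releases until coverage stabilizes
```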

Conversion design for programmatic hubs (traffic is not the goal)

Programmatic hubs often attract top-of-funnel queries, but the site still needs to create pipeline.

Conversion design on hub pages is mostly about intent alignment and reducing cognitive load.

Treat hub pages like category pages, not blog indexes

High-performing hubs often behave like ecommerce category pages:

- clear sorting and grouping,

- summary of what the category is,

- comparison helpers,

- and obvious next steps.

They are not just “lists of links.”

A hub page should include:

- a 60–120 word definition (what this category is, who it’s for),

- a “how to choose” section with 4–6 criteria,

- and a curated set of links to sub-hubs.

CTAs that match the stage

A leaf page targeting “integration with {tool}” may not deserve “Book a demo” as the only CTA.

Better options include:

- “See supported objects” (deep link to a table)

- “View setup steps” (jump link)

- “Talk to an engineer” (if the query implies complexity)

- “Get the checklist” (for compliance/ops)

The key is to build micro-conversions that lead toward a demo, not force a demo ask on every page.

Measurement plan (a proof-shaped pilot without invented results)

A credible way to validate conversion impact is to run an instrumented pilot.

- Baseline: current organic sessions and conversion rate for the target topic cluster (GA4), and indexed pages for the hub path (Search Console).

- Intervention: ship 30 leaf pages + 3 sub-hubs with the full module stack, plus internal links from existing relevant pages.

- Expected outcome: higher indexation rate for the new path, improved engagement on leaf pages (scroll depth / key events), and measurable assisted conversions from organic landings.

- Timeframe: 4–6 weeks for initial crawl + early conversion signals; 8–12 weeks for ranking stabilization.

Instrumentation references:

- Google Analytics 4 for events and conversions

- Google Tag Manager for deployment control

This is “proof” in the sense that it is auditable: the metrics, tools, and time windows are explicit.

The midstream checklist: what to verify before scaling from 50 pages to 500

Scaling programmatic hubs should have a gate. Below is a checklist used by teams that avoid index bloat and conversion dead-ends.

- Confirm 80–90% of the first batch is indexed (Search Console Page indexing report).

- Validate canonicals and self-references on a crawl sample (Screaming Frog).

- Verify the hub → sub-hub → leaf internal link graph is intact (no orphan pages).

- Ensure each template has at least 3 unique-value slots filled from data.

- Check that pages load consistently under mobile conditions (PageSpeed).

- Confirm breadcrumbs render and match the URL hierarchy.

- Verify at least one conversion path exists that is not “Book a demo.”

- Confirm query coverage: each page maps to a distinct intent, not a synonym.

- Add FAQ blocks only where questions are genuinely page-specific.

- Set up a refresh trigger: what changes in the dataset force a re-render?

If these checks fail, scaling multiplies the problem.
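Several of the checklist items reduce to numbers that can gate a release automatically. The sketch below covers four of them; the function name, its parameters, and the default 80% threshold (the lower bound of the range above) are assumptions, and the inputs would come from the team's own crawl and Search Console exports.

```python
def scale_blockers(
    indexed: int,
    shipped: int,
    orphan_pages: int,
    min_slots_filled: int,
    has_non_demo_cta: bool,
    index_threshold: float = 0.8,
) -> list[str]:
    """Return the reasons not to scale yet; an empty list means the gate passes."""
    if shipped <= 0:
        raise ValueError("shipped must be positive")
    blockers = []
    rate = indexed / shipped
    if rate < index_threshold:
        blockers.append(f"indexation rate {rate:.0%} is below {index_threshold:.0%}")
    if orphan_pages:
        blockers.append(f"{orphan_pages} orphan page(s) in the link graph")
    if min_slots_filled < 3:
        blockers.append("a template has fewer than 3 unique-value slots filled")
    if not has_non_demo_cta:
        blockers.append("no conversion path other than the demo CTA")
    return blockers
```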

AI visibility: designing hubs that get cited and clicked

AI systems cite sources that are easy to extract and hard to misquote.

Programmatic hubs can win here because they are structured, consistent, and entity-rich—if templates are built correctly.

What makes a programmatic page cite-worthy

Three practical traits:

- Explicit definitions: short, direct answers near the top.

- Structured comparisons: tables with stable attributes.

- Source grounding: links to primary documentation or standards.

That last point is frequently missed. AI systems have to decide what to trust; pages that reference official docs are easier to treat as credible.

When possible, link out to:

- vendor docs (e.g., Stripe Docs for payments-related topics)

- APIs (e.g., Salesforce Developers for integration claims)

- standards bodies (ISO, IETF)

Entity consistency: the unglamorous citation lever

If the same tool is named five ways across the hub, extraction gets messy.

A practical approach:

- maintain a canonical entity list (name, URL, aliases),

- enforce it in the CMS,

- and ensure internal linking uses the canonical form.

This is also where structured data helps: consistent entity mentions make it easier for systems to connect the page to the real-world thing.
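A canonical entity list can be enforced with a small alias index that maps every known spelling to one name. The entry below is illustrative only: the aliases, the URL, and the helper names are assumptions, not a real catalog.

```python
# Hypothetical canonical entity list: name -> URL and known aliases.
CANONICAL_ENTITIES = {
    "QuickBooks Online": {
        "url": "/integrations/quickbooks-online/",
        "aliases": ["QuickBooks", "QBO"],
    },
}


def build_alias_index(entities: dict[str, dict]) -> dict[str, str]:
    """Map each case-folded name or alias to its canonical entity name."""
    index: dict[str, str] = {}
    for name, meta in entities.items():
        for label in [name, *meta["aliases"]]:
            key = label.casefold()
            if key in index and index[key] != name:
                raise ValueError(f"alias {label!r} maps to two entities")
            index[key] = name
    return index


def canonical_name(label: str, index: dict[str, str]):
    """Return the canonical name for a label, or None if it is unknown."""
    return index.get(label.strip().casefold())
```

Unknown labels return `None`, which is a useful signal that a writer or a data import introduced a new spelling.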

Monitoring AI citations without guessing

AI visibility needs measurement, not anecdotes.

Teams typically need:

- prompt sets per hub (10–50 prompts that match real buyer language),

- checks for whether the brand is cited,

- and a workflow for fixing pages that should have been cited.
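Once a prompt set has been run and the cited URLs recorded, the citation rate and the list of gaps are simple to compute. This sketch assumes the results are already collected as a mapping from prompt to cited URLs; how those URLs are gathered is outside its scope, and the function names are hypothetical.

```python
from urllib.parse import urlsplit


def is_cited(brand_domain: str, cited_urls: list[str]) -> bool:
    """True if any cited URL is on the brand domain or one of its subdomains."""
    for url in cited_urls:
        host = (urlsplit(url).hostname or "").lower()
        if host == brand_domain or host.endswith("." + brand_domain):
            return True
    return False


def citation_gaps(
    brand_domain: str, results: dict[str, list[str]]
) -> tuple[float, list[str]]:
    """Return (citation rate, prompts where the brand was not cited)."""
    gaps = [p for p, urls in results.items() if not is_cited(brand_domain, urls)]
    rate = 1 - len(gaps) / len(results) if results else 0.0
    return rate, gaps
```

The gap list is the input to the fix workflow: each uncited prompt maps back to the hub page that should have answered it.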

Skayle’s guides on AI Overviews optimization and answer-ready AEO systems map well to this operational layer, especially when hubs are meant to influence comparisons.

Failure modes that quietly kill programmatic hubs

Most programmatic hub failures look like “SEO didn’t work.” The root cause is usually structural.

Duplicate intent disguised as scale

If 200 pages answer the same question with minor noun swaps, ranking and citation signals split.

Fix:

- merge synonyms into one canonical page,

- use internal links and anchors to support variants,

- and reserve new pages for meaningfully different constraints.

Orphan pages and weak hubs

Leaf pages without strong hub connections are harder to crawl and harder to interpret.

Fix:

- ensure every leaf links up to its hub and sub-hub,

- add lateral linking modules (“Related integrations,” “Related controls”),

- and build hub copy that summarizes the cluster.
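Orphans can be detected by walking the internal link graph from the hub and listing every page that is never reached. The sketch below uses a breadth-first traversal; the `links` mapping (page to outgoing internal links) is assumed to come from a crawl export.

```python
from collections import deque


def find_orphans(hub: str, links: dict[str, list[str]], all_pages: set[str]) -> set[str]:
    """Return pages in all_pages that cannot be reached by following links from the hub."""
    seen = {hub}
    queue = deque([hub])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target in all_pages and target not in seen:
                seen.add(target)
                queue.append(target)
    return all_pages - seen
```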

Thin “AI-written” copy that never becomes a source

Pages that read like generic blog intros rarely get cited.

Fix:

- use direct answer blocks,

- add tables of real attributes,

- include limitations,

- and link to primary sources.

Refresh debt: the hidden tax

Programmatic hubs are not “set and forget.”

A dataset that changes (features, pricing, docs URLs) creates accuracy issues. Accuracy issues reduce trust, which reduces conversions and citations.

Teams should plan refresh cycles; Skayle’s playbook on content refresh systems is relevant because hub maintenance is the same operational problem, just multiplied.
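An event-driven refresh can be triggered by fingerprinting each dataset row and comparing it with the fingerprint stored when the page was last rendered. This is a sketch assuming rows are JSON-serializable dictionaries keyed by a stable entity ID; both function names are hypothetical.

```python
import hashlib
import json


def row_fingerprint(row: dict) -> str:
    """Hash a dataset row in a key-order-independent way."""
    payload = json.dumps(row, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def pages_to_rerender(current: dict[str, dict], published: dict[str, str]) -> list[str]:
    """Return entity IDs whose data is new or changed since the last render."""
    return sorted(
        key for key, row in current.items()
        if published.get(key) != row_fingerprint(row)
    )
```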

Analytics that can’t answer basic questions

If teams can’t answer “Which hub paths create pipeline?” the hub becomes a vanity traffic project.

Fix:

- ensure hub paths are grouped as content groups in GA4,

- track assisted conversions,

- and report by template type (hub vs sub-hub vs leaf).
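Reporting by template type needs a rule that classifies each landing path. The sketch below assumes path depth under the hub root determines the type, which holds for the hub → sub-hub → leaf layout described here; the hub root and function names are placeholders, and the rows stand in for an analytics export.

```python
from collections import Counter


def template_type(path: str, hub_root: str = "/integrations/") -> str:
    """Classify a path as hub, sub-hub, leaf, or other by depth under the hub root."""
    if not path.startswith(hub_root):
        return "other"
    depth = len([segment for segment in path[len(hub_root):].split("/") if segment])
    return {0: "hub", 1: "sub-hub"}.get(depth, "leaf")


def conversions_by_template(rows: list[tuple[str, int]]) -> Counter:
    """Sum conversions per template type from (landing path, conversions) rows."""
    totals: Counter = Counter()
    for path, conversions in rows:
        totals[template_type(path)] += conversions
    return totals
```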

For teams with data warehouses, BigQuery and Looker Studio are common choices for combining Search Console exports with conversion data.

FAQ: scaling niche programmatic hubs without losing quality

How many pages should a niche programmatic hub launch with?

A controlled first batch of 20–50 leaf pages plus the hub/sub-hub structure is usually enough to validate indexing, internal linking, and conversion paths. Scaling should follow after coverage stabilizes and template uniqueness is proven.

Are programmatic hubs the same as programmatic SEO?

Programmatic SEO is the broader approach: using templates and datasets to create many search-targeted pages. Programmatic hubs are a specific architecture within that approach, organized around hub and sub-hub pages that consolidate authority and guide crawlers and users.

When should pages be noindex in a programmatic hub?

Pages should be noindex when they are low-intent, duplicate, parameter-generated, or thin by necessity (for example, incomplete dataset entries). Indexation should be reserved for curated, internally supported pages that deserve to rank.

What schema matters most for programmatic hubs?

BreadcrumbList is consistently useful because it clarifies hierarchy and supports navigation extraction. FAQPage can help when questions are truly page-specific, and Product/SoftwareApplication may fit when the page is describing a SaaS product in a structured way.

How do programmatic hubs influence AI answers and citations?

AI systems are more likely to cite pages that define terms clearly, use structured comparisons, and reference primary sources. Programmatic hubs help when templates enforce those patterns across many niche pages, creating consistent, extractable answers.

How should SaaS teams measure success beyond rankings?

Rankings and organic sessions are necessary but incomplete. Teams should also measure indexation rate by hub path, engagement on leaf pages (scroll/event completion), assisted conversions from organic landings, and whether key prompts produce citations.

Do programmatic hubs work without backlinks?

They can index and rank for low-competition terms without a dedicated link strategy, but durable performance is harder. Hubs that earn citations and links typically include unique assets (checklists, templates, mappings, benchmarks) that people can reference.

What’s the biggest risk when scaling to thousands of pages?

Index bloat and intent duplication. Both reduce authority density and make the site harder to crawl, harder to interpret, and less trustworthy as a citation source.

What’s a practical refresh cadence for programmatic hubs?

Refresh should be event-driven (dataset changes) plus periodic (quarterly) reviews of top landing pages for accuracy and conversion performance. High-velocity categories like integrations often need more frequent checks than stable categories like definitions.

If the goal is to scale programmatic hubs while staying indexable, cite-worthy, and conversion-safe, Skayle can help teams measure how they appear in AI answers and connect that visibility to what gets built and refreshed next—start by reviewing your current citation coverage and hub architecture.