TL;DR

To earn LLM citations from your documentation, design for extractability and verifiability. Use answer blocks, deep topic-specific pages, tables and FAQs, and a refresh cadence that keeps critical docs aligned with product reality.

The fastest way to lose AI visibility is to treat your documentation like a dumping ground for feature notes. I’ve watched strong products get ignored in AI answers because the docs were impossible to cite—even when the information was technically “there.”

If you want LLM citations, you don’t need more content. You need documentation that an AI system can confidently extract, verify, and point to.

Why your documentation isn’t getting cited (even if it’s accurate)

LLMs don’t “reward” good intentions. They reward content that is easy to retrieve, easy to verify, and hard to misunderstand.

Here’s the problem I see most often: teams write docs for humans who are already convinced. But AI answers are usually built for humans who are comparing, evaluating, or trying to complete a task fast.

A citation-ready knowledge base is documentation written and structured so an LLM can extract a specific answer, verify it against a stable source, and cite the exact URL that proves it.

The hidden failure mode: the model can’t point to your proof

Citation failures aren’t just an “AI is hallucinating” issue. They’re often a knowledge base issue.

Research on citation failure in LLM systems breaks down how citations can fail when retrieval pulls weak passages, when the model’s attention drifts, or when the system can’t confidently align an answer to evidence (especially in RAG-style setups) arXiv.

That matters because your documentation is effectively “training data” for how the internet explains your product. If it’s not citeable, you’re invisible in the new funnel:

impression → AI answer inclusion → citation → click → conversion

Point of view: stop writing docs like blog posts

Most “LLM-friendly” advice gets interpreted as “write more content in a cleaner tone.” That’s the wrong center of gravity.

Don’t optimize for writing style first. Optimize for extractability and verifiability first. Then worry about tone.

If you want a deeper look at how to measure where you’re missing citations (before you rewrite half your docs), Skayle has a practical workflow for finding citation gaps and turning them into a prioritized backlog.

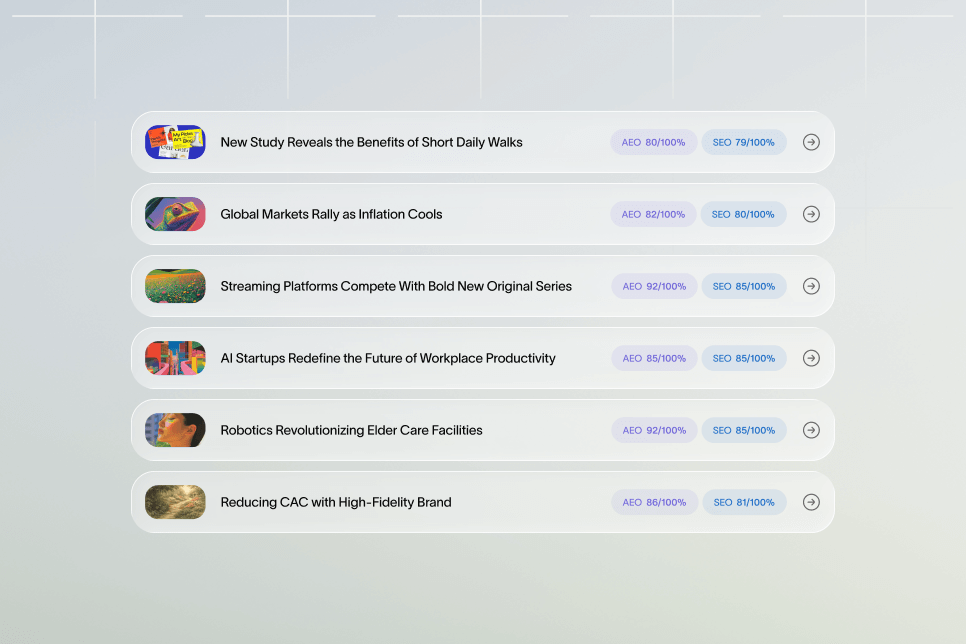

What the data suggests AI engines like to cite

We don’t get perfect transparency from AI answer engines. But we do have useful directional evidence.

Onely’s analysis is blunt:

- Listicles account for 50% of top AI citations.

- Content with tables gets cited 2.5× more often than unstructured content.

- Long-form content over 2,000 words gets cited 3× more than short posts.

- 82.5% of AI citations point to nested, topic-specific pages rather than homepages.

Those are strong clues about what “citation-ready” looks like in practice Onely.

The Citation-Ready Knowledge Base Model (4 parts you can actually ship)

When teams ask me where to start, I give them a simple model that keeps the rebuild grounded.

The Citation-Ready Knowledge Base Model: Scope → Answer Blocks → Deep Pages → Maintenance.

It’s not fancy. It’s shippable.

1) Scope: decide what you want to be cited for

If you try to be cited for everything, you’ll be cited for nothing.

You need a short list of “citation targets,” like:

- “How does {Your Product} handle SSO / SOC 2 / data retention?”

- “How do I integrate {Your Product} with X?”

- “Best way to do {job-to-be-done} with {Your category} software”

- “{Your Product} vs {competitor}: pricing / limitations / migration steps”

This is also where you pick the intent you want:

- Problem exploration (educational)

- Tool evaluation (comparison)

- Implementation (how-to)

- Troubleshooting (support)

2) Answer Blocks: make extraction trivial

If an AI system has to “compose” your answer from five paragraphs and two side notes, it’s less likely to cite you.

You want pages that start with:

- a direct 40–60 word answer (so it’s easy to lift)

- a short numbered process

- a table or decision grid

- an FAQ block

This “answer-first” pattern is explicitly recommended in LLM citation formatting guidance Onely, and it matches what other practitioners report when optimizing content for AI extraction Eflot.

3) Deep Pages: stop betting on one mega “Documentation” section

Here’s the contrarian stance that usually saves teams months:

Don’t build one giant knowledge base hub and hope AI finds the right paragraph. Build many deep, topic-specific pages that each answer one job extremely well.

Onely’s note that 82.5% of AI citations go to deep pages, not top-level pages, is the cleanest argument for this approach Onely.

This also aligns with how retrieval works in RAG systems: the chunk that gets retrieved needs to be tightly aligned with a specific question, and the URL needs to be stable and unambiguous OpenAI RAG guide.

4) Maintenance: freshness isn’t optional

A knowledge base that never gets updated is a citation liability.

Wellows calls out freshness as a major influence on citation selection—recently updated pages get cited more often in practice Wellows.

Even if you don’t fully buy that as a universal rule, it’s operationally true: your product changes, and old docs create contradictory evidence. Contradictory evidence makes systems less confident, which makes citations less likely.

If you want this to compound, you need a refresh loop. Skayle’s approach to content refresh strategy maps well to documentation too: you’re not rewriting everything, you’re keeping the pages that matter “current enough to trust.”

Step 1: Restructure pages so an LLM can lift the answer cleanly

Before you change architecture, fix the smallest unit: a single doc page.

Pick 10–20 pages that already get traffic or that answer recurring support tickets and sales questions. You’ll usually find the same issues:

- page starts with product marketing instead of a direct answer

- key constraints are buried (“only on Enterprise plan”)

- setup steps are split across multiple pages with weak links

- examples are missing, so the model can’t ground the answer

Use a repeatable “answer block” template

I like a template that forces clarity. Here’s a version that works for both humans and AI systems:

- Direct answer (40–80 words).

- When to use this (3 bullets).

- How to do it (5–9 numbered steps).

- Edge cases / limitations (bullets).

- Example config / sample payload (if relevant).

- FAQ (4–6 questions).

This format is consistent with common recommendations for improving citation reliability via structured patterns like lists and FAQs HyperMind AI.
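If your docs live in Markdown, you can check the template mechanically before review. The sketch below is one way to do it, under stated assumptions: sections are level-2 headings named after the template parts, the text before the first level-2 heading is the direct answer, and the 40–80 word bound comes from the template above. The function name `check_answer_block` and the section names are illustrative, not a standard.

```python
import re

# Assumed section names, taken from the template above. Adjust to your docs.
REQUIRED_SECTIONS = [
    "When to use this",
    "How to do it",
    "Edge cases / limitations",
    "FAQ",
]


def check_answer_block(markdown: str, min_words: int = 40, max_words: int = 80) -> list[str]:
    """Return a list of problems; an empty list means the page passes."""
    problems = []
    # Split at level-2 headings: [intro, heading1, body1, heading2, body2, ...]
    parts = re.split(r"^## +(.+)$", markdown, flags=re.MULTILINE)
    # Ignore the page title (a level-1 heading) when counting answer words.
    intro_lines = [line for line in parts[0].splitlines() if not line.startswith("# ")]
    word_count = len(" ".join(intro_lines).split())
    if not min_words <= word_count <= max_words:
        problems.append(
            f"direct answer is {word_count} words, expected {min_words}-{max_words}"
        )
    headings = {heading.strip().lower() for heading in parts[1::2]}
    for section in REQUIRED_SECTIONS:
        if section.lower() not in headings:
            problems.append(f"missing section: {section}")
    return problems
```

A check like this only confirms the structure is present. It says nothing about whether the answer is correct, so it complements human review rather than replacing it.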

Add tables where people make decisions

Tables aren’t just for aesthetics. They compress a decision into a citeable unit.

If you have any of these, they should be tables:

- plan limits

- feature availability

- role permissions

- supported integrations

- retention windows

- rate limits

Onely reports that pages with tables are cited 2.5× more often than unstructured content Onely.
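Tables are easier to keep accurate when they are generated from one source of truth instead of hand-edited. Here is a minimal sketch, assuming your docs accept Markdown pipe tables. The plan names and feature values are hypothetical placeholders, not real product data.

```python
def to_markdown_table(headers: list[str], rows: list[list[str]]) -> str:
    """Render rows as a Markdown pipe table; every row must match the header width."""
    for row in rows:
        if len(row) != len(headers):
            raise ValueError(f"expected {len(headers)} cells, got {len(row)}: {row}")
    lines = [
        "| " + " | ".join(headers) + " |",
        "| " + " | ".join("---" for _ in headers) + " |",
    ]
    lines += ["| " + " | ".join(row) + " |" for row in rows]
    return "\n".join(lines)


# Hypothetical feature-availability data for illustration only.
AUTH_BY_PLAN = [
    ["SAML SSO", "No", "Yes"],
    ["OAuth login", "Yes", "Yes"],
    ["Password login", "Yes", "Yes"],
]

table = to_markdown_table(["Feature", "Starter", "Enterprise"], AUTH_BY_PLAN)
print(table)
```

Each row states one fact per cell, so a single row can be quoted without the rest of the page.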

Write like you expect someone to quote you

This is subtle, but it’s a real unlock. You want sentences that can survive being ripped out of context.

Bad:

- “We support many authentication options depending on your plan.”

Better:

- “We support SAML SSO on the Enterprise plan; OAuth and password login are available on all plans.”

That kind of phrasing is both human-clear and machine-clear.

Troubleshooting: when your “direct answer” creates confusion

If you add a 60-word answer block and your support team starts pinging you, it’s usually because you:

- stated a rule without listing exceptions

- skipped prerequisites (permissions, plan level, required settings)

- used internal terms (“workspace,” “tenant,” “project”) without defining them once

Fix it by adding a short “Requirements” subsection right before the steps.

Step 2: Re-architect docs into deep, linked clusters (so citations land on the right URL)

Once pages are individually extractable, you need an architecture that makes retrieval and crawling predictable.

Think in clusters, not folders.

A folder like /docs/security/ is helpful for humans. But for AI citations, you care about whether each page is:

- focused on one intent

- internally linked to related pages

- stable in URL structure

- not competing with three near-duplicates

If you’ve built SEO topic clusters before, this will feel familiar. The difference is you’re designing for both crawlers and context windows. Skayle breaks down that dual-purpose hub design in its guide to topic cluster architecture.

The 10-point checklist I use before approving a doc cluster

Use this as a rebuild checklist for any “area” of your knowledge base (security, integrations, workflows, billing, etc.).

- Each page answers one primary question.

- The URL is human-readable and stable.

- The first section contains a direct answer.

- The page includes a numbered process.

- The page includes at least one table when decisions are involved.

- Every page links to 2–5 closely related pages.

- No two pages target the same question with different wording.

- Limitations and plan gates are explicit.

- An FAQ block covers edge cases and “what about…” objections.

- The page has a clear next step for the reader (not a hard sell).

That last point matters for conversion. A citation that generates a click is wasted if the page dead-ends.
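A few of the checklist items are mechanical enough to lint. The sketch below covers three of them (numbered process, table, 2–5 related links) for Markdown pages. It assumes internal docs links start with `/docs/`; that prefix and the function name `check_cluster_page` are assumptions for illustration. The remaining items, such as duplicate intent and explicit plan gates, still need a human.

```python
import re


def check_cluster_page(markdown: str, docs_prefix: str = "/docs/") -> dict[str, bool]:
    """Check three checklist items that can be detected from Markdown alone."""
    # Collect link targets from inline Markdown links: [text](target)
    targets = re.findall(r"\[[^\]]*\]\(([^)\s]+)\)", markdown)
    # Count distinct internal pages, ignoring #anchors within the same page.
    internal_pages = {t.split("#")[0] for t in targets if t.startswith(docs_prefix)}
    return {
        # A line starting with "1. ", "2. ", and so on.
        "has_numbered_process": bool(re.search(r"^\d+\. ", markdown, flags=re.MULTILINE)),
        # A line that starts and ends with a pipe, as in a Markdown table row.
        "has_table": bool(re.search(r"^\|.*\|\s*$", markdown, flags=re.MULTILINE)),
        "links_to_2_to_5_related_pages": 2 <= len(internal_pages) <= 5,
    }
```

Running this across a cluster gives you a quick pass/fail grid to review before sign-off.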

Design the click path like a product surface, not a PDF

If you want citation → click → conversion, you need “conversion-safe” docs.

That doesn’t mean slapping demo banners everywhere. It means:

- context-aware CTAs (“See example setup,” “Compare plans,” “Talk to support”) instead of “Book a demo” on every page

- persistent navigation that keeps readers in the cluster

- a short “next best page” section at the bottom

This is where structured internal linking becomes a growth lever. If you’re rebuilding linking at scale, Skayle’s write-up on internal linking for clusters will save you from the usual mess (or the usual over-engineering).

Avoid duplicate intent: it kills citations

Duplicate intent looks like:

- “API Authentication”

- “How to Authenticate to the API”

- “API Keys Guide”

All three might be “good” pages. Together they’re a liability.

Models and search engines see competing evidence. Retrieval pulls different passages across different URLs. Confidence drops. Citations drop.

Pick one canonical page for the intent, then use sections (and anchored links) for subtopics.
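A crude way to surface candidates for consolidation is to compare page titles. The sketch below uses token overlap (Jaccard similarity) with a small stopword list and a six-letter prefix as a stand-in for stemming. All of those choices, including the 0.5 threshold, are assumptions you would tune. This is a heuristic for finding pages to review, not a model of how any engine judges duplicates.

```python
from itertools import combinations

# Small illustrative stopword list; extend it for your own titles.
STOPWORDS = {"how", "to", "the", "a", "an", "guide", "for", "of"}


def title_tokens(title: str) -> set[str]:
    """Lowercase, drop stopwords, and keep a 6-letter prefix of each word so
    'authentication' and 'authenticate' collapse to the same token."""
    return {word[:6] for word in title.lower().split() if word not in STOPWORDS}


def jaccard(a: set[str], b: set[str]) -> float:
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def likely_duplicates(titles: list[str], threshold: float = 0.5) -> list[tuple[str, str, float]]:
    """Return title pairs whose token overlap meets the threshold."""
    pairs = []
    for left, right in combinations(titles, 2):
        score = jaccard(title_tokens(left), title_tokens(right))
        if score >= threshold:
            pairs.append((left, right, round(score, 2)))
    return pairs


titles = ["API Authentication", "How to Authenticate to the API", "API Keys Guide"]
print(likely_duplicates(titles))
```

With these three titles, the first two are flagged at the default threshold, but “API Keys Guide” is not, even though it competes for the same intent. Title matching catches the obvious cases; overlapping content with different wording still needs a manual pass or a comparison of the page bodies.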

Step 3: Add proof, freshness, and citation-grade structure signals

This is the step most teams skip because it feels “extra.” It’s not extra. It’s the difference between being mentioned and being cited.

Put verifiable proof on the page (not just claims)

A knowledge base earns trust when it includes evidence you can check.

In SaaS docs, “proof” usually looks like:

- explicit constraints (rate limits, supported regions, encryption at rest/in transit)

- configuration examples

- clearly defined terms

- version history (“Updated for vX behavior”)

If your page can’t be validated, it’s harder to cite.

This isn’t just an SEO thing. Citation best practices in academic contexts also emphasize verifiability and attribution—if readers can’t validate a statement, the citation is weaker by definition Jenni AI.

Build pages that are long enough to be complete (without becoming a junk drawer)

I don’t think “long-form” is inherently better. But completeness matters.

Onely’s dataset suggests content over 2,000 words gets cited 3× more than short posts Onely. In docs, that usually translates to:

- covering prerequisites

- covering the happy path setup

- covering the top 5 edge cases

- including examples

- including troubleshooting

If your page is 400 words because it punts everything to other pages, it’s probably not citeable.

Add an FAQ block that matches how people ask questions

FAQs aren’t just for SEO. They’re a way to “pre-chunk” your content into question-answer pairs.

You want questions like:

- “Does this work on the Starter plan?”

- “What happens if I rotate the key?”

- “Can I do this without admin permissions?”

Wellows explicitly recommends using structured elements like FAQs and lists because they match LLM training patterns and improve citation likelihood Wellows.

Use schema where it makes your content easier to interpret

A lot of teams treat schema as “SEO garnish.” For AI citations, schema is closer to a reliability signal: it makes entities and relationships explicit.

Onely reports that adding schema markup can increase appearances in AI recommendations by 3–5× (their phrasing, their dataset) Onely.

If you want the practical version of this for modern AI visibility, Skayle’s structured data blueprint is the right starting point.
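For FAQ blocks, the schema.org `FAQPage` type is the usual fit. Here is a minimal sketch that builds the JSON-LD from question-answer pairs. The helper name `faq_jsonld` and the sample answer text are hypothetical. Whether a given AI engine reads this markup is not something the sources above guarantee, so treat it as making the structure explicit rather than as a ranking lever.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Build schema.org FAQPage JSON-LD from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2)


# Hypothetical answer text for illustration only.
print(faq_jsonld([
    ("Does this work on the Starter plan?",
     "Yes. OAuth and password login are available on all plans."),
]))
```

The output goes inside a `<script type="application/ld+json">` tag on the page. Generate it from the same source as the visible FAQ so the two never disagree.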

If you’re using RAG internally, align your docs to how citations work

If your product includes an AI assistant or you’re building internal support automation, you’ll run into the same citation constraints.

OpenAI’s RAG guidance emphasizes preparing your knowledge base so retrieval returns the right chunks consistently OpenAI RAG guide. Anthropic’s documentation explains how citations can be attached to responses when source material is provided in the right format Anthropic citations.

One practical takeaway: design your docs so a single chunk contains a complete answer with minimal dependencies.
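One way to get there is to chunk by section rather than by fixed character count, so every chunk carries its own heading and a URL that points at the exact section. The sketch below assumes Markdown pages with level-2 headings and anchor slugs made by lowercasing and hyphenating the heading. Your docs generator may build anchors differently, so treat `slugify` as a placeholder.

```python
import re
from dataclasses import dataclass


@dataclass
class Chunk:
    url: str      # page URL, plus a section anchor when there is one
    heading: str
    text: str     # heading and body together, so the chunk reads on its own


def slugify(heading: str) -> str:
    """Assumed anchor format: lowercase, non-alphanumerics collapsed to hyphens."""
    return re.sub(r"[^a-z0-9]+", "-", heading.lower()).strip("-")


def chunk_by_heading(page_url: str, markdown: str) -> list[Chunk]:
    """Split a page into one chunk per level-2 section, plus the page intro."""
    # parts = [intro, heading1, body1, heading2, body2, ...]
    parts = re.split(r"^## +(.+)$", markdown, flags=re.MULTILINE)
    chunks = []
    intro = parts[0].strip()
    if intro:
        # The intro holds the direct answer, so it is kept as its own chunk.
        chunks.append(Chunk(url=page_url, heading="(page intro)", text=intro))
    for heading, body in zip(parts[1::2], parts[2::2]):
        heading, body = heading.strip(), body.strip()
        if not body:
            continue  # skip empty sections
        chunks.append(
            Chunk(url=f"{page_url}#{slugify(heading)}", heading=heading, text=f"{heading}\n\n{body}")
        )
    return chunks
```

If a section only makes sense after reading the one before it, that shows up quickly when you read the chunks in isolation, which is a useful editing test even if you never build a RAG system.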

Proof block (use this pattern when you report outcomes internally)

If you want this project funded, you need a simple proof format your team can repeat.

- Baseline: Pick 25 high-intent prompts where you want LLM citations. Record whether you’re cited today and which URL is cited (if any).

- Intervention: Rewrite 10 pages using the answer block template, add tables/FAQs, and fix the internal linking so each page has a clean cluster.

- Outcome (what you measure): citation count, citation URL quality (deep page vs generic), and assisted conversions from doc visits.

- Timeframe: evaluate after recrawl/reindex cycles (often a few weeks, but it varies by site and change volume).

This isn’t a promise of results. It’s a measurement plan that makes the outcome undeniable.
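The baseline and outcome numbers can be computed the same way each time from a simple log of prompt checks. The sketch below assumes you record, for each tracked prompt, which of your URLs was cited (or none). The `PromptResult` record and the rule that a “deep” page has at least two path segments are illustrative assumptions, and the URLs are placeholders.

```python
from dataclasses import dataclass
from typing import Optional
from urllib.parse import urlparse


@dataclass
class PromptResult:
    prompt: str
    cited_url: Optional[str]  # None when none of your pages was cited


def is_deep_page(url: str) -> bool:
    """Assumption: a deep page has two or more path segments, e.g. /docs/sso-setup."""
    segments = [s for s in urlparse(url).path.split("/") if s]
    return len(segments) >= 2


def summarize(results: list[PromptResult]) -> dict[str, float]:
    """Share of prompts that cite you, and share of those citations on deep pages."""
    cited = [r for r in results if r.cited_url]
    deep = [r for r in cited if is_deep_page(r.cited_url)]
    return {
        "citation_coverage": len(cited) / len(results) if results else 0.0,
        "deep_page_share": len(deep) / len(cited) if cited else 0.0,
    }


baseline = [
    PromptResult("How do I set up SSO?", "https://example.com/docs/sso-setup"),
    PromptResult("What are the rate limits?", "https://example.com/"),
    PromptResult("How do I rotate an API key?", None),
    PromptResult("Which plans include SAML?", None),
]
print(summarize(baseline))
```

Run the same summary on the same prompts after the rewrite and report both sets of numbers side by side.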

Step 4: Ship, measure, and refresh so citations compound

Most knowledge bases fail for one boring reason: nobody owns them after launch.

A citation-ready knowledge base is an ownership and process problem, not a writing problem.

Pick KPIs that map to the AI funnel

Track metrics that match how citations create value:

- Citation coverage: how many of your tracked prompts cite you (and on which URLs)

- Citation quality: are citations pointing to deep pages that actually answer the question?

- Click-through behavior: do users land and continue into the cluster?

- Conversion assist: do doc visitors later convert (trial, demo, activation, or whatever your business uses)?

If you don’t track citation coverage, you can’t prioritize refreshes. Skayle has a concrete approach to AI citation coverage that turns “we think we’re showing up” into a measurable gap.

Build a refresh cadence that matches product change

You don’t need weekly updates across the whole docs site. You need three clear rules:

- Update pages whenever product behavior changes.

- Review the top citation-target pages on a fixed cadence (monthly or quarterly).

- Retire or redirect duplicates aggressively.

Wellows’ point on freshness isn’t just about dates—it’s about keeping the content aligned with reality Wellows.
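The fixed-cadence review is easy to automate as a reminder. Here is a minimal sketch, assuming you track a last-reviewed date per page and flag which pages are citation targets. The `DocPage` fields and the 90-day default (a quarterly review) are assumptions; use 30 for a monthly cadence.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class DocPage:
    url: str
    last_reviewed: date
    is_citation_target: bool


def pages_due_for_review(
    pages: list[DocPage], today: date, review_interval_days: int = 90
) -> list[str]:
    """Return URLs of citation-target pages not reviewed within the interval."""
    interval = timedelta(days=review_interval_days)
    return [
        page.url
        for page in pages
        if page.is_citation_target and today - page.last_reviewed >= interval
    ]
```

This only covers the scheduled review. Updates triggered by product changes still belong in the release process, since a date check cannot tell you that behavior changed.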

Common mistakes that quietly kill LLM citations

I’ve made most of these mistakes myself.

- Publishing “overview” pages with no hard answers. They read well, but they don’t get cited.

- Splitting one task across five thin pages. Retrieval grabs the wrong chunk.

- Hiding plan gates and limitations. The page becomes untrustworthy.

- Letting duplicates pile up after launches. You create conflicting evidence.

- Adding popups or heavy scripts that interfere with rendering/extraction. (Even when humans don’t notice.)

If you suspect the last point is affecting you, it’s worth doing a technical pass focused on crawl and extraction. Skayle’s guide on technical SEO for AI visibility maps directly to documentation sites.
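A quick first check is whether your answer text exists in the raw HTML at all, before any JavaScript runs. The sketch below uses Python’s standard `html.parser` to collect visible text while skipping `script`, `style`, and `template` content. It assumes you already have the page HTML as a string (fetched however you like), and it is a rough proxy: it does not reproduce how any particular crawler or AI system processes a page.

```python
from html.parser import HTMLParser


class VisibleText(HTMLParser):
    """Collect text nodes, ignoring anything inside script/style/template."""

    SKIP = {"script", "style", "template"}

    def __init__(self) -> None:
        super().__init__()
        self._skip_depth = 0
        self.chunks: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIP:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIP and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth and data.strip():
            self.chunks.append(data.strip())


def answer_in_static_html(html: str, answer: str) -> bool:
    """True if the answer appears in the page's static text, whitespace-normalized."""
    parser = VisibleText()
    parser.feed(html)
    parser.close()
    page_text = " ".join(" ".join(parser.chunks).split())
    return " ".join(answer.split()) in page_text
```

If this returns `False` for a page whose answer you can see in the browser, the content is probably being injected client-side, and that page is worth a closer technical look.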

A quick note on “advanced” citation fixes

If you’re building RAG systems, the arXiv paper on citation failure includes a framework called Citention for improving citations without additional model training arXiv.

You don’t need to implement research-grade methods to improve your public docs. But it’s useful context: citations improve when retrieval and generation are better aligned to evidence.

If you want a broader research map, Raschka’s curated list of LLM papers is a solid jumping-off point Ahead of AI.

FAQ: Building a citation-ready knowledge base for LLM citations

How long does it take to see LLM citations after updating docs?

It depends on how quickly your pages are recrawled and reprocessed, and how competitive the prompt space is. In practice, plan to measure in weeks, not days, and focus first on citation-target pages with clear answer blocks.

Should I move docs to longer pages if Onely says content over 2,000 words gets cited more?

Not automatically. Use length as a completeness check, not a target. If you can answer the question fully in 900 words with tables, steps, and edge cases, that’s often better than 2,500 words of fluff.

Are tables and FAQs really worth the effort?

Yes, when they compress decisions and pre-answer edge cases. Onely’s analysis suggests tables are cited more often than unstructured content, and FAQs create clean question-answer chunks that are easy to extract Onely.

What’s the biggest architectural mistake teams make?

Treating the knowledge base like a library instead of a set of tasks. One mega hub with shallow pages forces retrieval to guess. Deep, tightly scoped pages with strong internal links are easier to cite and easier to convert.

Do I need schema to get LLM citations?

You can earn LLM citations without schema, but schema helps engines interpret entities and page intent reliably. If you’re rebuilding docs anyway, add schema where it clarifies products, FAQs, and key entities, then validate it as part of your release process.

How do I prove this work drives revenue, not just “visibility”?

Track the funnel: prompt → citation → click → assisted conversion. Even if you can’t attribute perfectly, you can show whether citation-target pages are gaining qualified visits and whether those visits correlate with signups, activations, or demos.

If you want to see how you currently appear in AI answers—and which doc URLs are (or aren’t) getting cited—Skayle can help you measure the gap and turn it into a rebuild plan. Start by looking at your current citation coverage, then decide which clusters to fix first. What’s the one doc topic you wish AI answers would stop getting wrong about your product?

References

- Citation Failure in LLMs: Definition, Analysis and Efficient Mitigation - arXiv

- LLM Citations & How to Earn them to Build Authority in 2026 - Wellows

- LLM-Friendly Content: 12 Tips to Get Cited in AI Answers - Onely

- The Definitive Guide to Formatting Content for Reliable LLM Citations - HyperMind AI

- How to Optimize for LLMs and Get Cited in AI Results - Eflot

- Retrieval Augmented Generation - OpenAI Platform

- Citations - Anthropic Documentation

- Citing LLMs in Academic Writing: Standards and Best Practices - Jenni AI

- LLM Research Papers: The 2024 List - Ahead of AI