TL;DR

Publishing more content can create a graveyard of decaying URLs. Content compounding fixes this by treating pages as assets: instrument outcomes, route authority with internal links, and refresh based on decay and citation gaps.

Most SaaS teams aren’t struggling because they publish too little. They’re struggling because they publish pages that never become assets. The fix is not higher volume; it’s building content that compounds domain authority, citations, and conversion over time.

Content compounding is the practice of turning each page into an improving asset through updates, internal linking, technical extractability, and measurement loops—so performance rises even when publishing slows.

A practical stance: publishing more is not a growth plan if the backlog is rotting. A smaller set of pages, instrumented and refreshed on purpose, will usually outperform a larger library that no one maintains.

1. The “content graveyard” pattern: what it is and why it spreads

A content graveyard is a library where most URLs get one push at launch and then quietly decay. The pages exist, they may even be indexed, but they stop earning rankings, links, conversions, and AI citations.

This pattern spreads for structural reasons:

- Output becomes the KPI. Teams report “X articles published,” not “Y pages improved in rankings and assisted revenue.”

- Research is disconnected from updates. Keyword research happens quarterly; refresh decisions happen never.

- Ownership is unclear. Writers ship, editors approve, and nobody is responsible for the URL’s next 12 months.

- The site becomes harder to crawl and understand. Thin pages and overlapping intent create index bloat, and weak internal linking leaves crawlers with no clear signal about which URL matters.

How graveyards show up in analytics

The symptoms are usually visible in Google Search Console long before a team admits it.

Look for:

- A growing count of indexed pages with flat impressions.

- Query cannibalization where multiple URLs trade positions for the same intent.

- CTR decay: impressions stay stable, clicks drop.

- Content that ranks for “adjacent” queries but misses the high-intent modifiers (pricing, alternatives, integration, security, etc.).

On the conversion side, the same issue appears in Google Analytics (GA4) as a widening gap between organic sessions and meaningful events. The traffic is not the win. Assisted conversions and qualified demo requests are.

Why it matters more in 2026

In 2026, the funnel has an additional gate: AI answers. A page that ranks but is not extractable, cite-worthy, or clearly associated with an entity (brand, product, category) can lose the click even when it “wins” the SERP.

If AI answers summarize your category without citing your site, the library becomes a cost center.

This is why teams are shifting toward compounding domain authority: fewer URLs, higher extractability, and clear refresh economics. Skayle’s own writing on AI search visibility measurement frames the core issue well: it’s not just rankings, it’s citation coverage.

2. Content compounding, defined: the economics of improving URLs instead of replacing them

Content compounding means a URL gains performance because it is designed to improve with time. It is not “updating content sometimes.” It is a repeatable operating model.

A compounding URL has four characteristics:

- Stable intent. The page has a clear job (e.g., “SOC 2 compliance checklist for SaaS” or “X vs Y alternatives”).

- Updatable structure. Sections, tables, FAQs, and entities are modular so changes don’t require rewrites.

- Internal link gravity. The URL sits in a cluster with deliberate link flows.

- Measurement hooks. Rankings, citations, and conversion events are tracked at the page level.

The PILE Loop (a simple named model that teams can reuse)

A lightweight framework that fits most SaaS content programs:

- P — Publish with structure: ship with clear sections, FAQs, and schema-ready formatting.

- I — Instrument outcomes: track rankings, citations, and conversion events tied to the URL.

- L — Link to consolidate: strengthen the page’s cluster, reduce cannibalization, and route authority.

- E — Evolve on schedule: refresh based on decay signals and new query demand.

The PILE Loop is intentionally boring. That’s the point. Content compounding is infrastructure work.

Contrarian rule that prevents graveyards

Don’t build a bigger library to “cover more keywords.” Build a smaller set of pages that you can afford to maintain.

This tradeoff is easier to accept when the program is measured correctly. A team can keep a flat publishing cadence and still grow organic pipeline if its top cluster is refreshed and linked with discipline. Skayle has covered the mechanics of this kind of refresh system in its content refresh playbook.

A concrete worked example (illustrative, not proprietary)

Consider a SaaS site with 250 blog URLs.

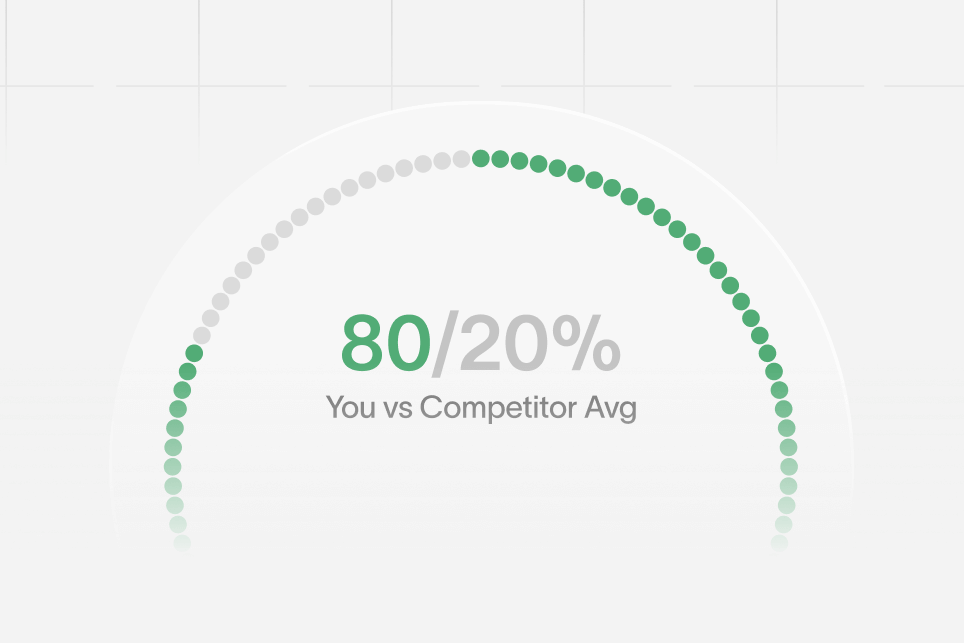

- Baseline: 40 URLs drive 80% of organic clicks; 160 URLs get <10 clicks/month.

- Intervention: consolidate 30 thin posts into 8 stronger pages, add internal links from high-authority pages, refresh the top 20 URLs with updated sections and FAQs.

- Expected outcome: fewer indexed URLs, higher average impressions per URL, and higher conversion rate because visitors land on pages built for decision-making.

- Timeframe: 6–10 weeks to see measurable movement in GSC impressions/CTR, longer for competitive head terms.

The point is not the exact lift. The point is that compounding changes the denominator: performance per URL matters more than raw URL count.
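The denominator effect can be checked with a few lines of arithmetic. The sketch below uses the 250-URL site and the 30-into-8 consolidation from the example. The monthly click total is a hypothetical placeholder, and the sketch assumes total clicks stay flat, so only the URL count changes.

```python
def urls_after_consolidation(total_urls: int, merged_from: int, merged_into: int) -> int:
    """Net URL count after folding `merged_from` thin posts into `merged_into` pages."""
    return total_urls - merged_from + merged_into


def clicks_per_url(total_clicks: float, url_count: int) -> float:
    """Average organic clicks per URL for a given library size."""
    return total_clicks / url_count


BASELINE_URLS = 250
# Hypothetical monthly organic clicks; replace with your own GSC total.
TOTAL_CLICKS = 10_000

after = urls_after_consolidation(BASELINE_URLS, merged_from=30, merged_into=8)
before_avg = clicks_per_url(TOTAL_CLICKS, BASELINE_URLS)
after_avg = clicks_per_url(TOTAL_CLICKS, after)  # same clicks, smaller denominator

print(after)                 # 228
print(round(before_avg, 1))  # 40.0
print(round(after_avg, 1))   # 43.9
```

Under that flat-traffic assumption, average clicks per URL rise by roughly 10% from consolidation alone. Any lift from the refreshed and relinked pages would come on top of it.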

3. The audit that separates “publish more” teams from compounding teams

Compounding starts with a diagnostic that is ruthless about inventory quality. A content audit should answer two questions:

- Which URLs are true assets worth protecting?

- Which URLs are stealing crawl, authority, and clarity?

This is where most teams fail: they do keyword research and editorial planning, but they do not run audits often enough to keep the library coherent.

A practical audit workflow typically combines:

- Screaming Frog for crawl data and indexability signals.

- Google Search Console for query-level performance.

- Looker Studio (or a warehouse like BigQuery) for joining data into a usable view.

Skayle’s cluster-first approach to audits aligns with what high-performing teams do manually; the difference is cadence and consistency. For a deeper breakdown of refresh clustering logic, see the cluster audit approach.

The compounding scorecard (an audit artifact teams can copy)

Instead of scoring “SEO quality” subjectively, score pages on signals that predict compounding:

- Intent clarity: one primary job, one primary buyer stage.

- SERP match: does the format match what ranks (list, template, comparison, definition, tool page)?

- Extractability: answer-ready paragraphs, lists, and FAQs.

- Entity coverage: product, category, and problem entities are explicitly named.

- Internal link role: hub, spoke, or orphan.

- Conversion readiness: clear next step for the reader, not just “keep reading.”

This scorecard becomes “proprietary” only in the sense that each team turns it into its own internal rubric. The value is not the score itself; it is that scoring forces a keep, merge, refresh, or retire decision for every URL.
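One way to turn the scorecard into decisions is to give each signal a small numeric score and map the totals to the keep, merge, refresh, and retire tags from the week-1 checklist. The 0-2 scale, the point values for link roles, and the thresholds below are hypothetical. Treat them as a starting rubric to argue with, not a calibrated model.

```python
from dataclasses import dataclass


# Hypothetical 0-2 scale per signal: 0 = missing, 1 = partial, 2 = strong.
@dataclass
class PageScore:
    url: str
    intent_clarity: int
    serp_match: int
    extractability: int
    entity_coverage: int
    conversion_readiness: int
    link_role: str  # "hub", "spoke", or "orphan"
    overlaps_stronger_page: bool = False  # same intent as a better URL

    def total(self) -> int:
        role_points = {"hub": 2, "spoke": 1, "orphan": 0}[self.link_role]
        return (self.intent_clarity + self.serp_match + self.extractability
                + self.entity_coverage + self.conversion_readiness + role_points)


def decide(page: PageScore) -> str:
    """Map a page to keep / merge / refresh / retire (illustrative thresholds)."""
    if page.overlaps_stronger_page:
        return "merge"  # consolidate into the stronger URL regardless of score
    score = page.total()  # maximum is 12
    if score >= 9:
        return "keep"
    if page.intent_clarity == 0 and score <= 3:
        return "retire"  # no clear job and little else worth saving
    return "refresh"


# Hypothetical pages for illustration.
pages = [
    PageScore("/soc-2-checklist", 2, 2, 2, 2, 1, "hub"),
    PageScore("/soc-2-tips", 1, 1, 1, 1, 0, "spoke", overlaps_stronger_page=True),
    PageScore("/old-webinar-recap", 0, 0, 1, 0, 0, "orphan"),
    PageScore("/x-vs-y", 2, 1, 0, 1, 1, "spoke"),
]
for p in pages:
    print(p.url, decide(p))
```

Checking for overlap before scoring reflects the audit's second question: a well-written page can still be stealing clarity from a stronger URL.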

A numbered action checklist (what to do in week 1)

1. Export all indexable URLs and group by topic cluster.

2. Pull 90-day query + page data from GSC.

3. Flag cannibalization (multiple URLs competing for the same query set).

4. Tag pages as keep, merge, refresh, or retire.

5. Identify 10 internal link opportunities from high-authority pages to money pages.

6. Add conversion events in GA4 for demo, trial, signup, and key micro-conversions.

7. Build a refresh calendar based on decay signals, not editorial preferences.

If a team can’t do these seven steps consistently, it will not achieve content compounding. It will only ship.
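The cannibalization flag from the checklist can be approximated from a 90-day GSC export that includes both the query and page dimensions. This sketch assumes rows of (query, page, clicks, impressions). It treats a query as cannibalized when two or more URLs each hold at least 20% of its impressions. Both thresholds and the sample rows are hypothetical.

```python
from collections import defaultdict


def flag_cannibalization(rows, min_impressions=50, min_share=0.2):
    """Return {query: [pages]} where 2+ URLs each hold a meaningful impression share.

    rows: iterable of (query, page, clicks, impressions) tuples, e.g. from a
    90-day GSC export with both the query and page dimensions.
    """
    by_query = defaultdict(list)
    for query, page, _clicks, impressions in rows:
        by_query[query].append((page, impressions))

    flagged = {}
    for query, pages in by_query.items():
        total = sum(imp for _, imp in pages)
        if total < min_impressions:
            continue  # too little demand to act on
        contenders = sorted(page for page, imp in pages if imp / total >= min_share)
        if len(contenders) >= 2:
            flagged[query] = contenders
    return flagged


# Hypothetical export rows.
rows = [
    ("soc 2 checklist", "/blog/soc-2-checklist", 40, 900),
    ("soc 2 checklist", "/blog/soc-2-guide", 12, 600),
    ("soc 2 checklist", "/blog/security-faq", 1, 30),
    ("soc 2 cost", "/blog/soc-2-cost", 20, 400),
]
print(flag_cannibalization(rows))
# {'soc 2 checklist': ['/blog/soc-2-checklist', '/blog/soc-2-guide']}
```

Impression share is used instead of position because it captures URLs that trade places over the period, which a single average position hides.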

4. Building pages that AI answers can cite (without turning them into FAQ spam)

AI answer inclusion is increasingly mediated by trust, structure, and extractability. Pages get summarized when they are easy to parse, specific, and consistent with known entities.

In 2026, the most common failure mode is not “bad writing.” It’s un-extractable writing.

Design the new funnel: impression → AI answer inclusion → citation → click → conversion

A compounding system assumes this path:

- Impression: the topic appears in SERPs and AI answer experiences.

- AI answer inclusion: the page is eligible because it has clear sections and entities.

- Citation: the brand is referenced and linked.

- Click: the snippet promises specificity, not generic advice.

- Conversion: the landing experience matches the promise.

This is why content and conversion design can’t be separated. If a cited answer leads to a bloated, slow, or unclear page, the click is wasted.

Structural elements that raise citation odds

These are not hacks; they are readability and extraction improvements:

- Short definitional blocks (40–80 words) near the top of the page.

- List-form breakdowns for steps, requirements, and decision criteria.

- Comparison tables that clearly define what differs (audience, pricing model, limitations).

- FAQ blocks that mirror how buyers ask questions.

On the technical side, validate eligibility and extraction:

- Use Google’s Rich Results Test to check rich-result eligibility, and the Schema Markup Validator for general Schema.org validation.

- Follow Schema.org vocabulary for FAQ, HowTo (where appropriate), and Organization entities.

- Monitor performance and indexing with Google Search Console.

Skayle’s coverage of technical extractability fixes is useful here because it treats crawl and rendering as prerequisites to AI visibility, not separate “tech debt.”
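For the FAQ blocks, here is a minimal sketch that generates Schema.org FAQPage JSON-LD from question and answer pairs. The helper name is hypothetical. The @type values (FAQPage, Question, Answer) and the mainEntity and acceptedAnswer properties come from the Schema.org vocabulary. Since 2023, Google has limited FAQ rich results to a narrow set of sites, so treat this markup as a structure and extraction aid rather than a guaranteed SERP feature. Validate the output before shipping.

```python
import json


def faq_jsonld(pairs):
    """Build a Schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


markup = faq_jsonld([
    ("What is content compounding?",
     "Content compounding is the practice of turning each page into an improving asset "
     "through updates, internal linking, technical extractability, and measurement loops."),
])
# Embed the serialized output in the page inside <script type="application/ld+json">.
print(json.dumps(markup, indent=2))
```

Generating the markup from the same question and answer pairs that render on the page keeps the visible FAQ and the structured data from drifting apart after a refresh.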

Common mistakes that kill AI citations

- Writing like a thought-leadership post. AI systems prefer specific, checkable statements over vague positioning.

- Burying the answer. If the definition appears after 800 words, it often won’t be extracted.

- FAQ spam. A wall of shallow FAQs doesn’t increase trust; it dilutes the page.

- Entity ambiguity. If the product name, category, and use case are inconsistent, the page is harder to associate with the right entity.
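A rough lint for burying the answer can check whether a definition-sized paragraph appears early. This sketch assumes paragraphs are separated by blank lines. It treats the first 40-80 word paragraph as the definitional block, which is a heuristic, since not every paragraph of that length is a definition. The 800-word limit mirrors the figure above.

```python
def definition_offset(text, min_words=40, max_words=80):
    """Return the word offset where the first 40-80 word paragraph starts, or None.

    Heuristic: assumes paragraphs are separated by blank lines and that a
    paragraph of definition length is the page's definitional block.
    """
    offset = 0
    for para in text.split("\n\n"):
        words = para.split()
        if min_words <= len(words) <= max_words:
            return offset
        offset += len(words)
    return None


def answer_is_buried(text, limit=800):
    """True if no definitional block starts within the first `limit` words."""
    start = definition_offset(text)
    return start is None or start >= limit


intro = "Short hook sentence."            # 3 words
definition = " ".join(["word"] * 60)      # stand-in for a 60-word definition
page = intro + "\n\n" + definition
print(definition_offset(page))  # 3
print(answer_is_buried(page))   # False
```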

A team that wants content compounding should treat extractability as a content standard, not a last-minute SEO pass. If schema needs to be more conversational, Skayle’s notes on structured data tweaks are a practical reference.

5. Internal linking as authority routing (not “add 3 links per post”)

Most internal linking advice is generic because it’s written for blogs, not for domain authority systems.

Compounding teams treat internal links as routing. The goal is to concentrate authority into the pages that:

- match stable intent,

- sit closest to revenue, and

- are built to convert.

The cluster map that prevents cannibalization

A simple cluster map for SaaS looks like this:

- Hub: category-level explainer (e.g., “What is contract management software?”)

- Spokes: use cases, comparisons, integrations, templates, and alternatives

- Money pages: product pages, pricing, demo/trial pages

The hub is not a blog post. It is a durable asset that earns links and funnels authority.

When clusters are weak, teams often try to compensate with more publishing. That’s how graveyards expand.

Tactical linking moves that compound

- Link from winners to builders. Use the pages already getting clicks to lift the pages that should be getting conversions.

- Retire or merge near-duplicates. Fewer, stronger pages reduce confusion for crawlers and readers.

- Use descriptive anchors. “SOC 2 checklist” is better than “click here.”

- Build link loops inside the cluster. Each spoke links back to the hub and to at least one adjacent spoke.
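The link-loop rule can be checked mechanically against a crawl export of internal links, for example a Screaming Frog inlinks report converted to source and target pairs. This sketch assumes each cluster is given as one hub plus a list of spokes. The URLs in the usage example are hypothetical.

```python
def check_cluster_links(hub, spokes, links):
    """Report spokes that break the cluster linking rules.

    hub: hub URL; spokes: list of spoke URLs;
    links: set of (source, target) internal links, e.g. from a crawl export.
    """
    issues = {}
    spoke_set = set(spokes)
    for spoke in spokes:
        problems = []
        if (spoke, hub) not in links:
            problems.append("no link back to hub")
        if not any((spoke, other) in links for other in spoke_set - {spoke}):
            problems.append("no link to an adjacent spoke")
        if not any(target == spoke for _, target in links):
            problems.append("orphan: no inbound internal links")
        if problems:
            issues[spoke] = problems
    return issues


hub = "/contract-management-software"
spokes = ["/use-cases/legal", "/integrations/salesforce", "/templates/nda"]
links = {
    ("/use-cases/legal", hub),
    ("/use-cases/legal", "/integrations/salesforce"),
    ("/integrations/salesforce", hub),
    (hub, "/use-cases/legal"),
    (hub, "/integrations/salesforce"),
}
print(check_cluster_links(hub, spokes, links))
```

Running a check like this after every merge or retirement tends to catch spokes that lose their inbound links when an old URL is redirected.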

For programmatic and template-driven content, linking becomes even more important because scale amplifies problems. Skayle’s perspective on programmatic infrastructure is useful for avoiding index bloat and keeping templates compounding instead of decaying.

Conversion implications: links should land on pages that close

Internal links are a UX decision, not just an SEO decision.

If a blog post drives clicks and then links only to other blogs, the user stays in research mode. If it links to a comparison page, a template, and a relevant integration page, the user progresses.

Compounding is about making the “next click” predictable.

6. The compounding cadence: refresh loops, measurement, and governance that scale

A compounding program needs cadence. Without schedules and ownership, the library becomes an archive again.

Refresh triggers that teams can operationalize

Refreshes should not be based on “this feels old.” Use triggers:

- Impressions stable, CTR down: titles/meta mismatch or snippet competition.

- Rankings down across a cluster: internal links and topical coherence need work.

- Traffic stable, conversions down: intent mismatch, weak CTAs, or page experience issues.

- AI citation gap: competitors are cited in prompts where the brand is not.
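The first three triggers can be expressed as comparisons between two periods of GSC and GA4 data for the same URL or cluster. This sketch uses hypothetical thresholds: a change within ±10% counts as stable, a 20% fall counts as down, and a three-place slide in average position counts as a ranking drop. The AI citation gap is left out because it needs prompt-level tracking rather than GSC or GA4 data.

```python
from dataclasses import dataclass


@dataclass
class PeriodMetrics:
    impressions: int
    clicks: int
    conversions: int
    avg_position: float

    @property
    def ctr(self) -> float:
        return self.clicks / self.impressions if self.impressions else 0.0


def pct_change(before: float, after: float) -> float:
    return (after - before) / before if before else 0.0


def refresh_triggers(prev: PeriodMetrics, curr: PeriodMetrics,
                     stable=0.10, drop=-0.20):
    """Return the trigger labels that fire between two periods (illustrative thresholds)."""
    triggers = []
    imp = pct_change(prev.impressions, curr.impressions)
    clk = pct_change(prev.clicks, curr.clicks)
    if abs(imp) <= stable and pct_change(prev.ctr, curr.ctr) <= drop:
        triggers.append("ctr_decay: check title/meta and snippet competition")
    if abs(clk) <= stable and pct_change(prev.conversions, curr.conversions) <= drop:
        triggers.append("conversion_drop: check intent match, CTAs, page experience")
    if curr.avg_position - prev.avg_position >= 3:  # a larger number is a worse rank
        triggers.append("ranking_drop: review internal links and cluster coherence")
    return triggers


# Hypothetical periods for one URL.
prev = PeriodMetrics(impressions=10_000, clicks=400, conversions=20, avg_position=6.0)
curr_ctr = PeriodMetrics(impressions=10_200, clicks=260, conversions=13, avg_position=6.5)
curr_conv = PeriodMetrics(impressions=10_100, clicks=390, conversions=12, avg_position=6.2)
print(refresh_triggers(prev, curr_ctr))
print(refresh_triggers(prev, curr_conv))
```

For the cluster-level ranking trigger, run the same comparison on aggregated cluster metrics rather than single URLs, so one volatile page doesn't set off a cluster-wide review.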

Skayle’s approach to LLM citation audits fits neatly here: treat citations like a measurable surface area, not a vague branding benefit.

Measurement plan (baseline → target → instrumentation → review)

A truthful compounding plan can be written without inventing results:

- Baseline metrics (per URL): GSC clicks, impressions, CTR, average position; GA4 conversion rate; assisted conversions; time to index.

- Target metrics (per cluster): increase total clicks and conversions from the cluster; reduce cannibalization; increase citation coverage for defined prompts.

- Instrumentation: GSC exports, GA4 events, annotation log of refreshes, and a dashboard in Looker Studio.

- Review cadence: biweekly for top clusters, monthly for the long tail.
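Rolling per-URL baselines up to the cluster level is mostly a join and a sum. This sketch assumes each row already joins GSC clicks and impressions to GA4 sessions and conversions by URL, with a cluster label from the audit. The key names and numbers are hypothetical.

```python
from collections import defaultdict


def rollup_by_cluster(rows):
    """Aggregate per-URL rows into per-cluster totals with recomputed rates.

    rows: dicts with keys cluster, clicks, impressions, sessions, conversions
    (GSC clicks/impressions joined to GA4 sessions/conversions by URL).
    """
    totals = defaultdict(lambda: {"clicks": 0, "impressions": 0, "sessions": 0, "conversions": 0})
    for row in rows:
        t = totals[row["cluster"]]
        for key in t:
            t[key] += row[key]

    report = {}
    for cluster, t in totals.items():
        report[cluster] = {
            **t,
            "ctr": t["clicks"] / t["impressions"] if t["impressions"] else 0.0,
            "conversion_rate": t["conversions"] / t["sessions"] if t["sessions"] else 0.0,
        }
    return report


# Hypothetical baseline rows.
rows = [
    {"cluster": "soc-2", "clicks": 300, "impressions": 10_000, "sessions": 320, "conversions": 8},
    {"cluster": "soc-2", "clicks": 100, "impressions": 5_000, "sessions": 110, "conversions": 2},
    {"cluster": "integrations", "clicks": 50, "impressions": 2_500, "sessions": 60, "conversions": 3},
]
report = rollup_by_cluster(rows)
print(report["soc-2"])
```

CTR and conversion rate are recomputed from the summed totals rather than averaged across URLs, so a low-traffic page with an extreme rate can't skew the cluster figure.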

Governance: who owns the URL after publish

Content graveyards happen when “publish” is treated as the finish line.

Compounding teams assign explicit ownership:

- A URL has a steward (often an SEO lead or content ops owner).

- Refresh windows are scheduled (e.g., 30/90/180-day reviews for priority pages).

- Change logs exist (what changed, why, what was expected to improve).
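These ownership rules translate into a small record per URL: a steward, a publish date, scheduled review dates, and a change log. This sketch uses the 30/90/180-day windows from the example above. The class names, field names, and sample entry are hypothetical.

```python
from dataclasses import dataclass, field
from datetime import date, timedelta

REVIEW_WINDOWS_DAYS = (30, 90, 180)  # example windows for priority pages


@dataclass
class ChangeLogEntry:
    changed_on: date
    what: str
    why: str
    expected: str  # the metric this change is expected to improve


@dataclass
class UrlRecord:
    url: str
    steward: str
    published: date
    log: list[ChangeLogEntry] = field(default_factory=list)

    def review_dates(self) -> list[date]:
        return [self.published + timedelta(days=d) for d in REVIEW_WINDOWS_DAYS]

    def next_review(self, today: date):
        """Earliest scheduled review on or after `today`, or None once all have passed."""
        upcoming = [d for d in self.review_dates() if d >= today]
        return upcoming[0] if upcoming else None


rec = UrlRecord("/blog/soc-2-checklist", steward="seo-lead", published=date(2026, 1, 15))
rec.log.append(ChangeLogEntry(date(2026, 2, 14), "Added FAQ block",
                              "Buyers ask about audit timelines",
                              "CTR on audit-timeline queries"))
print(rec.review_dates())
print(rec.next_review(date(2026, 3, 1)))
```

Once the last window passes, next_review returns None, which is a reasonable point to move the page into the monthly long-tail review.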

This is also where AI workflows break. When tools are fragmented, updates don’t ship. If the workflow is messy, Skayle’s breakdown of fixing AI content handoffs is a helpful reference because it treats content as an operating system problem, not a writing problem.

Key takeaways readers can apply immediately

- Content compounding is an operating model: structured publish, instrumentation, internal link routing, and scheduled evolution.

- The fastest way to stop building a content graveyard is to audit inventory and consolidate ruthlessly.

- AI answers raise the bar on extractability; definitional blocks, lists, and clean entities matter.

- Internal links are authority routing. Treat them like infrastructure.

- Refresh cadence and governance are the difference between a library and an asset base.

If the goal is content compounding rather than content volume, start by measuring where your brand appears in AI answers and which clusters are decaying, then build a refresh and internal linking cadence that turns the existing library into compounding authority. To see how Skayle approaches this end-to-end, teams can measure their AI visibility and use those signals to prioritize what should be refreshed next.