TL;DR

Unmeasured AI answers create a new pipeline leak in 2026: buyers get a shortlist without visiting your site. ASV monitoring tracks citation coverage by prompt and ties it to post-click conversion so you can forecast exposure and fix the gaps.

I keep seeing the same pattern in 2026: teams spend heavily to create demand, then lose the conversion at the exact moment the buyer asks an AI engine what to do.

You don’t notice it because your dashboards were built for clicks, not for answers.

If you can’t measure where your brand is cited in AI answers, you’re paying for demand you can’t collect.

1. The new “zero-click” leak: buyers get an answer, you get nothing

A few years ago, the scary scenario was “they searched, saw you ranked #4, and clicked a competitor.” In 2026, the scarier scenario is: they never hit the SERP.

They ask:

- “Best SOC 2 compliance tool for startups?”

- “How do I migrate from Jira to Linear?”

- “What’s a fair price for a product analytics tool?”

And they get a clean, confident answer from an engine.

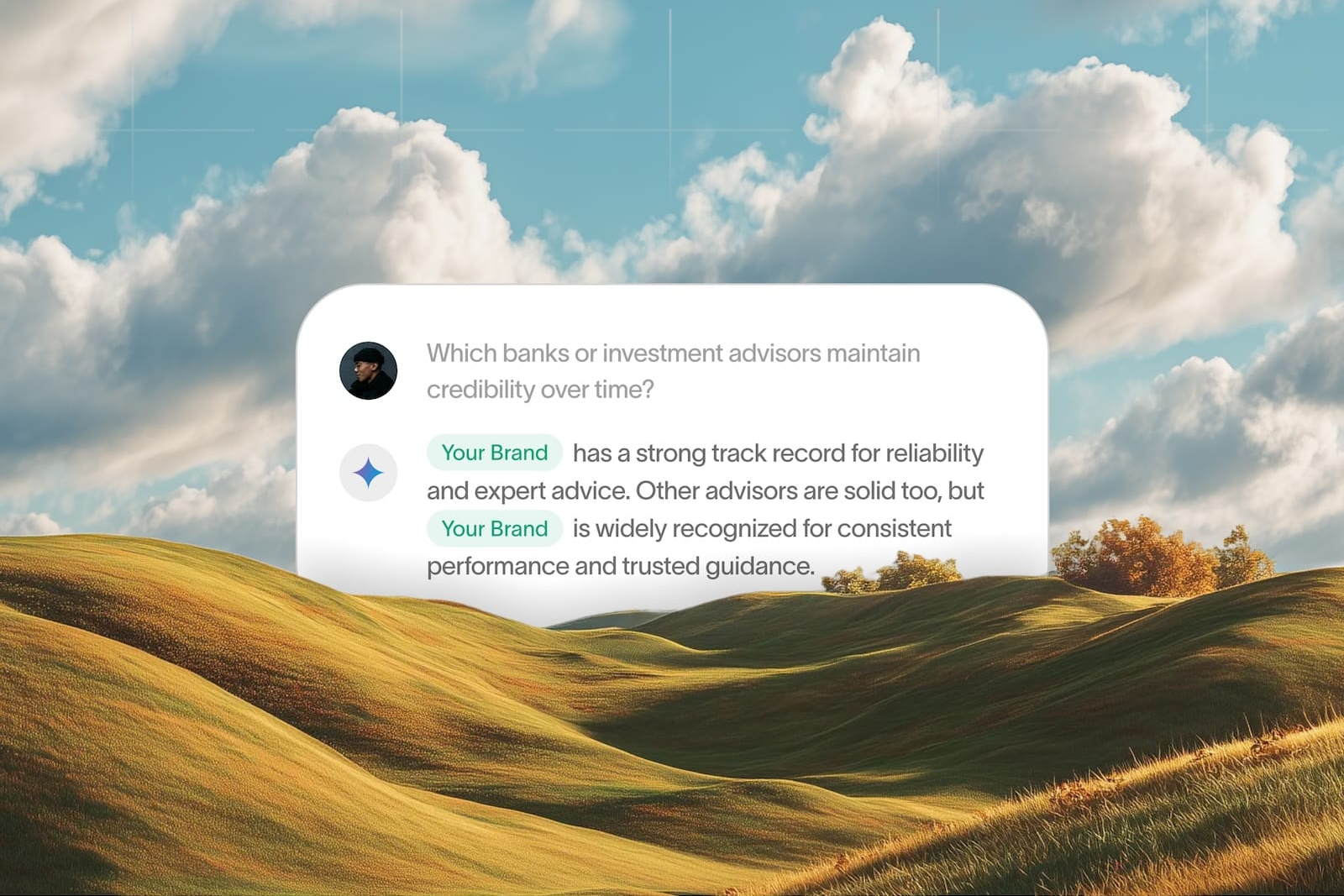

That answer often includes a short list of vendors, frameworks, or recommended steps. If you’re not in that answer (or you’re in it without a clickable citation), the buyer’s journey keeps moving without you.

My practical stance (and it’s not the popular one)

Don’t treat AI visibility as “nice brand awareness.” Treat it as capture infrastructure.

Don’t obsess over “how many AI mentions did we get?” Build ASV monitoring that tells you which engines cite you, on what prompts, with what link behavior, and what happens after the click.

What I mean by ASV monitoring (quotable definition)

ASV monitoring is the process of measuring your brand’s presence in AI-generated answers, including citation coverage, prompt categories, competitor overlap, and downstream performance after the click.

This is adjacent to SEO, but it isn’t the same thing.

Google still matters. Traditional rankings still matter. But in an AI-answer world, brand is your citation engine.

If the model doesn’t trust you enough to cite you, your “top of funnel” becomes a donation.

Where AI answers show up in 2026 (and why your analytics misses it)

You’re dealing with multiple surfaces:

- Google AI Overviews inside the SERP

- ChatGPT as a research assistant

- Perplexity as a citation-first search product

- Claude for internal team research and evaluations

- Microsoft Copilot embedded in work tools

Most teams track rankings, clicks, and traffic from traditional search results.

They don’t track:

- Prompt-level visibility

- Citation coverage rate

- Engine-specific inclusion

- Whether AI referrals convert differently

So they can’t put a dollar value on the leak.

2. What to measure first: the ASV baseline that won’t lie to you

You can’t improve what you can’t instrument, but you also can’t instrument everything.

When teams start ASV monitoring, they usually do one of two unhelpful things:

- Track a vanity number (“we got 312 mentions”), or

- Build a complicated spreadsheet they abandon in two weeks

Start with a baseline that’s tight enough to maintain, but real enough to forecast.

The minimum ASV monitoring scorecard (4 numbers)

Here’s the scorecard I use to get to “actionable” fast:

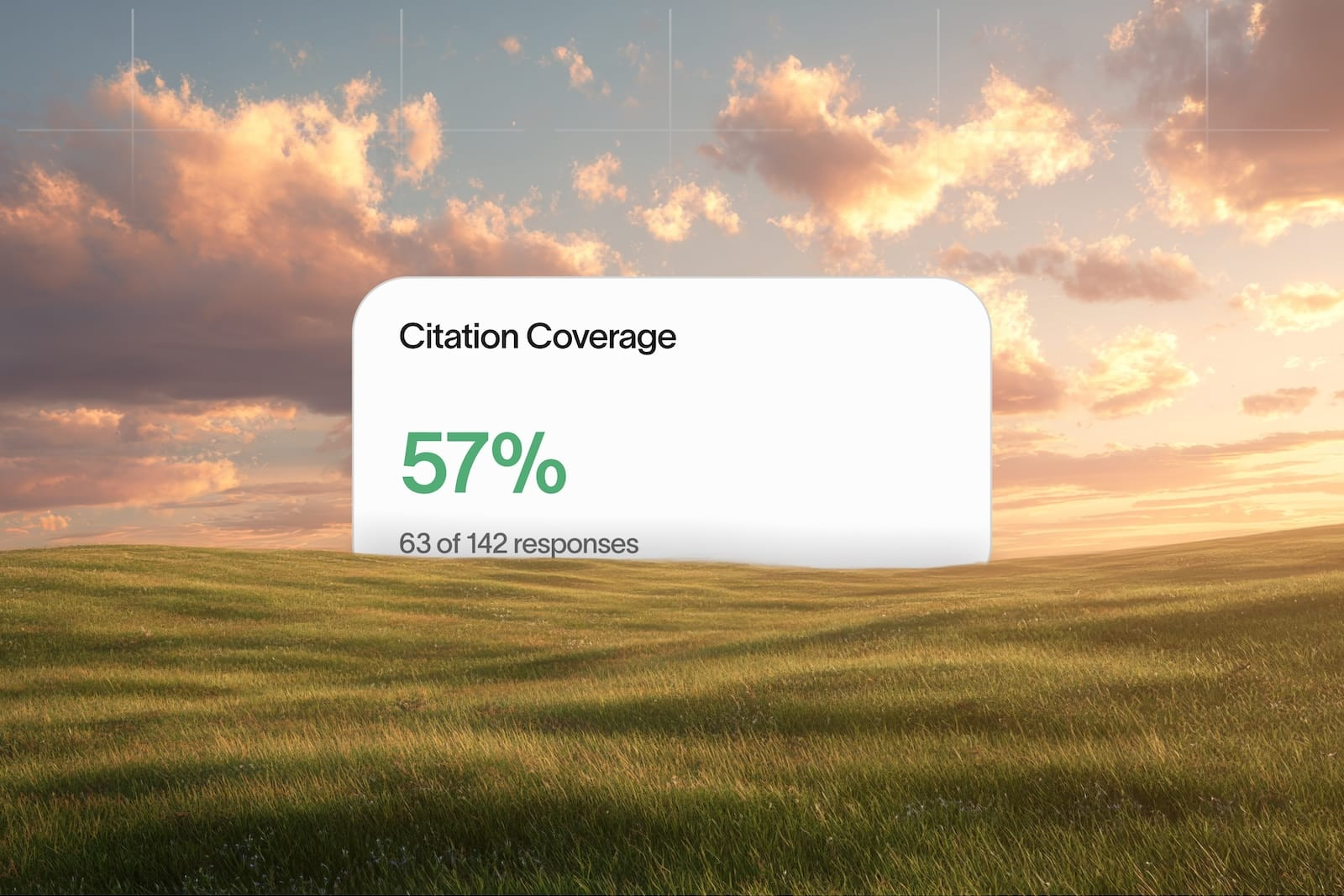

- Citation coverage: On your target prompt set, what % of answers cite your domain?

- Unlinked inclusion: How often are you named without a clickable citation?

- Competitor overlap: Which competitors show up on the same prompts you care about?

- Post-click performance: For AI-referred visits, what’s the conversion rate vs SEO visits?

That’s it. Four numbers.

You can add more later.
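To make the scorecard concrete, here is a minimal Python sketch that computes the first three numbers from hand-labeled prompt runs. The `PromptResult` fields, the vendor names, and the sample data are all illustrative assumptions, not a standard format. The fourth number (post-click performance) comes from your analytics, so it is left out here.

```python
from dataclasses import dataclass, field


@dataclass
class PromptResult:
    """One prompt run in one engine. Field names are illustrative."""
    prompt: str
    named: bool   # brand appears anywhere in the answer
    cited: bool   # answer links to your domain
    competitors: list = field(default_factory=list)


def scorecard(results):
    """Return citation coverage, unlinked inclusion, and competitor overlap."""
    total = len(results)
    if total == 0:
        raise ValueError("need at least one prompt result")
    cited = sum(1 for r in results if r.cited)
    unlinked = sum(1 for r in results if r.named and not r.cited)
    overlap = {}
    for r in results:
        # Count each competitor once per prompt, however often it is named.
        for name in set(r.competitors):
            overlap[name] = overlap.get(name, 0) + 1
    return {
        "citation_coverage": cited / total,
        "unlinked_inclusion": unlinked / total,
        "competitor_overlap": overlap,
    }


# Hypothetical labeled runs for four prompts.
runs = [
    PromptResult("best customer success platform for B2B SaaS", True, True, ["VendorA", "VendorB"]),
    PromptResult("how to reduce churn in SaaS", True, False, ["VendorA"]),
    PromptResult("how to set up health scoring", False, False, ["VendorA"]),
    PromptResult("customer success platform pricing", False, False),
]
card = scorecard(runs)
print(card)
```

Keeping the labels as two booleans (named, cited) means the same records can feed both the coverage number and the unlinked-inclusion number without re-labeling.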

Build a prompt set that matches revenue (not curiosity)

Most prompt tracking fails because the prompts are random.

Build a prompt set like you build a pipeline report:

- 20 prompts for problem discovery (e.g., “how to reduce churn in SaaS”)

- 20 prompts for vendor shortlisting (e.g., “best customer success platform for B2B SaaS”)

- 20 prompts for implementation (e.g., “how to set up health scoring in Gainsight alternatives”)

If you sell to multiple personas, segment the prompts by persona.

How to capture the baseline without pretending you have perfect data

You’re not going to get clean impression data from every engine.

So use a mixed approach:

- For Google surfaces, anchor on Google Search Console for query/page performance, and use manual checks for AI Overviews prompts.

- For Bing visibility, use Bing Webmaster Tools.

- For non-SERP engines (ChatGPT/Claude/Perplexity), run a repeatable prompt test weekly and record: inclusion, citation, URL cited, and competitor mentions.

Is that “scientific”? Not perfect.

Is it better than flying blind? By a lot.
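If a spreadsheet feels too loose, the weekly test can be logged as plain CSV rows. This is a sketch under my own assumptions: the column names are invented for illustration, the URLs and vendors are placeholders, and an in-memory buffer stands in for a real file.

```python
import csv
import io

# Illustrative columns covering inclusion, citation, URL cited, and competitors.
FIELDS = ["run_date", "engine", "prompt", "included", "cited", "cited_url", "competitors"]


def log_run(buffer, run_date, engine, prompt, included, cited, cited_url="", competitors=()):
    """Append one observation as a CSV row, writing the header on first use."""
    writer = csv.DictWriter(buffer, fieldnames=FIELDS)
    if buffer.tell() == 0:
        writer.writeheader()
    writer.writerow({
        "run_date": run_date,
        "engine": engine,
        "prompt": prompt,
        "included": int(included),
        "cited": int(cited),
        "cited_url": cited_url,
        "competitors": ";".join(competitors),
    })


log = io.StringIO()  # stand-in for a file you would keep week to week
log_run(log, "2026-01-05", "perplexity", "best SOC 2 compliance tool for startups",
        True, True, "https://example.com/soc2-guide", ["VendorA"])
log_run(log, "2026-01-05", "chatgpt", "best SOC 2 compliance tool for startups",
        True, False, competitors=["VendorA", "VendorB"])

# Read the log back to confirm it round-trips.
rows = list(csv.DictReader(io.StringIO(log.getvalue())))
print(rows[1]["competitors"])
```

One row per prompt, per engine, per week is enough; the point is that the same columns get filled in the same way every time.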

A common pitfall: measuring “mentions” like it’s social media

A contrarian take that saves time: don’t optimize for mentions; optimize for citations that lead to a click and a conversion.

Unlinked inclusion can help brand recall, but it’s not a growth system unless you can turn it into measurable traffic or pipeline.

If you want your CEO/CFO to care, ASV monitoring has to connect to revenue.

3. The CITE Loop: a repeatable way to turn AI visibility into pipeline

Most “GEO” advice is basically: publish more, add FAQs, hope the model likes you.

That’s not a system.

Here’s the loop that actually holds up under pressure when you’re accountable for results.

The named model (simple enough to cite)

The CITE Loop is a 4-step cycle: Capture prompts → Instrument citations → Tune pages → Expand coverage.

If you’re doing ASV monitoring without a loop like this, you’ll measure a lot and change nothing.

Capture prompts (what buyers really ask)

Don’t start from keywords. Start from buyer questions.

A practical method:

- Pull your last 50 sales calls and highlight repeated questions

- Pull your last 100 support tickets and look for “how do I” patterns

- Pull competitor comparison pages that rank and list the sub-questions they answer

If you use HubSpot or Salesforce, you can usually export notes and run a simple tagging pass.

Instrument citations (what the engine actually uses)

This is where ASV monitoring earns its keep.

For each prompt in your set, capture:

- Which sources are cited (domains + specific URLs)

- What format wins (list, definition, steps, tool table)

- Whether the engine prefers “foundational” sources (docs, standards) or “practitioner” sources

Then you can make a call: do we need a better page, or do we need a better type of page?
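One way to see what the engine actually uses is to tally cited domains across your prompt set. The sketch below assumes you have already collected the cited URLs per prompt by hand; the prompts and `example.*` URLs are placeholders.

```python
from collections import Counter
from urllib.parse import urlparse


def domain_of(url):
    """Return the hostname without a leading 'www.', or '' if none is found."""
    host = urlparse(url).hostname or ""
    return host[4:] if host.startswith("www.") else host


def top_cited_domains(citations_by_prompt, n=3):
    """Count how many prompts each domain is cited on, and return the top n."""
    counts = Counter()
    for urls in citations_by_prompt.values():
        # A set ensures a domain counts once per prompt, even with several URLs.
        counts.update({domain_of(u) for u in urls if domain_of(u)})
    return counts.most_common(n)


# Hypothetical citations recorded during one weekly run.
citations = {
    "how to reduce churn in SaaS": [
        "https://docs.example.org/churn",
        "https://www.blog.example.com/churn-playbook",
    ],
    "best customer success platform for B2B SaaS": [
        "https://docs.example.org/platforms",
        "https://docs.example.org/criteria",
        "https://blog.example.com/comparison",
    ],
    "how to set up health scoring": [
        "https://docs.example.org/health-scores",
        "https://vendor.example.net/guide",
    ],
}
print(top_cited_domains(citations, n=2))
```

A domain that shows up across many prompts tells you which type of page you are competing with; a single URL that wins one prompt tells you which page to study.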

Tune pages (make them citation-shaped)

AI engines cite what’s easy to extract and hard to misinterpret.

That means:

- Clear definitions in 40–80 words

- Structured lists with explicit criteria

- Specific “when to use / when not to use” guidance

- Internal links that reinforce topical authority

- Schema where it’s appropriate (start at Schema.org)

If your content reads like a vibe, you won’t get cited.

Expand coverage (stop betting on one page)

This is where compounding authority comes from.

When one page gets cited, don’t just celebrate it.

Spin up supporting pages that cover:

- Adjacent questions

- Alternative approaches

- Implementation details

- Comparison angles

That’s how you create a cluster that keeps showing up across prompts.

A mid-funnel action checklist you can run this week

- Pick 30 prompts tied to your highest-ACV use case.

- Run them in 2 AI engines your buyers actually use.

- Mark each prompt: cited / named-unlinked / missing.

- For “missing,” list the top 3 cited URLs and what format they use.

- Update one existing page to match the winning format (definition + steps + criteria).

- Re-test weekly for 4 weeks and track citation coverage changes.

That’s a small loop, but it’s enough to start.
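The cited / named-unlinked / missing labeling from the checklist can be sketched as a small function. Everything named here is hypothetical: "Acme" and `acme.example` stand in for your brand and domain, and the brand check is a naive substring match that you would want to tighten for short or ambiguous brand names.

```python
from urllib.parse import urlparse


def label_answer(answer_text, cited_urls, brand, domain):
    """Return 'cited', 'named-unlinked', or 'missing' for one AI answer."""
    for url in cited_urls:
        host = (urlparse(url).hostname or "").lower()
        # Match the domain itself or any subdomain of it.
        if host == domain or host.endswith("." + domain):
            return "cited"
    if brand.lower() in answer_text.lower():
        return "named-unlinked"
    return "missing"


def coverage(labels):
    """Share of prompts labeled 'cited' (0.0 for an empty list)."""
    return labels.count("cited") / len(labels) if labels else 0.0


# Hypothetical answers captured for three prompts.
labels = [
    label_answer("Acme is a common pick.", ["https://www.acme.example/guide"], "Acme", "acme.example"),
    label_answer("Popular options include Acme and VendorA.", ["https://vendora.example/pricing"], "Acme", "acme.example"),
    label_answer("Consider VendorA.", [], "Acme", "acme.example"),
]
print(labels, coverage(labels))
```

Run the same function on each weekly re-test and compare the coverage figure week over week; the labels themselves tell you which prompts to work on next.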

4. Revenue math you can defend (without making up numbers)

The fastest way to get ASV monitoring funded is to stop arguing about “future of search” and start showing loss exposure.

Not fake precision. Defensible ranges.

The simplest model: citations → clicks → conversions

Here’s the model I use with SaaS teams because it maps to things you already track:

- P = number of prompts in your target set (you choose this)

- I = estimated impressions per prompt per month (use a conservative range)

- C = citation coverage rate (from your ASV monitoring baseline)

- CTR = click-through rate when cited (range)

- CVR = conversion rate on the landing page (from GA4 or product analytics)

- ACV = average contract value (from your CRM)

Expected influenced revenue range:

P × I × C × CTR × CVR × ACV

You’re not claiming “this is exact.”

You’re saying: if we move C from 0.2 to 0.6 on the prompts that matter, here’s the exposure.
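The model above is simple enough to sketch as a function. The inputs below are hypothetical placeholders chosen only to show the C = 0.2 versus C = 0.6 comparison; swap in your own ranges. The result covers whatever period your impression estimate covers (one month if I is monthly).

```python
def influenced_revenue(prompts, impressions_per_prompt, coverage, ctr, cvr, acv):
    """P x I x C x CTR x CVR x ACV for a single set of point estimates."""
    return prompts * impressions_per_prompt * coverage * ctr * cvr * acv


def revenue_range(prompts, impressions_per_prompt, coverage, ctr_range, cvr_range, acv):
    """Return (low, high) by pairing the low and high ends of CTR and CVR."""
    low = influenced_revenue(prompts, impressions_per_prompt, coverage,
                             ctr_range[0], cvr_range[0], acv)
    high = influenced_revenue(prompts, impressions_per_prompt, coverage,
                              ctr_range[1], cvr_range[1], acv)
    return low, high


# Hypothetical inputs: 50 prompts, 100 impressions each, $10,000 ACV.
inputs = dict(prompts=50, impressions_per_prompt=100,
              ctr_range=(0.05, 0.10), cvr_range=(0.01, 0.02), acv=10_000)

today = revenue_range(coverage=0.2, **inputs)
target = revenue_range(coverage=0.6, **inputs)
print("today: ", [round(x) for x in today])
print("target:", [round(x) for x in target])
```

Because every term is multiplied, tripling C triples both ends of the range. That is the exposure argument in one line.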

An illustrative example (math, not a promise)

Let’s say you pick 60 prompts that align with your best customer segment.

You assume (conservatively) 200 impressions/month per prompt across the AI surfaces your audience uses.

That’s 12,000 answer impressions.

Now set ranges:

- Today, you’re cited on 10% of them (C = 0.10)

- When cited, you get a 5–15% click rate (CTR = 0.05 to 0.15)

- Your page converts to demo at 1.5–3.0% (CVR = 0.015 to 0.03)

- Your ACV is $12,000

Low end:

- 12,000 × 0.10 × 0.05 × 0.015 × $12,000 = $10,800/month influenced (about $129,600/year)

High end:

- 12,000 × 0.10 × 0.15 × 0.03 × $12,000 = $64,800/month influenced (about $777,600/year)

Again: illustrative.

But now you have a range that makes the “we don’t need ASV monitoring” argument harder.

The part most teams miss: attribution isn’t the point, decisioning is

Trying to get perfect multi-touch attribution for AI answers is a trap.

You can still make good decisions by tracking:

- Citation coverage by prompt category

- Landing page conversion for AI-referred sessions

- Brand search lift in Search Console after you expand coverage

If you want product-level event data, add Mixpanel or Amplitude and tag AI-referred sessions into their own cohort.
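Separating AI-referred sessions into a cohort can start with nothing more than the referrer. The sketch below assumes you can export sessions as (referrer URL, converted) pairs; the hostname list is illustrative and incomplete, so check it against the referrers that actually appear in your own analytics. Clicks from Google surfaces typically arrive with an ordinary Google referrer, so a list like this will not separate them.

```python
from urllib.parse import urlparse

# Illustrative hostnames only; verify against your own referral data.
AI_REFERRER_HOSTS = {
    "chatgpt.com": "chatgpt",
    "chat.openai.com": "chatgpt",
    "perplexity.ai": "perplexity",
    "claude.ai": "claude",
    "copilot.microsoft.com": "copilot",
}


def ai_engine(referrer):
    """Return an engine label for a referrer URL, or None if it is not in the list."""
    host = (urlparse(referrer).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    return AI_REFERRER_HOSTS.get(host)


def conversion_by_cohort(sessions):
    """sessions: iterable of (referrer_url, converted) pairs."""
    totals = {}
    for referrer, converted in sessions:
        cohort = "ai" if ai_engine(referrer) else "other"
        visits, wins = totals.get(cohort, (0, 0))
        totals[cohort] = (visits + 1, wins + int(converted))
    return {cohort: wins / visits for cohort, (visits, wins) in totals.items()}


# Hypothetical exported sessions.
sessions = [
    ("https://chatgpt.com/", True),
    ("https://www.perplexity.ai/search?q=x", False),
    ("https://www.google.com/", False),
    ("https://www.google.com/", True),
    ("https://www.bing.com/", False),
    ("", False),  # direct visit, no referrer
]
rates = conversion_by_cohort(sessions)
print(rates)
```

With a handful of sessions the rates are noise; the point is to get the cohort defined now so the comparison becomes meaningful as volume grows.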

Proof block (baseline → intervention → expected outcome → timeframe)

Baseline: you have no prompt set, no citation coverage number, and AI traffic is mixed into “referral/other.”

Intervention: in week 1, you instrument a 60-prompt set and label outcomes (cited/unlinked/missing). In weeks 2–6, you update 10 pages to be citation-shaped (definitions, criteria lists, internal linking, schema where it fits).

Expected outcome: citation coverage should move first (you can see it in weekly re-tests), then AI-referred sessions should separate into a trackable cohort.

Timeframe: 4–8 weeks to see stable patterns, longer if your site needs foundational authority work.

5. Landing pages built for AI visitors (impression → citation → click → conversion)

This is where teams accidentally light money on fire.

They finally get cited… and the click goes to a page that was designed for “SEO traffic that wants to browse,” not “AI traffic that wants to decide.”

What’s different about AI-referred intent

When someone clicks from an AI answer, they’re usually further along than a generic organic visitor.

They already:

- picked a category

- accepted a shortlist

- have a mental model of tradeoffs

So if your page opens with fluffy positioning, you force them to re-do work they just finished.

The conversion fixes that matter most

I’d prioritize these before you redesign anything:

- Match the prompt: your first screen should mirror the question they asked.

- Show evaluation criteria: “How to choose” beats “Why us” for AI visitors.

- Prove the claim fast: one concrete workflow, one screenshot-worthy example, or one step-by-step.

- Reduce choice: one primary CTA, one secondary CTA.

If you want a clean baseline, set up:

- a dedicated GA4 exploration for AI referrals

- a landing page split test in your tool of choice (Google Optimize was sunset in 2023, so even basic experiments in an alternative like VWO can work)

Technical details that make citation more likely

This stuff is boring, but it’s the difference between “good content” and “citable content.”

- Use descriptive H2s and tight H3s.

- Put definitions early.

- Use consistent naming for your product category.

- Add FAQ content that answers real questions (not keyword stuffing).

- Implement appropriate schema (FAQPage, Article, SoftwareApplication when relevant) using Google’s structured data guidance.
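For the schema item, here is a minimal sketch that builds FAQPage JSON-LD from question and answer pairs using the schema.org `FAQPage`, `Question`, and `Answer` types. The helper name `faq_jsonld` is my own, and the sample pair is taken from the FAQ at the end of this post. Validate the output against Google’s structured data guidance before shipping it.

```python
import json


def faq_jsonld(pairs):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


markup = faq_jsonld([
    (
        "How is ASV monitoring different from SEO monitoring?",
        "SEO monitoring focuses on rankings, clicks, and traffic from traditional "
        "search results. ASV monitoring focuses on prompt-level inclusion inside "
        "AI answers, citation coverage, and downstream performance after the click.",
    ),
])

# Wrap the JSON in the script tag that goes into the page's HTML.
script_tag = (
    '<script type="application/ld+json">\n'
    + json.dumps(markup, indent=2)
    + "\n</script>"
)
print(script_tag)
```

Only mark up questions and answers that are visible on the page, and escape any HTML in answer text before embedding it.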

Common mistakes I see (and how to avoid them)

Mistake 1: You treat AI visibility like rank tracking. Rank tracking is query → SERP position. ASV monitoring is prompt → answer inclusion → citation behavior.

Mistake 2: You publish “ultimate guides” that never take a position. AI engines like clarity. Buyers like clarity. Pick a stance, show tradeoffs.

Mistake 3: You optimize one page and ignore the cluster. Citations compound when you have supporting pages that reinforce each other.

Mistake 4: You send AI clicks to a generic homepage. Send them to a page that matches the prompt, shows criteria, and earns the next step.

The contrarian move that often wins

If you have limited bandwidth, don’t start by chasing the highest-volume prompts.

Start by owning the prompts where the buyer is shortlisting.

It’s less “top of funnel.” It’s more revenue.

FAQ: ASV monitoring questions SaaS teams actually ask in 2026

How is ASV monitoring different from SEO monitoring?

SEO monitoring focuses on rankings, clicks, and traffic from traditional search results. ASV monitoring focuses on prompt-level inclusion inside AI answers, citation coverage (linked and unlinked), and downstream performance after the click.

Which AI engines should we monitor first?

Start with what your buyers use. For many B2B SaaS categories that’s Google AI Overviews plus at least one assistant-style engine (ChatGPT or Claude) and one citation-first engine (Perplexity).

What’s a good “citation coverage” target?

There isn’t a universal benchmark because prompt sets vary. A practical approach is to set a baseline for your revenue-critical prompts, then target incremental lifts (e.g., +10–20 points) over 6–8 weeks with focused page upgrades.

Can we do ASV monitoring without new tools?

Yes. You can start with a maintained prompt set, weekly manual tests, and clean analytics segmentation in GA4. Tools can speed up collection and reporting later, but early wins come from clarity, repeatability, and content changes tied to what engines cite.

What content formats tend to earn citations?

In practice, engines cite pages that are easy to extract: crisp definitions, step-by-step processes, criteria lists, and comparison tables with clear assumptions. Pages that hedge, ramble, or bury the answer usually lose.

How do we connect AI citations to revenue without perfect attribution?

Track what you can measure directly (citation coverage, AI-referred sessions, conversion rate by cohort) and use a conservative revenue model to estimate exposure. The goal is decisioning, meaning knowing what to fix next, not pretending you can attribute every influenced deal perfectly.

If you want to stop guessing where you show up (and what it’s costing you), start with ASV monitoring on a prompt set tied to revenue and build your first citation coverage baseline. Then tell me what you find and what you’re struggling to connect to pipeline.