TL;DR

Teams evaluating Profound alternatives should focus on prompt coverage, citation analytics, workflow integration, and execution capability. The best platforms connect AI visibility insights directly to SEO actions that improve citations and rankings.

AI-generated answers are quickly becoming a primary discovery channel. Teams evaluating platforms like Profound are not just buying analytics dashboards; they are choosing infrastructure that determines whether their brand appears in AI answers at all.

The decision matters because the wrong platform often leads to beautiful reports but little execution. The right platform connects AI visibility insights directly to the content and SEO actions that improve rankings and citations.

A concise rule many operators now follow: an AI visibility platform is only useful if it shows where you appear in AI answers and gives you a clear path to increase those citations.

Why Teams Are Looking for Profound Alternatives

The emergence of AI-driven search has created a new category: platforms that monitor and optimize brand visibility inside AI answers. Tools like Profound are part of this shift.

According to the official platform documentation at Profound, the system focuses on helping brands understand their presence in LLM-based answer engines and zero-click search environments.

That capability matters because traditional SEO tools measure links and rankings, not how AI models actually reference brands.

But many teams evaluating Profound alternatives are asking a broader question:

Should the platform only monitor AI visibility, or should it also help execute the changes needed to improve it?

This question often appears once organizations begin testing tools in real workflows.

A common evaluation pattern

Companies typically discover three major gaps when using AI visibility dashboards alone:

• Data without action
• Monitoring without content workflows
• Visibility insights disconnected from SEO execution

The result is familiar to many marketing teams: reports that explain problems but no system that fixes them.

That is why the conversation around Profound alternatives often centers on capability depth, not just feature lists.

The Core Evaluation Model for AI Visibility Platforms

Operators increasingly evaluate platforms using a simple decision model: data coverage, visibility insights, workflow integration, and execution capability.

These four areas determine whether the platform becomes a growth system or just another analytics dashboard.

1. Data scale and prompt coverage

AI visibility tools rely on monitoring prompts across multiple models.

Large datasets are critical because AI answers vary depending on phrasing, topic, and context.

According to posts from the official Profound account on X, the platform processes more than 15 million prompts daily to monitor brand visibility.

Large prompt datasets allow tools to analyze:

• how often a brand appears in AI answers
• which prompts trigger citations
• where competitors dominate

When evaluating Profound alternatives, teams should ask:

• How many prompts are analyzed daily?
• Are prompts grouped by topic clusters?
• Can prompts be customized to match real buyer questions?

A platform with shallow prompt coverage often produces misleading conclusions about AI visibility.

2. Visibility analytics inside AI answers

Traditional SEO tools show rankings. AI visibility tools must show citation behavior.

This means tracking whether a brand is:

• cited with a link
• mentioned without attribution
• recommended as a solution
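The three citation behaviors above can be told apart with a rough text heuristic. The following is a minimal sketch, not how Profound or any other platform actually classifies answers: the cue phrases, the `classify_appearance` function, and the `acme.example` domain are all hypothetical, and a production system would need something more robust than substring matching.

```python
import re
from enum import Enum


class Appearance(Enum):
    CITED = "cited with a link"
    RECOMMENDED = "recommended as a solution"
    MENTIONED = "mentioned without attribution"
    ABSENT = "not present"


# Hypothetical cue phrases; a real system would need a far richer signal.
RECOMMEND_CUES = ("we recommend", "best option", "top pick", "a good choice")


def classify_appearance(answer, brand, domain):
    """Classify how a brand shows up in one AI answer (illustrative heuristic)."""
    text = answer.lower()
    brand_lc = brand.lower()
    # A link to the brand's domain counts as a citation.
    if domain.lower() in text:
        return Appearance.CITED
    if brand_lc not in text:
        return Appearance.ABSENT
    # Otherwise, look for a recommendation cue in a sentence naming the brand.
    for sentence in re.split(r"(?<=[.!?])\s+", text):
        if brand_lc in sentence and any(cue in sentence for cue in RECOMMEND_CUES):
            return Appearance.RECOMMENDED
    return Appearance.MENTIONED
```

Checking for the link first means an answer that both links to and recommends the brand is counted as a citation, which is an assumption of this sketch rather than an industry convention.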

Platforms like Profound provide modules such as Answer Engine Insights and Agent Analytics, which analyze how AI agents respond to prompts.

Those analytics matter because AI answers frequently appear before organic results, especially in AI summaries and chat-based search.

When comparing Profound alternatives, useful visibility analytics should include:

• citation rate
• brand mention frequency
• share of AI responses
• competitor comparison

These metrics help teams measure whether content strategies actually improve AI answer presence.
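To make these metrics concrete, here is a small sketch of how they could be computed from a batch of monitored answers. The `AnswerRecord` structure and the metric definitions are assumptions made for illustration (vendors may define citation rate or share of responses differently), and the brand names are placeholders.

```python
from dataclasses import dataclass, field


@dataclass
class AnswerRecord:
    """One monitored AI answer (hypothetical structure)."""
    prompt: str
    mentioned: set = field(default_factory=set)  # brands named in the answer
    cited: set = field(default_factory=set)      # brands linked as a source


def visibility_metrics(records, brand):
    """Return assumed definitions of the metrics for a single brand."""
    total = len(records)
    if total == 0:
        return {"citation_rate": 0.0, "mention_rate": 0.0, "share_of_responses": 0.0}
    cited = sum(brand in r.cited for r in records)
    # Mentions without a citation are counted separately from citations.
    mentioned_only = sum(
        brand in r.mentioned and brand not in r.cited for r in records
    )
    return {
        "citation_rate": cited / total,
        "mention_rate": mentioned_only / total,
        "share_of_responses": (cited + mentioned_only) / total,
    }


def compare_brands(records, brands):
    """Competitor comparison: share of responses for each brand."""
    return {b: visibility_metrics(records, b)["share_of_responses"] for b in brands}


sample = [
    AnswerRecord("best ai seo tools", {"Acme", "Rival"}, {"Rival"}),
    AnswerRecord("track llm citations", {"Acme"}, {"Acme"}),
    AnswerRecord("improve ai visibility", {"Rival"}, {"Rival"}),
    AnswerRecord("ai search monitoring"),
]
print(visibility_metrics(sample, "Acme"))
print(compare_brands(sample, ["Acme", "Rival"]))
```

Running the same calculation before and after a content change is one simple way to check whether the change moved AI answer presence.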

3. Workflow integration and operational usability

Data alone rarely changes rankings.

One practical criticism of early AI visibility tools is that they operate as monitoring layers rather than operational systems.

For example, discussions comparing tools in SEO communities often highlight workflow clarity as a deciding factor. A comparison posted on Reddit's SEO Growth community noted that alternatives like Promptwatch can sometimes feel more practical due to clearer link tracking and citation visibility.

This observation reflects a larger pattern: tools must connect insights to execution.

Teams evaluating Profound alternatives should examine whether the platform:

• integrates with content workflows
• links citation gaps to page improvements
• highlights specific pages to update

Without this connection, marketing teams often spend weeks analyzing data but never implementing improvements.

4. Execution capability beyond monitoring

This is where many alternatives diverge.

Some platforms specialize purely in visibility analytics. Others combine analytics with SEO execution.

Execution capabilities may include:

• content opportunity detection
• automated content planning
• optimization guidance
• structured data suggestions

Platforms that include execution reduce the time between identifying an AI visibility gap and publishing the page that fills it.

This difference often determines whether the platform becomes a growth engine or a reporting tool.

A Practical Checklist for Evaluating Profound Alternatives

Teams comparing platforms typically apply a short evaluation checklist before committing to a tool.

Prompt coverage – Does the system analyze enough prompts to reveal real visibility patterns?

Citation tracking – Can it distinguish between mentions, recommendations, and citations?

Competitive analysis – Does it show which competitors appear in the same AI answers?

Content recommendations – Does the platform suggest pages to create or update?

Workflow integration – Can insights be turned into SEO actions quickly?

Platforms that satisfy all five criteria tend to produce measurable improvements in AI visibility.
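For teams that want to record this checklist during vendor trials, it can be expressed as a simple pass/fail scorecard. This is an illustrative sketch only: the criterion keys and the `evaluate_platform` helper are hypothetical names, and the yes/no answers still have to come from hands-on evaluation.

```python
# The five checklist criteria, as hypothetical keys.
CRITERIA = (
    "prompt_coverage",
    "citation_tracking",
    "competitive_analysis",
    "content_recommendations",
    "workflow_integration",
)


def evaluate_platform(answers):
    """Return (criteria met, list of unmet criteria) for one platform.

    `answers` maps criterion name to True/False; missing keys count as unmet.
    """
    missing = [c for c in CRITERIA if not answers.get(c, False)]
    return len(CRITERIA) - len(missing), missing


score, gaps = evaluate_platform({
    "prompt_coverage": True,
    "citation_tracking": True,
    "competitive_analysis": True,
})
print(f"{score}/{len(CRITERIA)} criteria met; missing: {gaps}")
```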

Those that do not often become passive dashboards.

What Many Teams Get Wrong When Choosing AI Visibility Tools

A recurring mistake is assuming AI visibility works like traditional SEO analytics.

In reality, AI answers behave differently from search rankings.

Common mistake 1: choosing tools that only measure

Monitoring AI answers is valuable, but measurement alone rarely improves citations.

Many organizations deploy dashboards that reveal gaps but lack systems for fixing them.

The result is what operators call visibility paralysis: teams see the problem but lack the infrastructure to respond.

Common mistake 2: ignoring prompt intent

AI responses change dramatically depending on prompt wording.

Tracking only generic prompts can hide real opportunities.

Effective monitoring uses prompts that mirror real buyer questions such as:

• "best AI SEO tools for SaaS"
• "how to improve AI search visibility"
• "tools for tracking LLM citations"

Platforms that allow prompt customization usually produce more actionable insights.
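One way to think about prompt customization is as a set of phrasing templates crossed with the topics and audiences a team actually sells to. The sketch below assumes hypothetical templates and a hypothetical `build_prompt_set` helper; it does not reflect any specific platform's prompt format.

```python
from itertools import product

# Hypothetical phrasing templates modeled on buyer-style questions.
TEMPLATES = (
    "best {topic} for {audience}",
    "how to choose {topic} for {audience}",
)


def build_prompt_set(topics, audiences, templates=TEMPLATES):
    """Expand templates into a de-duplicated, order-preserving prompt list."""
    prompts = []
    seen = set()
    for template, topic, audience in product(templates, topics, audiences):
        prompt = template.format(topic=topic, audience=audience)
        if prompt not in seen:
            seen.add(prompt)
            prompts.append(prompt)
    return prompts


for p in build_prompt_set(["AI SEO tools", "LLM citation trackers"], ["SaaS"]):
    print(p)
```

Tracking several phrasings of the same intent helps show whether a visibility gap is real or just an artifact of one wording.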

Common mistake 3: separating AI visibility from SEO operations

AI answers often draw information from high-authority pages.

This means AI visibility improvements frequently require:

• content updates
• schema improvements
• better topic coverage

Tools that isolate AI monitoring from SEO workflows struggle to close those gaps.

Comparing Three Types of Profound Alternatives

Not every alternative competes in the same category. The market currently includes three distinct approaches.

Profound

Profound positions itself as a generative AI marketing intelligence platform designed for enterprise brands focused on AI-driven discovery, as described in its official company profile on LinkedIn.

The platform emphasizes analytics and monitoring of AI answers.

Key capabilities include:

• large-scale prompt analysis
• AI answer monitoring
• agent analytics
• visibility insights

Research and educational content from the platform frequently references large citation datasets, including an analysis of more than 3 billion AI citations discussed on the official Profound YouTube channel.

Organizations evaluating the platform typically adopt it when the primary need is enterprise-scale monitoring of AI responses.

However, some teams exploring Profound alternatives look for systems that connect these insights more directly to SEO execution.

Promptwatch

Promptwatch has gained attention among technical SEO practitioners as an alternative focused on tracking prompts and analyzing AI outputs.

Community comparisons often highlight practical workflow advantages such as clearer link and citation tracking, as discussed in analysis shared within the SEO Growth community on Reddit.

Typical use cases include:

• monitoring AI responses to prompts
• analyzing prompt behavior
• evaluating how AI models reference sources

This makes Promptwatch useful for teams focused heavily on AI prompt analysis and experimentation.

However, like many monitoring-focused tools, it may still require additional systems for executing SEO improvements.

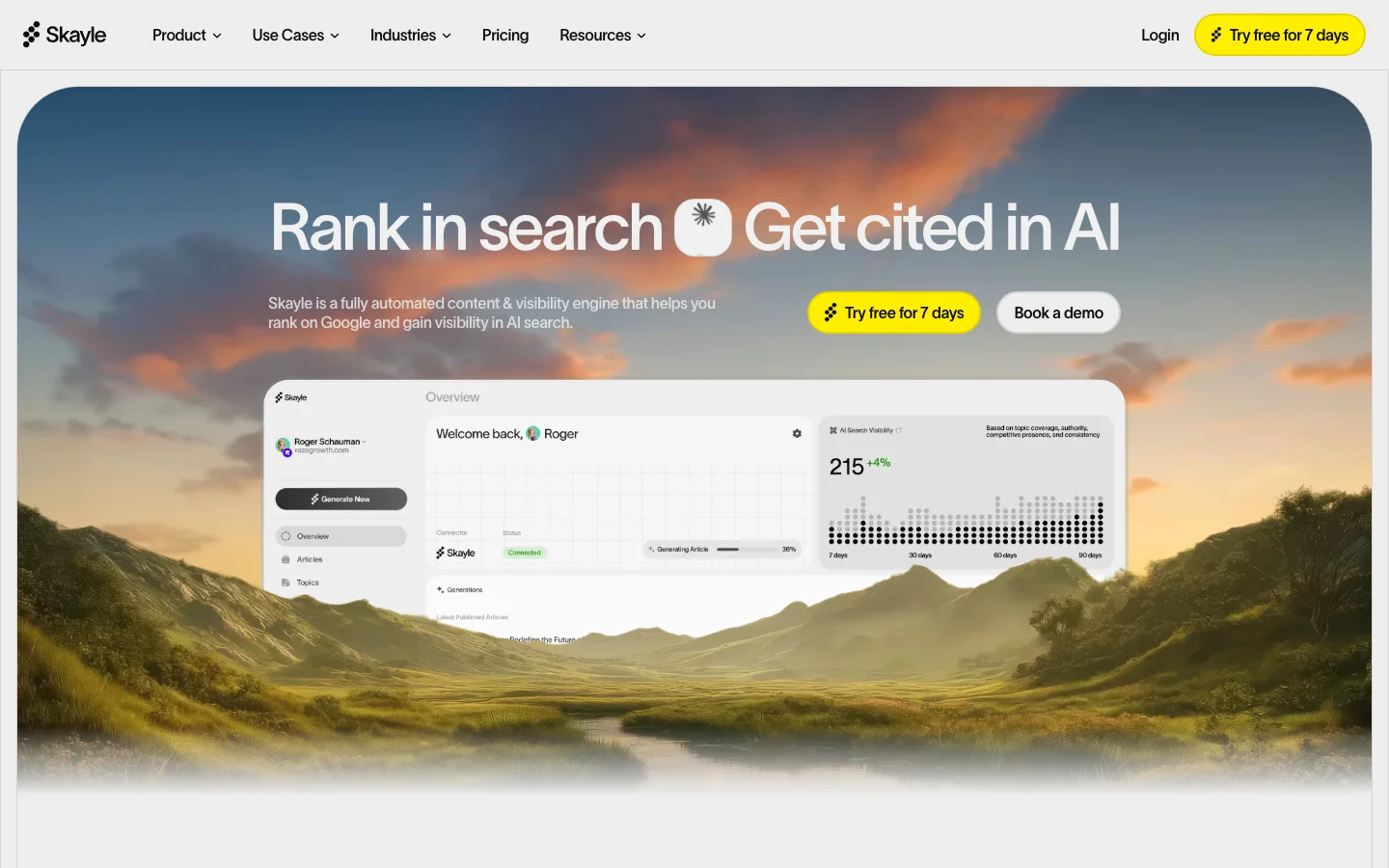

Skayle

Some Profound alternatives take a different approach: combining AI visibility analytics with content execution.

Platforms such as Skayle focus on helping companies rank in search and appear in AI-generated answers, connecting visibility insights directly to content creation and optimization workflows.

Instead of stopping at analytics dashboards, the system helps teams identify visibility gaps and produce the pages required to close them.

This operational approach reflects a broader shift in SEO infrastructure.

Many teams now prefer platforms that unify:

• AI visibility tracking
• content planning
• publishing workflows
• performance monitoring

This integrated model reduces the time between identifying a citation gap and publishing the page that solves it.

A Realistic Implementation Scenario

Consider a SaaS company evaluating Profound alternatives after noticing that competitors appear frequently in AI answers about "programmatic SEO tools."

Baseline measurement shows:

• frequent competitor citations
• limited brand mentions
• no direct citations in AI responses

After identifying the gap, the team publishes a structured comparison page and updates related content.

Within several weeks, monitoring platforms begin showing:

• new brand mentions
• occasional citations in AI answers
• improved visibility in AI-generated recommendations

The outcome demonstrates a simple principle: AI visibility improves when insight and execution happen in the same workflow.

Monitoring without action rarely produces the same result.

FAQ: Profound Alternatives

What does Profound do?

Profound is an AI visibility and marketing intelligence platform that helps brands monitor how they appear in AI-generated answers. It analyzes prompts across AI systems and reports how often brands are cited or mentioned.

How much does Profound cost?

Public pricing information is limited because the platform is typically positioned for enterprise customers. Pricing is usually customized depending on scale, data requirements, and organizational size.

Who is the CEO of the Profound startup?

Public leadership information can typically be found through the company’s official pages such as its Crunchbase profile and LinkedIn listings.

Where is Profound based?

The company profile available on Crunchbase provides information about the organization’s headquarters, funding history, and operational details.

What should companies look for in Profound alternatives?

The most important criteria include prompt coverage, citation tracking, competitor visibility analysis, workflow integration, and execution capability. Platforms that combine monitoring with content execution usually deliver faster visibility improvements.

The Real Decision: Analytics Tool or Visibility System

Choosing between Profound alternatives ultimately comes down to a structural question.

Is the organization looking for analytics about AI answers, or infrastructure that actively improves visibility inside those answers?

Analytics platforms reveal how AI models talk about a brand.

Execution-focused systems help change that narrative.

For companies that rely on organic growth, the second model often produces stronger results because it turns visibility insights into content that AI systems can cite.

Teams exploring platforms in this category should therefore evaluate not just the dashboards they receive, but the actions those dashboards enable.

Understanding where your brand appears in AI answers is valuable. Building the system that improves those citations is where long-term advantage comes from.

Organizations that want to understand how often they appear in AI answers, and how to improve that visibility, should start by measuring their citation coverage and identifying the prompts where competitors dominate. That analysis reveals where content investment will produce the highest return.
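As a starting point, that analysis can be approximated with a few lines of code over exported citation data. The sketch below assumes a hypothetical input format of `(prompt, cited_brand)` pairs, one per citation observed, and ranks prompts by how far the leading competitor is ahead; the brand names are placeholders.

```python
from collections import defaultdict


def competitor_dominated_prompts(observations, brand):
    """List prompts where a rival is cited more often than `brand`.

    `observations` is an iterable of (prompt, cited_brand) pairs.
    Returns (prompt, leading_rival, citation_gap) tuples, largest gap first.
    """
    counts = defaultdict(lambda: defaultdict(int))
    for prompt, cited in observations:
        counts[prompt][cited] += 1

    gaps = []
    for prompt, by_brand in counts.items():
        ours = by_brand.get(brand, 0)
        rivals = [(b, n) for b, n in by_brand.items() if b != brand]
        # Pick the most-cited rival; (None, 0) when no rival was cited.
        top_rival, rival_count = max(rivals, key=lambda x: x[1], default=(None, 0))
        if rival_count > ours:
            gaps.append((prompt, top_rival, rival_count - ours))
    return sorted(gaps, key=lambda g: g[2], reverse=True)


observed = [
    ("programmatic seo tools", "Rival"),
    ("programmatic seo tools", "Rival"),
    ("programmatic seo tools", "Acme"),
    ("llm citation tracking", "Acme"),
    ("ai visibility platforms", "Rival"),
    ("ai visibility platforms", "Other"),
    ("ai visibility platforms", "Rival"),
    ("ai visibility platforms", "Rival"),
]
for prompt, rival, gap in competitor_dominated_prompts(observed, "Acme"):
    print(f"{prompt}: {rival} leads by {gap} citations")
```

The prompts at the top of the list are the ones where new or updated content is most likely to be worth the investment.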