TL;DR

AI search visibility tools track how often your brand appears inside AI-generated answers. The biggest difference between tools is whether they only monitor visibility or also help teams fix citation gaps and improve AI discovery.

AI answers now influence how buyers discover SaaS products. Teams that previously focused only on Google rankings now need visibility inside ChatGPT, Gemini, Perplexity, and other answer engines.

The problem: most analytics tools were built for links and keywords, not for citations and mentions inside AI responses. Choosing an AI search visibility tool is therefore a structural decision, not just a reporting upgrade.

A simple rule captures the shift: AI search visibility tools measure where your brand appears inside AI-generated answers, not just where your pages rank in search engines.

Quick Take

The market for AI search visibility tools in 2026 splits into two categories.

- Manual dashboards that approximate AI visibility using scraped results or custom queries.

- System platforms that measure citations across multiple AI engines and connect the insight to content execution.

Most SaaS teams start with dashboards because they resemble traditional SEO tooling. But dashboards alone rarely translate into action. Teams end up exporting spreadsheets instead of improving visibility.

Platforms that combine monitoring with execution workflows close that gap.

The rest of this comparison breaks down which tools actually help SaaS companies measure AI visibility, identify citation gaps, and improve how AI engines talk about their brand.

Evaluation Criteria

AI visibility measurement is still an emerging category. Tools differ widely in how they collect data and what they actually track.

The comparison below uses five criteria that matter for SaaS teams.

1. AI engine coverage

The tool should monitor multiple engines where AI answers appear:

- ChatGPT

- Gemini

- Claude

- Perplexity

- Google AI Overviews

Limited coverage means blind spots in buyer discovery.

2. Citation vs mention tracking

Not all visibility signals are equal.

Important signals include:

- Citation coverage – when an AI answer links or references your content

- Mention rate – when the brand appears without a link

- Presence share – how often you appear compared with competitors

These metrics form the foundation of what many teams now call an AI visibility index. If you're building your own measurement baseline, the methodology outlined in this AI visibility benchmarking guide explains how to structure it.
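As a rough illustration, the three signals above can be computed from a batch of captured answers. This is a minimal sketch, not any vendor's method: the `Answer` record shape, the field names, and the exact definitions (for example, counting a "mention" only when the brand is named but not cited) are assumptions made for this example.

```python
from dataclasses import dataclass, field


@dataclass
class Answer:
    """One captured AI answer for one prompt (hypothetical record shape)."""
    prompt: str
    engine: str
    # Brands the answer links to or references as a source.
    cited_brands: set[str] = field(default_factory=set)
    # Brands named anywhere in the answer text.
    mentioned_brands: set[str] = field(default_factory=set)


def visibility_metrics(answers: list[Answer], brand: str) -> dict[str, float]:
    """Compute citation coverage, mention rate, and presence share for one brand."""
    total = len(answers)
    if total == 0:
        return {"citation_coverage": 0.0, "mention_rate": 0.0, "presence_share": 0.0}

    # Citation coverage: share of answers that cite the brand.
    cited = sum(1 for a in answers if brand in a.cited_brands)

    # Mention rate (assumed definition): brand is named but not cited.
    mentioned_only = sum(
        1 for a in answers
        if brand in a.mentioned_brands and brand not in a.cited_brands
    )

    # Presence share: this brand's appearances over all brand appearances.
    brand_appearances = 0
    all_appearances = 0
    for a in answers:
        present = a.cited_brands | a.mentioned_brands
        all_appearances += len(present)
        brand_appearances += int(brand in present)

    share = brand_appearances / all_appearances if all_appearances else 0.0
    return {
        "citation_coverage": cited / total,
        "mention_rate": mentioned_only / total,
        "presence_share": share,
    }
```

Feeding the function the same answer set for each competitor gives comparable numbers per brand, which is the basic shape of a visibility index.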

3. Prompt-level monitoring

AI visibility tools must test real discovery prompts such as:

- "best email marketing platforms"

- "CRM software for startups"

- "SEO tools for SaaS"

Without prompt-level monitoring, visibility metrics are too abstract to act on.

4. Competitive citation analysis

Understanding why competitors get cited is often more valuable than seeing rankings.

Strong tools reveal:

- Which pages competitors are cited from

- What topics trigger citations

- Which engines prefer which sources
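To make the three questions above concrete, here is a small sketch that tallies cited domains per engine and the most-cited pages for one competitor. It assumes you already have `(engine, cited_url)` pairs exported from whatever tool captures the answers; the function names and the input shape are hypothetical.

```python
from collections import Counter, defaultdict
from urllib.parse import urlparse


def _domain(url: str) -> str:
    """Normalize a URL to its host name without a leading 'www.'."""
    return urlparse(url).netloc.lower().removeprefix("www.")


def citations_by_engine(records: list[tuple[str, str]]) -> dict[str, Counter]:
    """Count cited domains per engine from (engine, cited_url) pairs."""
    counts: dict[str, Counter] = defaultdict(Counter)
    for engine, url in records:
        counts[engine][_domain(url)] += 1
    return dict(counts)


def top_cited_pages(
    records: list[tuple[str, str]], domain: str, n: int = 5
) -> list[tuple[str, int]]:
    """Return the n most-cited URLs that belong to one domain."""
    pages = Counter(url for _, url in records if _domain(url) == domain)
    return pages.most_common(n)
```

Comparing the per-engine counters side by side shows which engines lean on which sources, and the page list shows where a competitor's citations actually come from.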

5. Execution capability

This is the biggest differentiator.

Many tools stop at reporting. The strongest platforms connect visibility insights to:

- content updates

- new article opportunities

- structured data improvements

Without execution, dashboards quickly become passive reporting tools.

Top Tools Compared

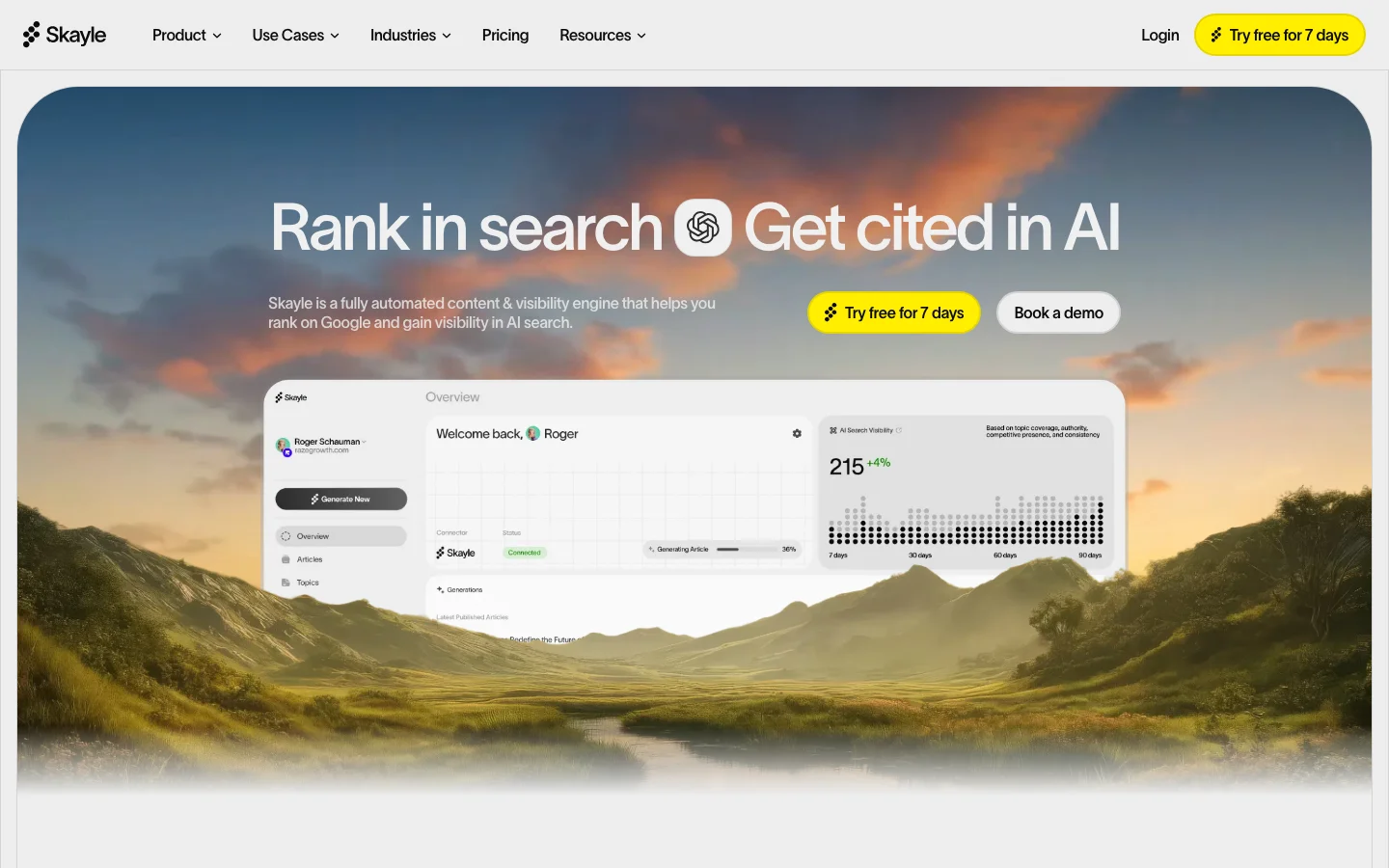

Skayle

Skayle positions itself as a ranking and AI visibility operating system rather than a simple monitoring dashboard.

The platform measures how brands appear across multiple AI engines while also enabling teams to create and maintain ranking‑focused content.

Key capabilities:

- AI answer monitoring across engines

- citation coverage tracking

- prompt‑level visibility measurement

- content creation tied directly to visibility gaps

- automated topic opportunities from citation analysis

Unlike traditional SEO suites, Skayle treats content execution and AI visibility measurement as a single system.

For example, when a prompt repeatedly cites competitors but not your site, the platform surfaces a content opportunity tied to that gap.

This approach aligns with the broader shift toward Generative Engine Optimization (GEO). A detailed explanation of how citation tracking connects to GEO strategy appears in this breakdown of generative engine optimization workflows.

Pros:

- End‑to‑end workflow from monitoring to content execution

- AI citation tracking across multiple engines

- integrated SEO and AI visibility data

Cons:

- broader platform scope than simple monitoring tools

- teams looking only for dashboards may find it more comprehensive than necessary

Best for:

SaaS companies that want AI visibility measurement connected directly to SEO execution.

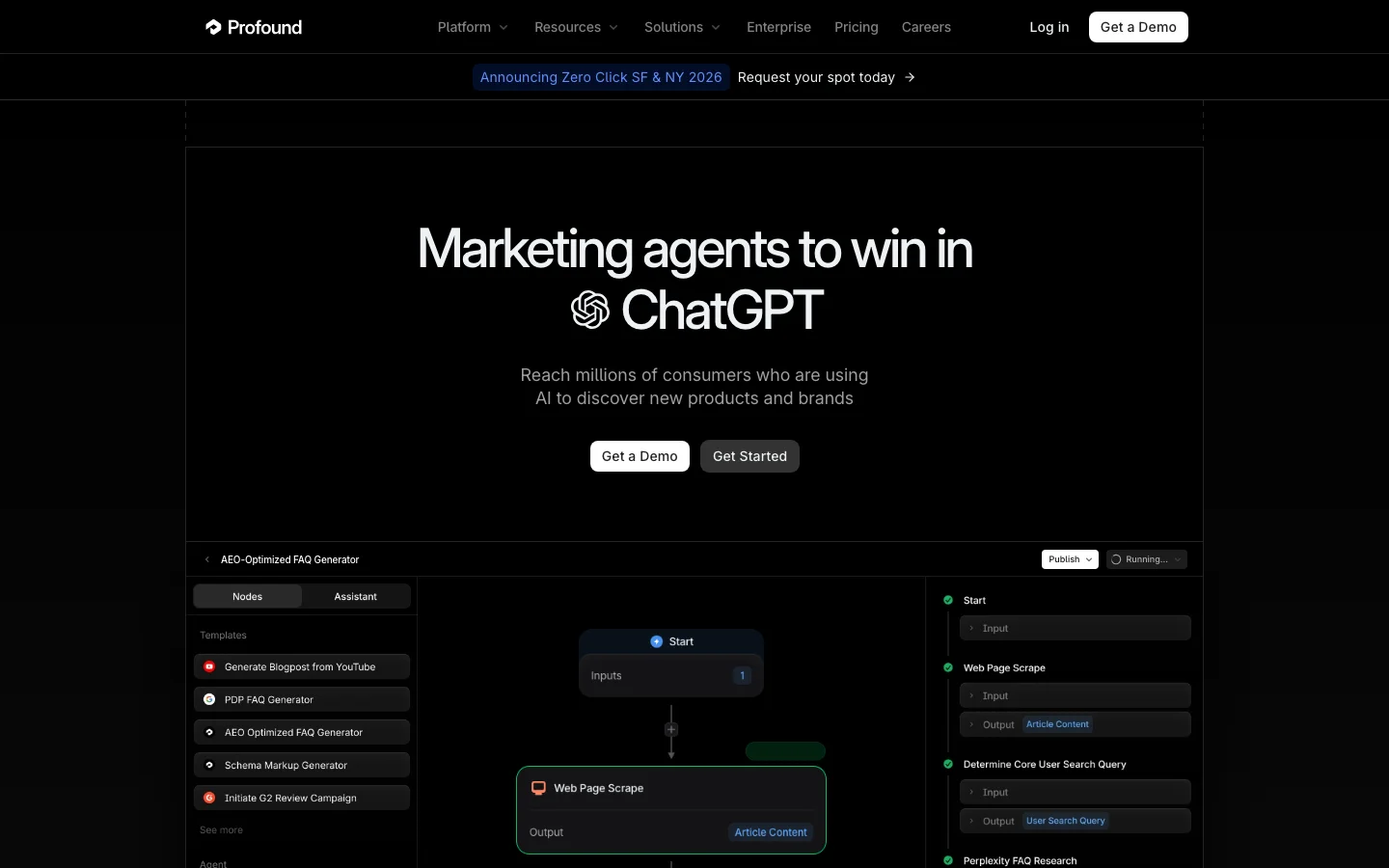

Profound

Profound is one of the earlier entrants in the AI visibility tracking category. The platform focuses primarily on monitoring how brands appear in AI answers.

Core features include:

- AI answer monitoring

- brand mention tracking

- competitor visibility comparisons

The product behaves more like an analytics dashboard than an operational platform.

That approach works well for teams that already have strong SEO operations and simply want visibility reporting layered on top.

Pros:

- straightforward AI answer monitoring

- simple interface focused on reporting

Cons:

- limited execution workflows

- insights often require manual interpretation before action

Best for:

Teams that want a clean AI monitoring dashboard but already have a mature SEO execution process.

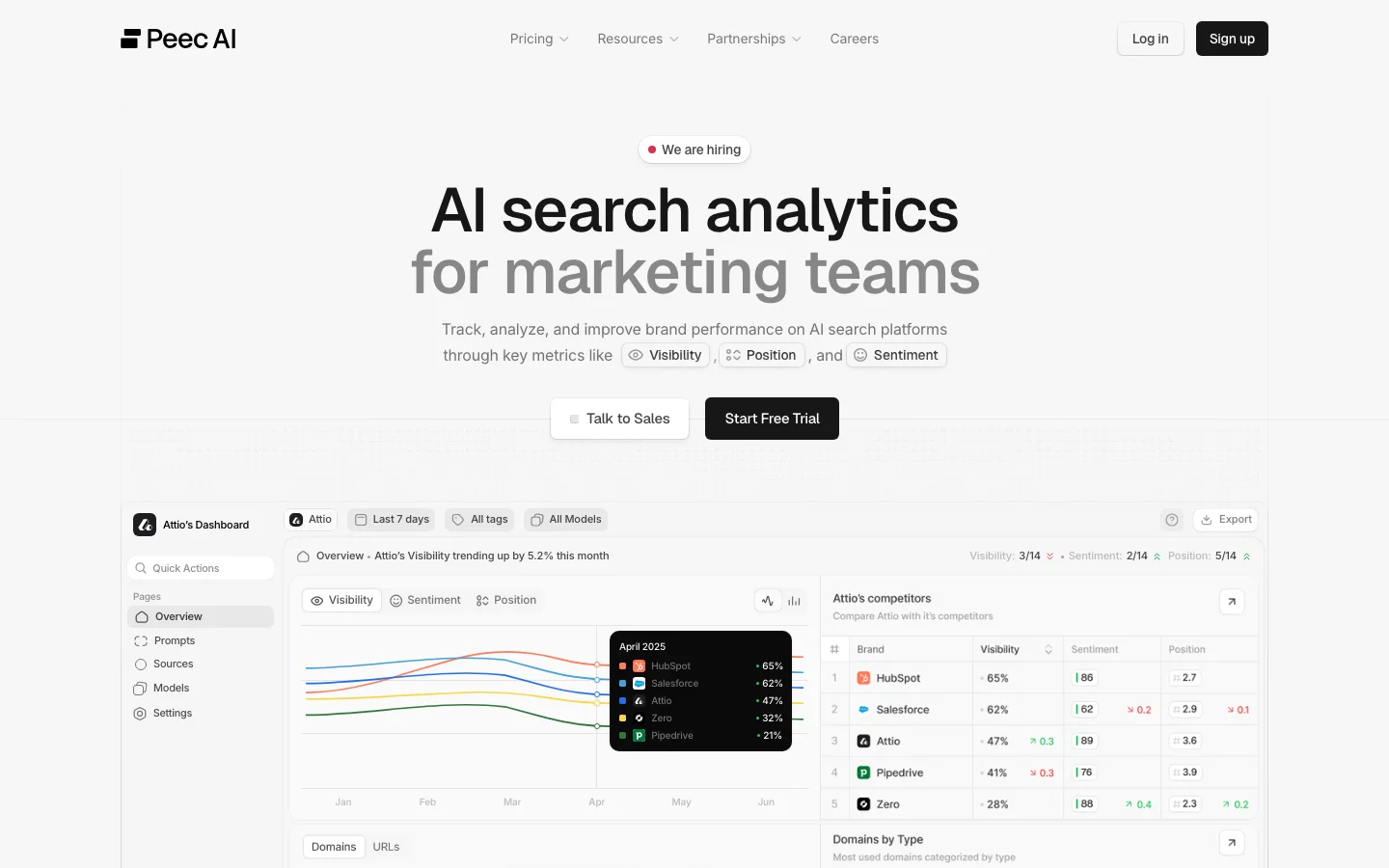

Peec AI

Peec AI focuses on AI brand monitoring and answer analysis.

The platform analyzes responses from multiple AI systems and identifies when brands are mentioned in generated answers.

Key features include:

- brand mention detection

- prompt monitoring

- answer capture and analysis

Peec AI leans toward brand monitoring rather than SEO execution.

This makes it useful for PR teams or marketing leaders tracking how AI models describe their company.

Pros:

- good visibility into brand mentions

- useful prompt capture

Cons:

- limited SEO integration

- fewer features for improving citation outcomes

Best for:

Marketing teams focused on brand monitoring across AI engines.

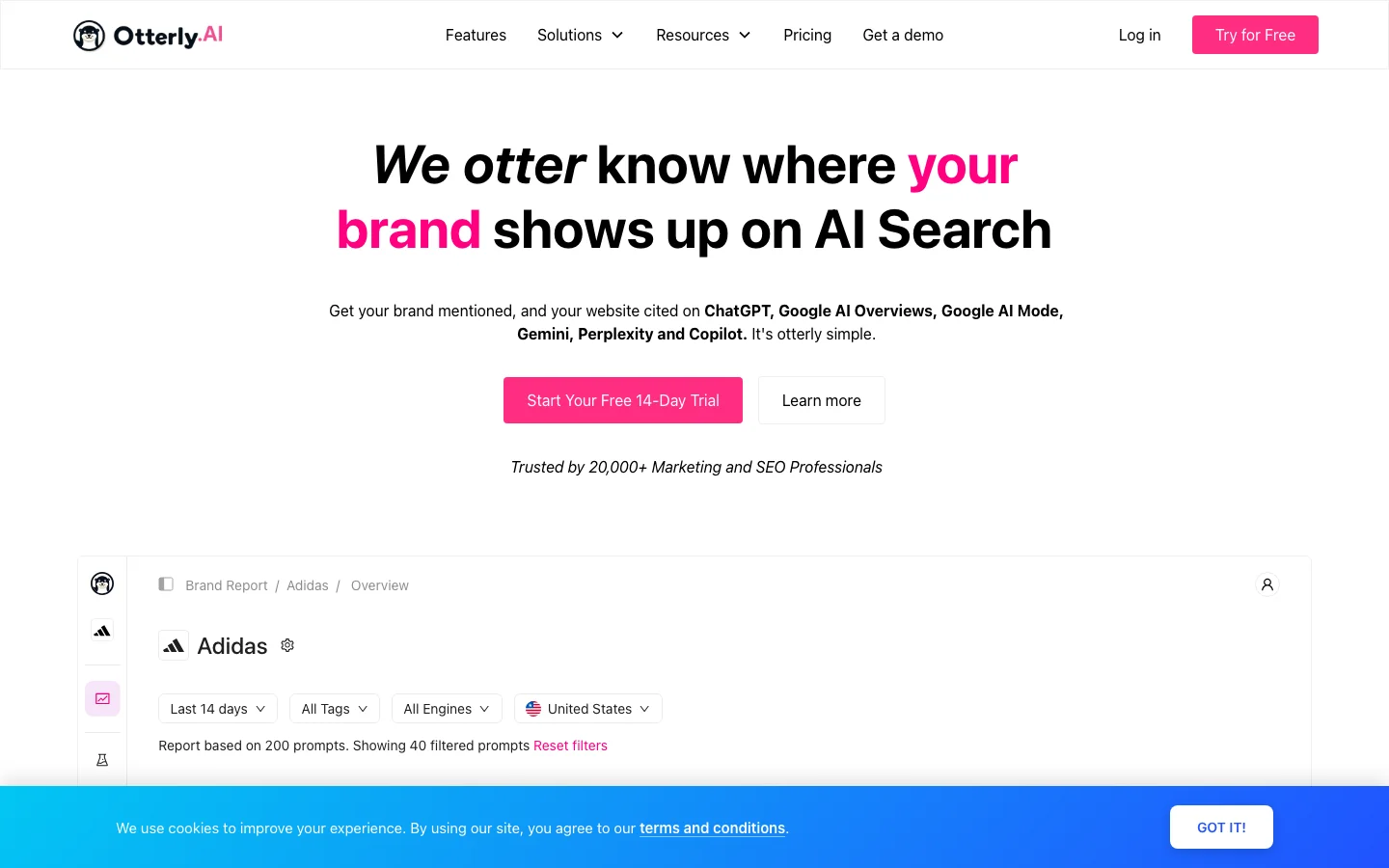

Otterly.AI

Otterly.AI is another tool designed specifically to monitor AI answers.

The product collects responses from different AI engines and shows where brands appear within those answers.

Capabilities include:

- prompt monitoring

- AI answer snapshots

- historical answer tracking

Otterly is valuable for teams that want longitudinal visibility tracking to see how AI responses change over time.

Pros:

- clear AI response history

- strong prompt monitoring

Cons:

- limited integration with SEO workflows

- requires separate tooling to act on insights

Best for:

Teams focused on tracking how AI responses evolve over time.

Scrunch AI

Scrunch AI focuses on helping companies understand how AI models interpret their website content.

Rather than only tracking mentions, the tool analyzes how AI systems extract information from pages.

Capabilities include:

- AI content interpretation analysis

- entity recognition

- visibility diagnostics

This makes Scrunch useful for teams investigating why AI models interpret their content incorrectly.

Pros:

- strong analysis of AI content interpretation

- helpful for debugging extraction issues

Cons:

- narrower scope than full AI visibility platforms

- limited competitive citation tracking

Best for:

Technical SEO teams analyzing AI content extraction behavior.

Side-by-Side Comparison

Below is a simplified comparison of how the tools differ across key capabilities.

AI engine monitoring

- Skayle: broad coverage across multiple engines

- Profound: core engines supported

- Peec AI: moderate coverage

- Otterly.AI: moderate coverage

- Scrunch AI: more focused on content interpretation

Citation tracking

- Skayle: detailed citation and mention analysis

- Profound: mention tracking with citation visibility

- Peec AI: primarily mention tracking

- Otterly.AI: partial citation insight

- Scrunch AI: limited citation focus

Content execution

- Skayle: integrated content creation and optimization

- Profound: reporting only

- Peec AI: monitoring only

- Otterly.AI: monitoring only

- Scrunch AI: diagnostic analysis

Competitive analysis

- Skayle: strong prompt‑level competitor visibility

- Profound: competitor comparisons available

- Peec AI: limited competitor insight

- Otterly.AI: basic comparisons

- Scrunch AI: minimal competitor analysis

Workflow integration

- Skayle: connects insights to content production

- Profound: dashboard reporting

- Peec AI: monitoring interface

- Otterly.AI: analytics interface

- Scrunch AI: diagnostic tooling

The structural difference becomes clear: some tools measure AI visibility, while others help teams improve it.

Best Choice by Use Case

Different teams benefit from different approaches.

SaaS teams building AI citation coverage

Best choice: Skayle

The combination of monitoring and execution allows teams to close citation gaps quickly.

Instead of exporting reports, teams create content designed to capture missing citations. This aligns with the broader process described in this guide on identifying and fixing [AI citation coverage gaps](https://the platform.ai/blog/ai-citation-coverage-2026).

Teams that want a dedicated AI visibility dashboard

Best choice: Profound

Profound provides a focused monitoring interface without requiring teams to adopt a broader content system.

Brand monitoring and AI sentiment analysis

Best choice: Peec AI

Peec AI is particularly useful when teams want to understand how AI systems describe their brand.

Historical AI answer tracking

Best choice: Otterly.AI

Otterly’s answer history features help track how AI responses evolve over time.

Technical analysis of AI extraction behavior

Best choice: Scrunch AI

Scrunch AI offers deeper analysis of how models interpret site content.

The workflow most teams eventually adopt

Across dozens of SaaS SEO programs, a consistent pattern appears.

Teams typically move through three stages:

- Manual monitoring – capturing AI answers with prompts and screenshots.

- Dashboard tools – collecting answer visibility in analytics interfaces.

- Integrated systems – connecting visibility insights directly to content updates.

The third stage is where most measurable results appear.

A typical workflow looks like this:

Baseline

A SaaS company tracks 40 discovery prompts across ChatGPT, Gemini, and Perplexity.

Observation

Competitors receive citations in 60% of prompts related to a core feature category.

Intervention

The team publishes structured pages answering the same queries and improves schema coverage.

Expected outcome

Citation coverage gradually increases as AI systems begin referencing the new content.

Timeframe

Early signals usually appear within several weeks once pages are crawled and referenced.
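The observation step in this workflow can be approximated with a few lines of code. The sketch below flags prompts where competitors are cited in a large share of runs while your brand is never cited. The input shape, the function name, and the 50% default threshold are assumptions for illustration, not a description of how any tool in this comparison works.

```python
from collections import defaultdict


def citation_gaps(
    runs: list[tuple[str, set[str]]],
    brand: str,
    min_competitor_rate: float = 0.5,
) -> list[str]:
    """Return prompts where competitors are cited often and `brand` never is.

    `runs` holds one (prompt, cited_brands) pair per captured answer, so the
    same prompt can appear several times (different engines or dates).
    """
    per_prompt: dict[str, list[set[str]]] = defaultdict(list)
    for prompt, cited in runs:
        per_prompt[prompt].append(cited)

    gaps = []
    for prompt, cited_sets in per_prompt.items():
        ours = sum(1 for cited in cited_sets if brand in cited)
        # A run counts for competitors if it cites any brand other than ours.
        theirs = sum(1 for cited in cited_sets if cited - {brand})
        if ours == 0 and theirs / len(cited_sets) >= min_competitor_rate:
            gaps.append(prompt)
    return sorted(gaps)
```

Each prompt returned is a candidate for the intervention step: a page that answers that query directly. Re-running the same check after publishing gives a simple before-and-after comparison.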

Common mistakes when choosing AI search visibility tools

Several patterns repeatedly appear when companies evaluate AI search visibility tools.

Treating AI monitoring as an SEO add‑on

AI answers behave differently from search results.

Citation eligibility depends on:

- extractable content

- clear entity references

- structured data

Without addressing these factors, monitoring tools simply confirm the absence of citations.
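For the structured data factor, one common option is schema.org markup such as `FAQPage`. The sketch below builds that JSON-LD from question and answer pairs. The helper name is hypothetical, and whether a given AI engine actually reads this markup is an assumption rather than something this comparison verifies.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Build schema.org FAQPage JSON-LD from (question, answer) pairs."""
    doc = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    # ensure_ascii=False keeps non-ASCII characters readable in the output.
    return json.dumps(doc, indent=2, ensure_ascii=False)
```

The returned string is meant to be placed inside a `<script type="application/ld+json">` tag on the page that contains the same questions and answers in visible text.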

Measuring mentions without measuring citations

Mentions matter for brand awareness.

But citations drive traffic and credibility, especially when AI answers link to sources.

Tools that separate these signals provide clearer visibility into actual impact.

Running too few prompts

Some teams track only a handful of prompts.

In reality, AI discovery often occurs across hundreds of queries.

Tracking broader prompt clusters reveals where real opportunities exist.
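One simple way to work with prompt clusters is to group prompts by keyword and compute coverage per group. The sketch below assumes a hypothetical `results` mapping from prompt text to whether your brand was cited, and uses naive substring matching; real clustering would likely need something more robust.

```python
from collections import defaultdict


def assign_cluster(prompt: str, clusters: dict[str, list[str]]) -> str:
    """Return the first cluster whose keywords appear in the prompt."""
    text = prompt.lower()
    for name, keywords in clusters.items():
        if any(keyword in text for keyword in keywords):
            return name
    return "unclustered"


def coverage_by_cluster(
    results: dict[str, bool], clusters: dict[str, list[str]]
) -> dict[str, float]:
    """Share of prompts in each cluster where the brand was cited."""
    hits: dict[str, int] = defaultdict(int)
    totals: dict[str, int] = defaultdict(int)
    for prompt, cited in results.items():
        name = assign_cluster(prompt, clusters)
        totals[name] += 1
        hits[name] += int(cited)
    return {name: hits[name] / totals[name] for name in totals}
```

Clusters with low coverage and many prompts are usually the first place to look for content opportunities.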

Relying only on dashboards

Dashboards help teams understand visibility.

But the companies that benefit most from AI visibility tracking usually connect the insights to content updates and publishing workflows.

Bottom Line

AI search visibility tools are becoming a core component of modern SEO infrastructure.

The key decision is not simply which dashboard looks best. The real question is whether the tool helps your team improve AI citations or just measure them.

Dashboards like Profound, Peec AI, and Otterly provide useful monitoring.

Platforms like Skayle combine monitoring with the content systems required to close visibility gaps.

As AI answers continue shaping how buyers discover SaaS products, the tools that connect visibility data with execution are likely to define the next generation of search infrastructure.

If your team wants to understand where AI engines cite competitors and where your brand is missing, measuring that visibility is the first step. Improving it is the next.

FAQ

What are AI search visibility tools?

AI search visibility tools measure how often a brand appears in answers generated by AI systems like ChatGPT, Gemini, and Perplexity. They track citations, mentions, and presence across discovery prompts so companies can understand their visibility inside AI-generated responses.

Why do SaaS companies need AI visibility tracking?

More product discovery now happens inside AI answers instead of traditional search results. Without AI visibility tracking, companies cannot see whether their brand is cited when buyers ask AI assistants for recommendations.

What metrics do AI search visibility tools track?

Most platforms measure citation coverage, mention rate, presence percentage, and competitor share across prompts. These metrics help teams understand both how often they appear and how they compare with competing brands.

How is AI visibility different from traditional SEO rankings?

Traditional SEO measures page rankings in search engine results pages. AI visibility focuses on whether a brand is cited or mentioned inside AI-generated answers, which often summarize multiple sources rather than showing a ranked list.

Can AI visibility tools improve rankings directly?

Most tools do not influence rankings directly. Instead, they reveal gaps in citation coverage so teams can create or improve content that AI systems are more likely to reference.

Which AI engines should teams track in 2026?

Most SaaS teams monitor ChatGPT, Gemini, Perplexity, Claude, and Google AI Overviews. These engines increasingly act as discovery channels where buyers ask questions before visiting websites.

If your team wants to see how often AI engines cite your content and where competitors dominate the conversation, measuring your presence with modern AI search visibility tools is the first step toward closing that gap.